This post is part of an ongoing discussion with Ron Jeffries, which originated from some comments I made about #NoEstimates. You can read my original "17 Theses on Software Estimation" post here. That post has been completely subsumed by this post if you want to just read this one. You can read Ron's response to my original 17 Theses article here. This post doesn't respond to Ron's post per se. It has been expanded to address points he raised, but responses to him are more implicit than explicit.

Arriving late to the #NoEstimates discussion, I’m amazed at some of the assumptions that have gone unchallenged, and I’m also amazed at the absence of some fundamental points that no one seems to have made so far. The point of this article is to state unambiguously what I see as the arguments in favor of estimation in software and put #NoEstimates in context.

1. Estimation is often done badly and ineffectively and in an overly time-consuming way.

My company and I have taught upwards of 10,000 software professionals better estimation practices, and believe me, we have seen every imaginable horror story of estimation done poorly. There is no question that “estimation is often done badly” is a true observation of the state of the practice.

2. The root cause of poor estimation is usually lack of estimation skills.

Estimation done poorly is most often due to lack of estimation skills. Smart people using common sense is not sufficient to estimate software projects. Reading two page blog articles on the internet is not going to teach anyone how to estimate very well. Good estimation is not that hard, once you’ve developed the skill, but it isn’t intuitive or obvious, and it requires focused self-education or training.

One of the most common estimation problems is people engaging with so-called estimates that are not really Estimates, but that are really Business Targets or requests for Commitments. You can read more about that in my estimation book or watch my short video on Estimates, Targets, and Commitments.

3. Many comments in support of #NoEstimates demonstrate a lack of basic software estimation knowledge.

I don’t expect most #NoEstimates advocates to agree with this thesis, but as someone who does know a lot about estimation I think it’s clear on its face. Here are some examples:

(a) Are estimation and forecasting the same thing? As far as software estimation is concerned, yes they are. (Just do a Google or Bing search of “definition of forecast”.) Estimation, forecasting, prediction--it's all the same basic activity, as far as software estimation is concerned.

(b) Is showing someone several pictures of kitchen remodels that have been completed for $30,000 and implying that the next kitchen remodel can be completed for $30,000 estimation? Yes, it is. That’s an implementation of a technique called Reference Class Forecasting.

(c) Does doing a few iterations, calculating team velocity, and then using that empirical velocity data to project a completion date count as estimation? Yes, it does. Not only is it estimation, it is a really effective form of estimation. I’ve heard people argue that because velocity is empirically based, it isn’t estimation. Good estimation is empirically based, so that argument exposes a lack of basic understanding of the nature of estimation.

(d) Is counting the number of stories completed in each sprint rather than story points, calculating the average number of stories completed each sprint, and using that for sprint planning, estimation? Yes, for the same reasons listed in point (c).

(e) Most of the #NoEstimates approaches that have been proposed, including (c) and (d) above, are approaches that were defined in my book Software Estimation: Demystifying the Black Art, published in 2006. The fact that people are claiming these long-ago-published techniques as "new" under the umbrella of #NoEstimates is another reason I say many of the #NoEstimates comments demonstrate a lack of basic software estimation knowledge.

(f) Is estimation time consuming and a waste of time? One of the most common symptoms of lack of estimation skill is spending too much time on ineffective activities. This work is often well-intentioned, but it’s common to see well-intentioned people doing more work than they need to get worse estimates than they could be getting.

(g) Is it possible to get good estimates? Absolutely. We have worked with multiple companies that have gotten to the point where they are delivering 90%+ of their projects on time, on budget, with intended functionality.

One reason many people find estimation discussions (aka negotiations) challenging is that they don't really believe the estimates they came up with themselves. Once you develop the skill needed to estimate well -- as well as getting clear about whether the business is really talking about an estimate, a target, or a commitment -- estimation discussions become more collaborative and easier.

4. Being able to estimate effectively is a skill that any true software professional needs to develop, even if they don’t need it on every project.

“Estimation often doesn't work very well, therefore software professionals should not develop estimation skill” – this is a common line of reasoning in #NoEstimates. This argument doesn't make any more sense than the argument, "Scrum often doesn't work very well, therefore software professionals should not try to use Scrum." The right response in both cases is, "Get better at the practice," not "Throw out the practice altogether."

#NoEstimates advocates say they're just exploring the contexts in which a person or team might be able to do a project without estimating. That exploration is fine, but until someone can show that the vast majority of projects do not need estimates at all, deciding to not estimate and not develop estimation skills is premature. And my experience tells me that when all the dust settles, the cases in which no estimates are needed will be the exception rather than the rule. Thus software professionals will benefit -- and their organizations will benefit -- from developing skill at estimation.

I would go further and say that a true software professional should develop estimation skill in order to estimate competently on the numerous projects that require estimation. I don't make these claims about software professionalism lightly. I spent four years as chair of the IEEE committee that oversees software professionalism issues for the IEEE, including overseeing the Software Engineering Body of Knowledge, university accreditation standards, professional certification programs, and coordination with state licensing bodies. I spent another four years as vice-chair of that committee. I also wrote a book on the topic, so if you're interested in going into detail on software professionalism, you can check out my book, Professional Software Development. Or you can check out a much briefer, more specific explanation in my company's white paper about our Professional Development Ladder.

5. Estimates serve numerous legitimate, important business purposes.

Estimates are used by businesses in numerous ways, including:

- Allocating budgets to projects (i.e., estimating the effort and budget of each project)

- Making cost/benefit decisions at the project/product level, which is based on cost (software estimate) and benefit (defined feature set)

- Deciding which projects get funded and which do not, which is often based on cost/benefit

- Deciding which projects get funded this year vs. next year, which is often based on estimates of which projects will finish this year

- Deciding which projects will be funded from CapEx budget and which will be funded from OpEx budget, which is based on estimates of total project effort, i.e., budget

- Allocating staff to specific projects, i.e., estimates of how many total staff will be needed on each project

- Allocating staff within a project to different component teams or feature teams, which is based on estimates of scope of each component or feature area

- Allocating staff to non-project work streams (e.g., budget for a product support group, which is based on estimates for the amount of support work needed)

- Making commitments to internal business partners (based on projects’ estimated availability dates)

- Making commitments to the marketplace (based on estimated release dates)

- Forecasting financials (based on when software capabilities will be completed and revenue or savings can be booked against them)

- Tracking project progress (comparing actual progress to planned (estimated) progress)

- Planning when staff will be available to start the next project (by estimating when staff will finish working on the current project)

- Prioritizing specific features on a cost/benefit basis (where cost is an estimate of development effort)

These are just a subset of the many legitimate reasons that businesses request estimates from their software teams. I would be very interested to hear how #NoEstimates advocates suggest that a business would operate if you remove estimates for each of these purposes.

The #NoEstimates response to these business needs is typically of the form, “Estimates are inaccurate and therefore not useful for these purposes” rather than, “The business doesn’t need estimates for these purposes.”

That argument really just says that businesses are currently operating on the basis of much worse predictions than they should be, and probably making poorer decisions as a result, because the software staff are not providing very good estimates. If software staff provided more accurate estimates, the business would make better decisions in each of these areas, which would make the business stronger.

The other #NoEstimates response is that "Estimates are always waste." I don't agree with that. By that line of reasoning, daily stand ups are waste. Sprint planning is waste. Retrospectives are waste. Testing is waste. Everything but code-writing itself is waste. I realize there are Lean purists who hold those views, but I don't buy any of that.

Estimates, done well, support business decision making, including the decision not to do a project at all. Taking the #NoEstimates philosophy to its logical conclusion, if #NoEstimates eliminates waste, then #NoProjectAtAll eliminates even more waste. In most cases, the business will need an estimate to decide not to do the project at all.

In my experience businesses usually value predictability, and in many cases, they value predictability more than they value agility. Do businesses always need predictability? No, there are few absolutes in software. Do businesses usually need predictability? In my experience, yes, and they need it often enough that doing it well makes a positive contribution to the business. Responding to change is also usually needed, and doing it well also makes a positive contribution to the business. This whole topic is a case where both predictability and agility work better than either/or. Competency in estimation should be part of the definition of a true software professional, as should skill in Scrum and other agile practices.

6. Part of being an effective estimator is understanding that different estimation techniques should be used for different kinds of estimates.

One thread that runs throughout the #NoEstimates discussions is lack of clarity about whether we’re estimating before the project starts, very early in the project, or after the project is underway. The conversation is also unclear about whether the estimates are project-level estimates, task-level estimates, sprint-level estimates, or some combination. Some of the comments imply ineffective attempts to combine kinds of estimates -- the most common confusion I’ve read is trying to use task-level estimates to estimate a whole project, which is another example of lack of software estimation skill.

You can see a summary of estimation techniques and their areas of applicability here. This quick reference sheet assumes familiarity with concepts and techniques from my estimation book and is not intended to be intuitive on its own. But just looking at the categories you can see that different techniques apply for estimating size, effort, schedule, and features. Different techniques apply for small, medium, and large projects. Different techniques apply at different points in the software lifecycle, and different techniques apply to Agile (iterative) vs. Sequential projects. Effective estimation requires that the right kind of technique be applied to each different kind of estimate.

Learning these techniques is not hard, but it isn't intuitive. Learning when to use each technique, as well as learning each technique, requires some professional skills development.

When we separate the kinds of estimates we can see parts of projects where estimates are not needed. One of the advantages of Scrum is that it eliminates the need to do any sort of miniature milestone/micro-stone/task-based estimates to track work inside a sprint. If I'm doing sequential development without Scrum, I need those detailed estimates to plan and track the team's work. If I'm using Scrum, once I've started the sprint I don't need estimation to track the day-to-day work, because I know where I'm going to be in two weeks and there's no real value added by predicting where I'll be day-by-day within that two week sprint.

That doesn't eliminate the need for estimates in Scrum entirely, however. I still need an estimate during sprint planning to determine how much functionality to commit to for that sprint. Backing up earlier in the project, before the project has even started, businesses need estimates for all the business purposes described above, including deciding whether to do the project at all. They also need to decide how many people to put on the project, how much to budget for the project, and so on. Treating all the requirements as emergent on a project is fine for some projects, but you still need to decide whether you're going to have a one-person team treating requirements as emergent, or a five-person team, or a 50-person team. Defining team size in the first place requires estimation.

7. Estimation and planning are not the same thing, and you can estimate things that you can’t plan.

Many of the examples given in support of #NoEstimates are actually indictments of overly detailed waterfall planning, not estimation. The simple way to understand the distinction is to remember that planning is about “how” and estimation is about “how much.”

Can I “estimate” a chess game, if by “estimate” I mean how each piece will move throughout the game? No, because that isn’t estimation; it’s planning; it’s “how.”

Can I estimate a chess game in the sense of “how much”? Sure. I can collect historical data on the length of chess games and know both the average length and the variation around that average and predict the length of a game.

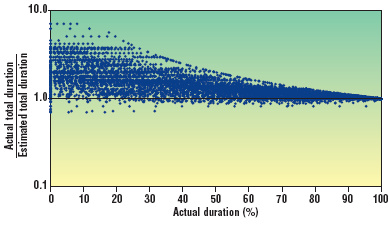

More to the point, estimating an individual software project is not analogous to estimating one chess game. It’s analogous to estimating a series of chess games. People who are not skilled in estimation often assume it’s more difficult to estimate a series of games than to estimate an individual game, but estimating the series is actually easier. Indeed, the more chess games in the set, the more accurately we can estimate the set, once you understand the math involved.
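The arithmetic behind that claim can be sketched in a few lines. This is a minimal illustration, not a full estimation model: it assumes the games' lengths are independent and share the same mean and standard deviation, and the figures used (40 moves on average, 15 moves of standard deviation) are invented for the example rather than taken from historical chess data.

```python
import math

def relative_spread(mean: float, std_dev: float, games: int) -> float:
    """Standard deviation of the series total, as a fraction of the expected total.

    Assumes each game's length is independent, with the same mean and std_dev.
    """
    expected_total = mean * games
    # Variances of independent quantities add, so the spread of the total
    # grows with sqrt(games) while the total itself grows with games.
    total_std_dev = std_dev * math.sqrt(games)
    return total_std_dev / expected_total

# Hypothetical figures, for illustration only.
MEAN_MOVES = 40.0
STD_DEV_MOVES = 15.0

for n in (1, 4, 25, 100):
    spread = relative_spread(MEAN_MOVES, STD_DEV_MOVES, n)
    print(f"{n:>3} games: +/- {spread:.1%} of the expected total")
```

Under these assumptions the relative spread shrinks in proportion to the square root of the number of games, which is why the series is easier to estimate than any single game in it. If the games were strongly correlated, the improvement would be smaller.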

This all goes back to the idea that we need estimates for different purposes at different points in a project. An agile project may be about "steering" rather than estimating once the project gets underway. But it may not be allowed to get underway in the first place if there aren't early estimates that show there's a business case for doing the project.

8. You can estimate what you don’t know, up to a point.

In addition to estimating “how much,” you can also estimate “how uncertain.” In the #NoEstimates discussions, people throw out lots of examples along the lines of, “My project was doing unprecedented work in Area X, and therefore it was impossible to estimate the whole project.” This is essentially a description of the common estimation mistake of allowing high variability in one area to insert high variability into the whole project's estimate rather than just that one area's estimate.

Most projects contain a mix of precedented and unprecedented work (also known as certain/uncertain, high risk/low risk, predictable/unpredictable, high/low variability--all of which are loose synonyms as far as estimation is concerned). Decomposing the work, estimating uncertainty in each area, and building up an overall estimate that includes that uncertainty proportionately is one technique for dealing with uncertainty in estimates.
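As a rough sketch of what that decomposition can look like, the example below combines per-area estimates into a project-level estimate. The area names and numbers are hypothetical, and the calculation assumes the estimation errors in each area are independent (so their variances add); correlated errors would widen the overall range.

```python
import math
from dataclasses import dataclass

@dataclass
class Area:
    name: str
    expected_weeks: float  # best single-number estimate for this area
    std_dev_weeks: float   # uncertainty in this area alone

def combine(areas: list[Area]) -> tuple[float, float]:
    """Return (expected total, standard deviation of the total).

    Assumes the areas' errors are independent, so variances add.
    """
    total = sum(a.expected_weeks for a in areas)
    std_dev = math.sqrt(sum(a.std_dev_weeks ** 2 for a in areas))
    return total, std_dev

# Hypothetical project: two precedented areas and one unprecedented area.
areas = [
    Area("Reporting (precedented)", 10.0, 1.0),
    Area("Account management (precedented)", 12.0, 1.5),
    Area("Area X (unprecedented)", 8.0, 6.0),
]

total, std_dev = combine(areas)
for a in areas:
    print(f"{a.name}: {a.expected_weeks:.0f} weeks, +/- {a.std_dev_weeks / a.expected_weeks:.0%}")
print(f"Whole project: {total:.0f} weeks, +/- {std_dev / total:.0%}")
```

In this made-up case the unprecedented area carries far more relative uncertainty than the project as a whole, because its variability is confined to its own share of the work.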

Why would that ever be needed? Because a business that perceives a whole project as highly risky might decide not to approve the whole project. A business that perceives a project as low to moderate risk overall, with selected areas of high risk, might decide to approve that same project.

9. Both estimation and control are needed to achieve predictability.

Much of the writing on Agile development emphasizes project control over project estimation. I actually agree that project control is more powerful than project estimation; however, effective estimation usually plays an essential role in achieving effective control.

To put this in Agile Manifesto-like terms:

We have come to value project control over project estimation,

as a means of achieving predictability

As in the Agile Manifesto, we value both terms, which means we still value the term on the right.

#NoEstimates seems to pay lip service to both terms, but the emphasis from the hashtag onward is really about discarding the term on the right. This is another case where I believe the right answer is both/and, not either/or.

I wrote an essay when I was Editor in Chief of IEEE Software called "Sitting on the Suitcase" that discussed the interplay between estimation and control and discussed why we estimate even though we know the activity has inherent limitations. This is still one of my favorite essays.

10. People use the word "estimate" sloppily.

No doubt. Lack of understanding of estimation is not limited to people tweeting about #NoEstimates. Business partners often use the word “estimate” to refer to what would more properly be called a “planning target” or “commitment.”

The word "estimate" does have a clear definition, for those who want to look it up.

The gist of these definitions is that an "estimate" is something that is approximate, rough, or tentative, and is based upon impressions or opinion. People don't always use the word that way, and you can see my video on that topic here.

Because people use the word sloppily, one common mistake software professionals make is trying to create a predictive, approximate estimate when the business is really asking for a commitment, or asking for a plan to meet a target, but using the word “estimate” to ask for that. It's common for businesses to think they have a problem with estimation when the bigger problem is with their commitment process.

We have worked with many companies to achieve organizational clarity about estimates, targets, and commitments. Clarifying these terms makes a huge difference in the dynamics around creating, presenting, and using software estimates effectively.

11. Good project-level estimation depends on good requirements, and average requirements skills are about as bad as average estimation skills.

A common refrain in Agile development is “It’s impossible to get good requirements,” and that statement has never been true. I agree that it’s impossible to get perfect requirements, but that isn’t the same thing as getting good requirements. I would agree that “It is impossible to get good requirements if you don’t have very good requirement skills,” and in my experience that is a common case. I would also agree that “Projects usually don’t have very good requirements,” as an empirical observation -- but not as a normative statement that we should accept as inevitable.

Like estimation skill, requirements skill is something that any true software professional should develop, and the state of the art in requirements at this time is far too advanced for even really smart people to invent everything they need to know on their own. Like estimation skill, a person is not going to learn adequate requirements skills by reading blog entries or watching short YouTube videos. Acquiring skill in requirements requires focused, book-length self-study or explicit training or both.

If your business truly doesn’t care about predictability (and some truly don’t), then letting your requirements emerge over the course of the project can be a good fit for business needs. But if your business does care about predictability, you should develop the skill to get good requirements, and then you should actually do the work to get them. You can still do the rest of the project using by-the-book Scrum, and then you’ll get the benefits of both good requirements and Scrum.

From my point of view, I often see agile-related claims that look kind of like this: What practices should you use if you have:

- Mediocre skill in Estimation

- Mediocre skill in Requirements

- Good to excellent skill in Scrum and Related Practices

Not too surprisingly, the answer to this question is, Scrum and Related Practices. I think a more interesting question is, What practices should you use if you have:

- Good to excellent skill in Estimation

- Good to excellent skill in Requirements

- Good to excellent skill in Scrum and related practices

Having competence in multiple areas opens up some doors that will be closed with a lesser skill set. In particular, it opens up the ability to favor predictability if your business needs that, or to favor flexibility if your business needs that. Agile is supposed to be about options, and I think that includes the option to develop in the way that best supports the business.

12. The typical estimation context involves moderate volatility and a moderate level of unknowns.

Ron Jeffries writes, “It is conventional to behave as if all decent projects have mostly known requirements, low volatility, understood technology, …, and are therefore capable of being more or less readily estimated by following your favorite book.” I don’t know who said that, but it wasn’t me, and I agree with Ron that that statement doesn’t describe most of the projects that I have seen.

I think it would be more true to say, “The typical software project has requirements that are knowable in principle, but that are mostly unknown in practice due to insufficient requirements skills; low volatility in most areas with high volatility in selected areas; and technology that tends to be either mostly leading edge or mostly mature." In other words, software projects are challenging, but the challenge level is manageable. If you have developed the full set of skills a software professional should have, you will be able to overcome most of the challenges or all of them.

Of course there is a small percentage of projects that do have truly unknowable requirements and across-the-board volatility. I consider those to be corner cases. It’s good to explore corner cases, but also good not to lose sight of which cases are most common.

13. Responding to change over following a plan does not imply not having a plan.

It’s amazing that in 2015 we’re still debating this point. Many of the #NoEstimates comments literally emphasize not having a plan, i.e., treating 100% of the project as emergent. They advocate a process -- typically Scrum -- but no plan beyond instantiating Scrum.

According to the Agile Manifesto, while agile is supposed to value responding to change, it also is supposed to value following a plan. The Agile Manifesto says, "there is value in the items on the right" which includes the phrase "following a plan."

While I agree that minimizing planning overhead is good project management, doing no planning at all is inconsistent with the Agile Manifesto, not acceptable to most businesses, and wastes some of Scrum's capabilities. One of the amazingly powerful aspects of Scrum is that it gives you the ability to respond to change; that doesn’t imply that you need to avoid committing to plans in the first place.

My company and I have seen Agile adoptions shut down in some companies because an Agile team is unwilling to commit to requirements up front or refuses to estimate up front. As a strategy, that’s just dumb. If you fight your business about providing estimates, even if you win the argument that day, you will still get knocked down a peg in the business’s eyes.

I've commented in other contexts that I have come to the conclusion that most businesses would rather be wrong than vague. Businesses prefer to plant a stake in the ground and move it later rather than avoiding planting a stake in the ground in the first place. The assertion that businesses value flexibility over predictability is Agile's great unvalidated assumption. Some businesses do value flexibility over predictability, but most do not. If in doubt, ask your business.

If your business does value predictability, use your velocity to estimate how much work you can do over the course of a project, and commit to a product backlog based on your demonstrated capacity for work. Your business will like that. Then, later, when your business changes its mind—which it probably will—you’ll still be able to respond to change. Your business will like that even more.
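A minimal sketch of that kind of velocity-based projection follows. The sprint velocities and backlog size are hypothetical, and using the mean plus or minus one sample standard deviation as the optimistic and pessimistic bounds is just one simple choice made for illustration; it is not a prescribed method.

```python
import math
import statistics

def project_sprints(velocities: list[float], backlog_points: float) -> dict[str, int]:
    """Project the number of sprints needed to finish a backlog.

    Uses mean observed velocity for the nominal case, and the mean plus or
    minus one sample standard deviation for a rough range.
    """
    mean = statistics.mean(velocities)
    spread = statistics.stdev(velocities)  # sample standard deviation
    slow = max(mean - spread, 1e-9)        # guard against zero or negative velocity
    return {
        "optimistic": math.ceil(backlog_points / (mean + spread)),
        "nominal": math.ceil(backlog_points / mean),
        "pessimistic": math.ceil(backlog_points / slow),
    }

# Hypothetical data: story points completed in the first four sprints,
# and the points remaining in the product backlog.
observed = [21, 25, 19, 23]
projection = project_sprints(observed, backlog_points=220)
print(projection)
```

The range gives the business a stake in the ground that is grounded in demonstrated capacity, and it can be recomputed after every sprint as more velocity data comes in or the backlog changes.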

14. Scrum provides better support for estimation than waterfall ever did, and there does not have to be a trade off between agility and predictability.

Some of the #NoEstimates discussion seems to interpret challenges to #NoEstimates as challenges to the entire ecosystem of Agile practices, especially Scrum. Many of the comments imply that estimation will somehow impair agility. The examples cited to support that are mostly examples of unskilled misapplications of estimation practices, so I see them as additional examples of people not understanding estimation very well.

The idea that we have to trade off agility to achieve predictability is a false trade off. If we define "agility" to mean, "no notion of our destination" or "treat all the requirements on the project as emergent," then of course there is a trade off, by definition. If, on the other hand, we define "agility" as "ability to respond to change," then there doesn't have to be any trade off. Indeed, if no one had ever uttered the word “agile” or applied it to Scrum, I would still want to use Scrum because of its support for estimation and predictability, as well as for its support for responding to change.

The combination of story pointing, velocity calculation, product backlog, short iterations, just-in-time sprint planning, and timely retrospectives after each sprint creates a nearly perfect context for effective estimation. To put it in estimation terminology, story pointing is a proxy based estimation technique. Velocity is calibrating the estimate with project data. The product backlog (when constructed with estimation in mind) gives us a very good proxy for size. Sprint planning and retrospectives give us the ability to "inspect and adapt" our estimates. All this means that Scrum provides better support for estimation than waterfall ever did.

If a company truly is operating in a high uncertainty environment, Scrum can be an effective approach. In the more typical case in which a company is operating in a moderate uncertainty environment, Scrum is well-equipped to deal with the moderate level of uncertainty and provide high predictability (e.g., estimation) at the same time.

15. There are contexts where estimates provide little value.

I don’t estimate how long it will take me to eat dinner, because I know I’m going to eat dinner regardless of what the estimate says. If I have a defect that keeps taking down my production system, the business doesn’t need an estimate for that because the issue needs to get fixed whether it takes an hour, a day, or a week.

The most common context I see where estimates are not done on an ongoing basis and truly provide little business value is online contexts, especially mobile, where the cycle times are measured in days or shorter, the business context is highly volatile, and the mission truly is, “Always do the next most useful thing with the resources available.”

In both these examples, however, there is a point on the scale at which estimates become valuable. If the work on the production system stretches into weeks or months, the business is going to want and need an estimate. As the mobile app matures from one person working for a few days to a team of people working for a few weeks, with more customers depending on specific functionality, the business is going to want more estimates. As the group doing the work expands, they'll need budget and headcount, and those numbers are determined by estimates. Enjoy the #NoEstimates context while it lasts; don’t assume that it will last forever.

16. This is not religion. We need to get more technical and more economic about software discussions.

I’ve seen #NoEstimates advocates treat these questions of requirements quality, estimation effectiveness, agility, and predictability as value-laden moral discussions. "Agile" is a compliment and "Waterfall" is an invective. The tone of the argument is more moral than economic. The arguments are of the form, "Because this practice is good," rather than of the form, "Because this practice supports business goals X, Y, and Z."

That religion isn’t unique to Agile advocates, and I’ve seen just as much religion on the non-Agile sides of various discussions. It would be better for the industry at large if people could stay more technical and economic more often.

Agile is About Creating Options, Right?

I subscribe to the idea that engineering is about doing for a dime what any fool can do for a dollar, i.e., it's about economics. If we assume professional-level skills in agile practices, requirements, and estimation, the decision about how much work to do up front on a project should be an economic decision about which practices will achieve the business goals in the most cost-effective way. We consider issues including the cost of changing requirements and the value of predictability. If the environment is volatile and a high percentage of requirements are likely to spoil before they can be implemented, then it’s a bad economic decision to do lots of up front requirements work. If predictability provides little or no business value, emphasizing up front estimation work would be a bad economic decision.

On the other hand, if predictability does provide business value, then we should support that in a cost-effective way. If we do a lot of the requirements work up front, and some requirements spoil, but most do not, and that supports improved predictability, that would be a good economic choice.

The economics of these decisions are affected by the skills of the people involved. If my team is great at Scrum but poor at estimation and requirements, the economics of up front vs. emergent will tilt toward Scrum. If my team is great at estimation and requirements but poor at Scrum, the economics will tilt toward estimation and requirements.

Of course, skill sets are not divinely dictated or cast in stone; they can be improved through focused self-study and training. So we can treat the decision to invest in skills development as an economic issue too.

Decision to Develop Skills is an Economic Decision Too

What is the cost of training staff to reach competency in estimation and requirements? Does the cost of achieving competency exceed the likely benefits that would derive from competency? That goes back to the question of how much the business values predictability. If the business truly places no value on predictability, there won’t be any ROI from training staff in practices that support predictability. But I do not see that as the typical case.

My company and I can train software professionals to approach competency in both requirements and estimation in about a week. In my experience most businesses place enough value on predictability that investing a week to make that option available provides a good ROI to the business. Note: this is about making the option available, not necessarily exercising the option on every project.

My company and I can also train software professionals to approach competency in a full complement of Scrum and other Agile technical practices in about a week. That produces a good ROI too. In any given case, I would recommend both sets of training. If I had to recommend only one or the other, sometimes I would recommend starting with the Agile practices. But my real recommendation is to "embrace the and" and develop both sets of skills.

For context about training software professionals to "approach competency" in requirements, estimation, Scrum, and other Agile practices, I am using that term based on work we've done with our Professional Development Ladder. In that ladder we define capability levels of "Introductory," "Competence," "Leadership," and "Mastery." A few days of classroom training will advance most people beyond Introductory and much of the way toward Competence in a particular skill. Additional hands-on experience, mentoring, and feedback will be needed to cement Competence in an area. Classroom study is just one way to acquire these skills. Self-study or working with an expert mentor can work about as well. The skills aren't hard to learn, but they aren't self-evident either. As I've said above, the state of the art in estimation, requirements, and agile practices has moved well beyond what even a smart person can discover on their own. Focused professional development of some kind or other is needed to acquire these skills.

Is a week enough to accomplish real competency? My company has been training software professionals for almost 20 years, and our consultants have trained upwards of 50,000 software professionals during that time. All of our consultants are highly experienced software professionals first, trainers second. We don't have any methodological ax to grind, so we focus on what is best for each individual client. We all work hands-on with clients so we know what is actually working on the ground and what isn't, and that experience feeds back into our training. We have also invested heavily in training our consultants to be excellent trainers. As a result, our service quality is second to none, and we can make a tremendous amount of progress with a few days of training. Of course, additional coaching, mentoring, and support are always helpful.

17. Agility plus predictability is better than agility alone.

Skills development in practices that support estimation and predictability vs. practices that support agility is not an either/or choice. A truly agile business would be able to be flexible when needed, or predictable when needed. A true software professional will be most effective when skilled in both.

If you think your business values agility only, ask your business what it values. Businesses vary, and you might work in a business that truly does value agility over predictability or that values agility exclusively. Many businesses value predictability over agility, however, so don't just assume it's one or the other.

I think it’s self-evident that a business that has both agility and predictability will outperform a business that has agility only. With today's powerful agile practices, especially Scrum, there's no reason we can't have both.

Overall, #NoEstimates seems like the proverbial solution in search of a problem. I don't see businesses clamoring to get rid of estimates. I see them clamoring to get better estimates. The good news for them is that agile practices, Scrum in particular, can provide excellent support for agility and estimation at the same time.

My closing thought, in this hashtag-happy discussion, is that #AgileWithEstimationWorksBest -- and #EstimationWithAgileWorksBest too.

Resources
