They can cause layout shifts; on mobile the banner is sometimes the Largest Contentful Paint (LCP) element; they visually obscure other parts of the page; and, if implemented correctly, only some of the page's resources will be fetched.

When analysing sites, one of my first steps is to work out how to bypass any consent banners so I can get a more complete view of page performance.

This post covers some of the performance issues related to consent banners, how I bypass the banners, and my approach to working out which cookies or localStorage items I need to set to bypass them.

Simon Hearne wrote about Measuring Performance behind Content Popups in May 2020, and while there's some overlap I'd recommend you read Simon's post too.

Challenges that Banners Bring

Layout Shifts

Some banners are displayed at the top of the page, before other content, and if the banner is inserted after rendering starts there's a danger that the content below it will shift downwards.

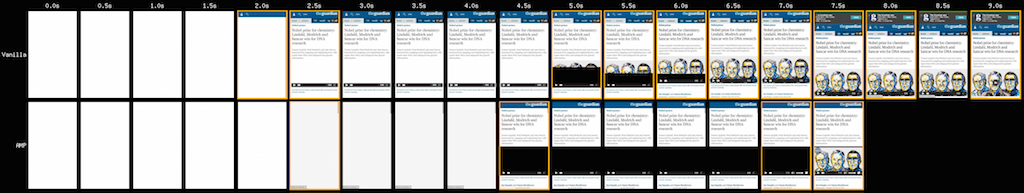

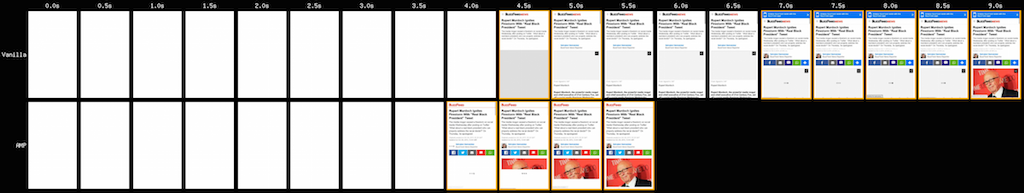

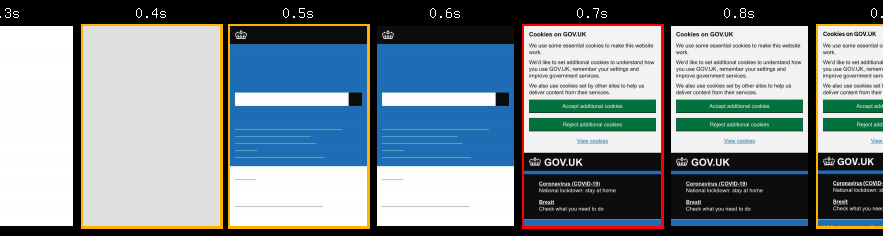

In a filmstrip of gov.uk there's a layout shift at 0.7s when the consent banner is displayed (they have plans to address the issue in the future).

gov.uk – London, Chrome, Cable

gov.uk – London, Chrome, Cable

One way of eliminating this shift would be to adopt the approach Zach Leatherman took with a banner on Netlify: include the banner directly in the page, but hide it when it's not required.

Another is to use a pop-over approach where the consent banner is positioned over the page content.

Largest Contentful Paint

On some pages, particularly on mobile, parts of the consent banner get detected as the Largest Contentful Paint element.

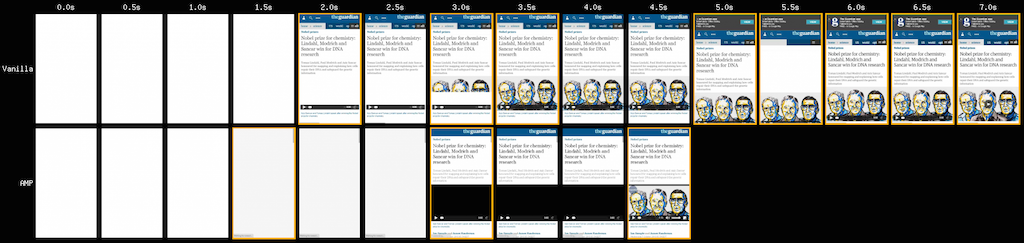

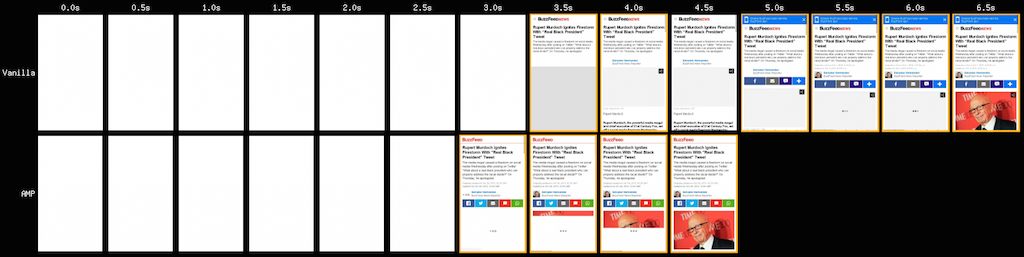

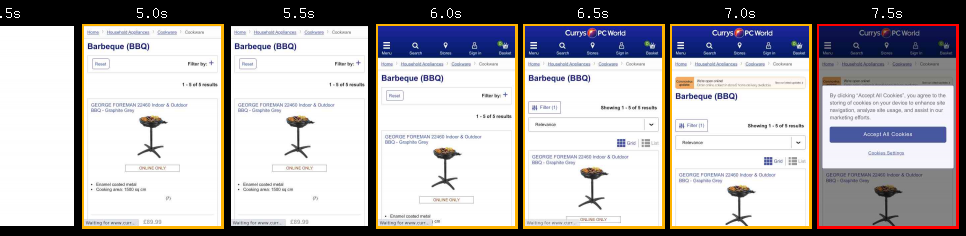

Currys PC World have this issue on their category pages, but not on their product pages, so removing the banner is important if we want to get comparable measurements between the different page types.

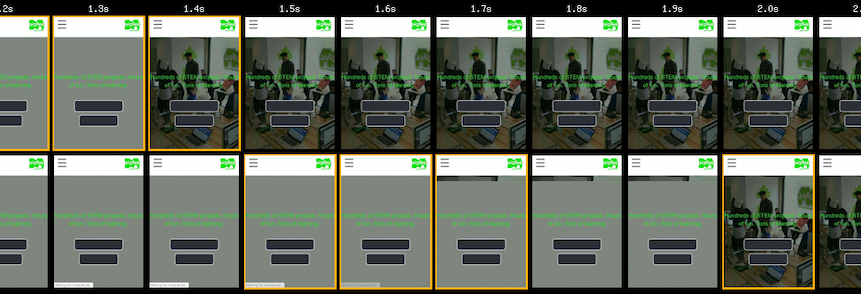

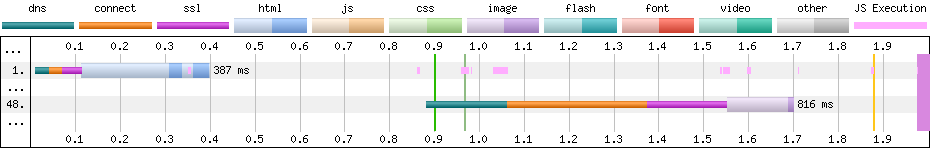

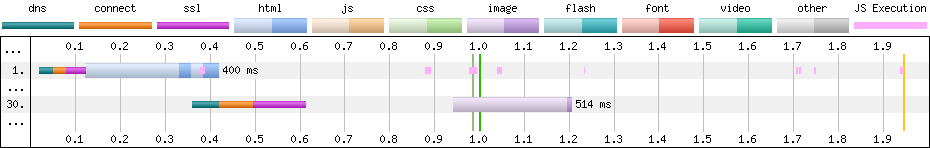

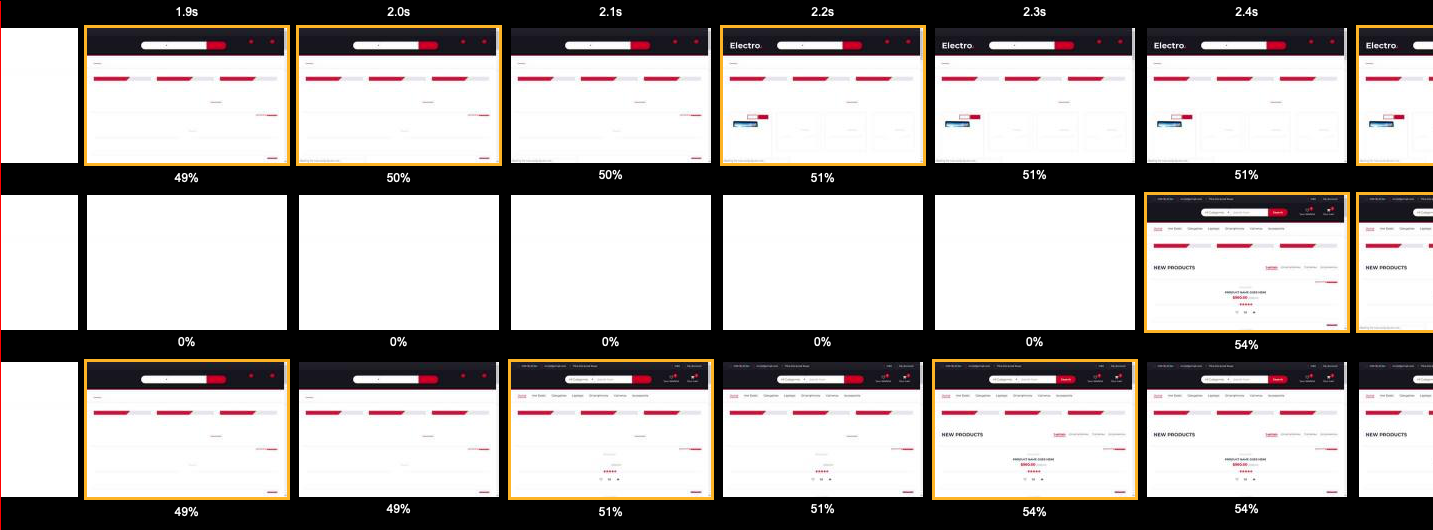

Currys PC World – London, Chrome, Cable

Currys PC World – London, Chrome, Cable

Other sites have very similar banners, yet a different element is counted as the Largest Contentful Paint, so it's important to check which element is being used.

Obscure Key Content

Filmstrips are a powerful way to convey performance to anyone, regardless of their web performance knowledge, and I rely on them to help clients understand what the current experience is, and to demonstrate how it improves as optimisations are implemented.

And as consent banners can cover up the key content, they just get in the way!

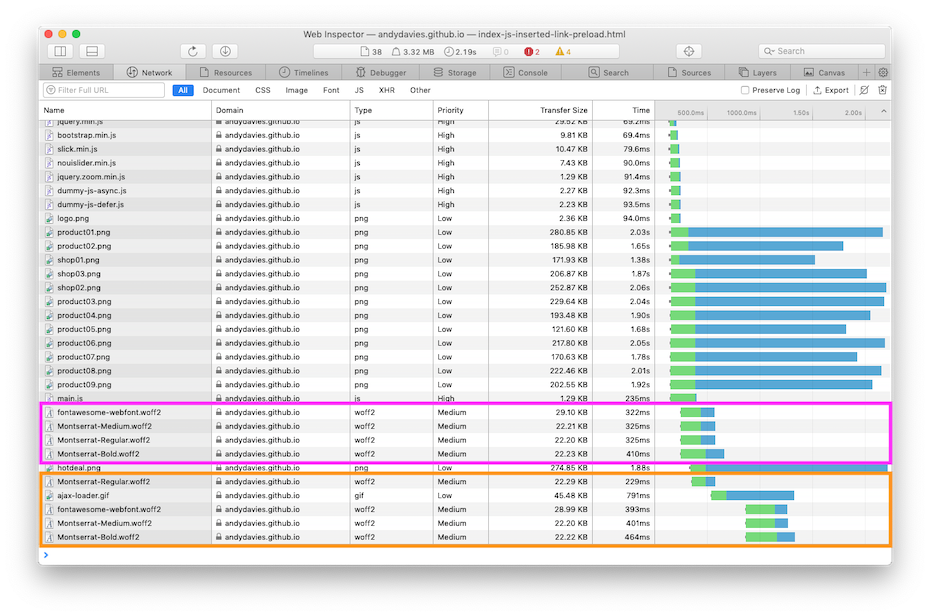

Partial Measurement

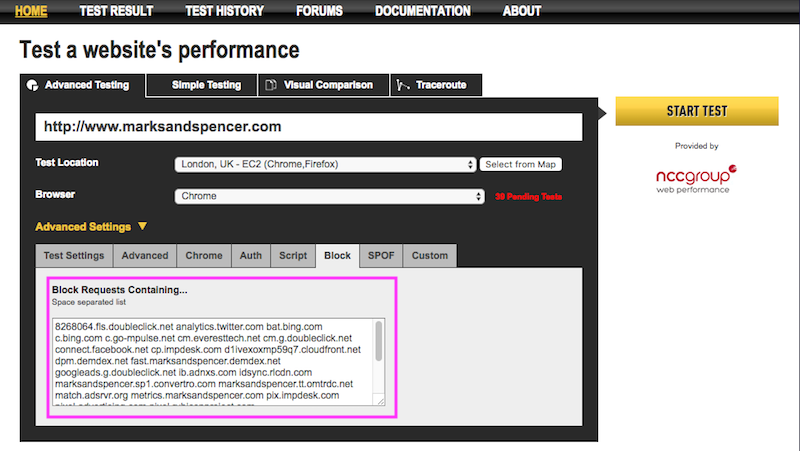

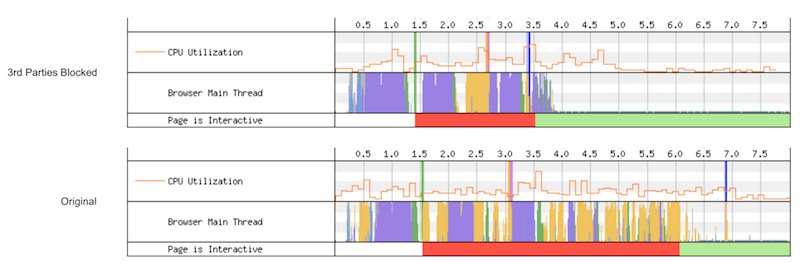

In countries where opt-in consent is required for 3rd-party data collection, the banner should delay the load of such scripts until consent is given.

These extra scripts influence performance – at the very least they'll increase the total bytes downloaded but often they'll also introduce long tasks, layout shifts and other behaviour that affects performance metrics too.

Bypassing Cookie Consent

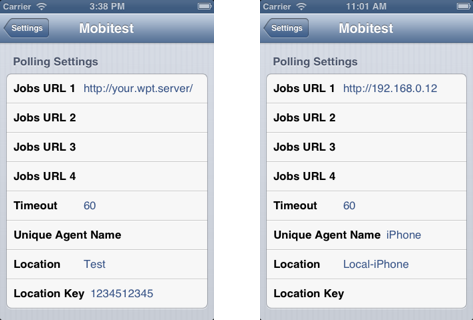

Some sites add a 'developer option' to their consent banners, for example a query string parameter that prevents the banner from being displayed.

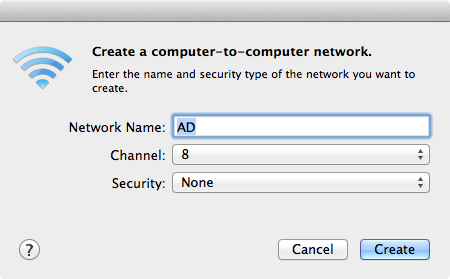

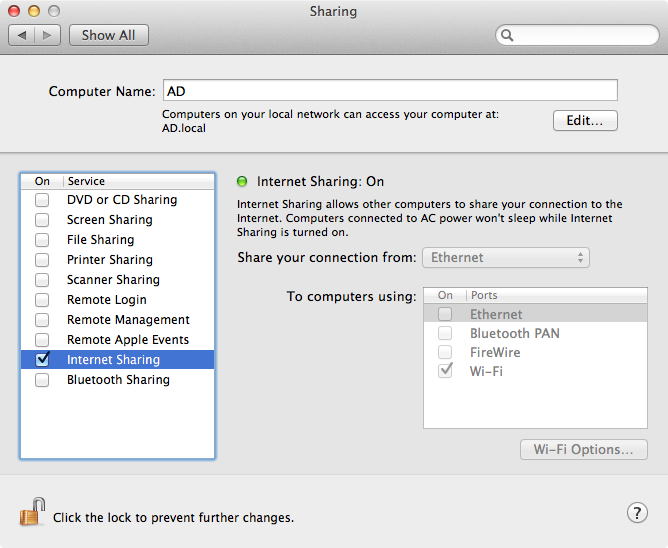

This makes testing with and without consent banners much easier, but often this option doesn't exist, and sometimes banners are provided by a third-party service, so in these cases cookies typically need to be set to avoid them.

Lighthouse

By default, Lighthouse doesn't support cookies, so web.dev/measure, PageSpeed Insights etc. will test the site with the consent banner shown.

And if the consent banner is configured correctly, then in opt-in regions (e.g. those covered by GDPR) the Lighthouse score will be based on a partial page load, i.e. without any 3rd-party tags.

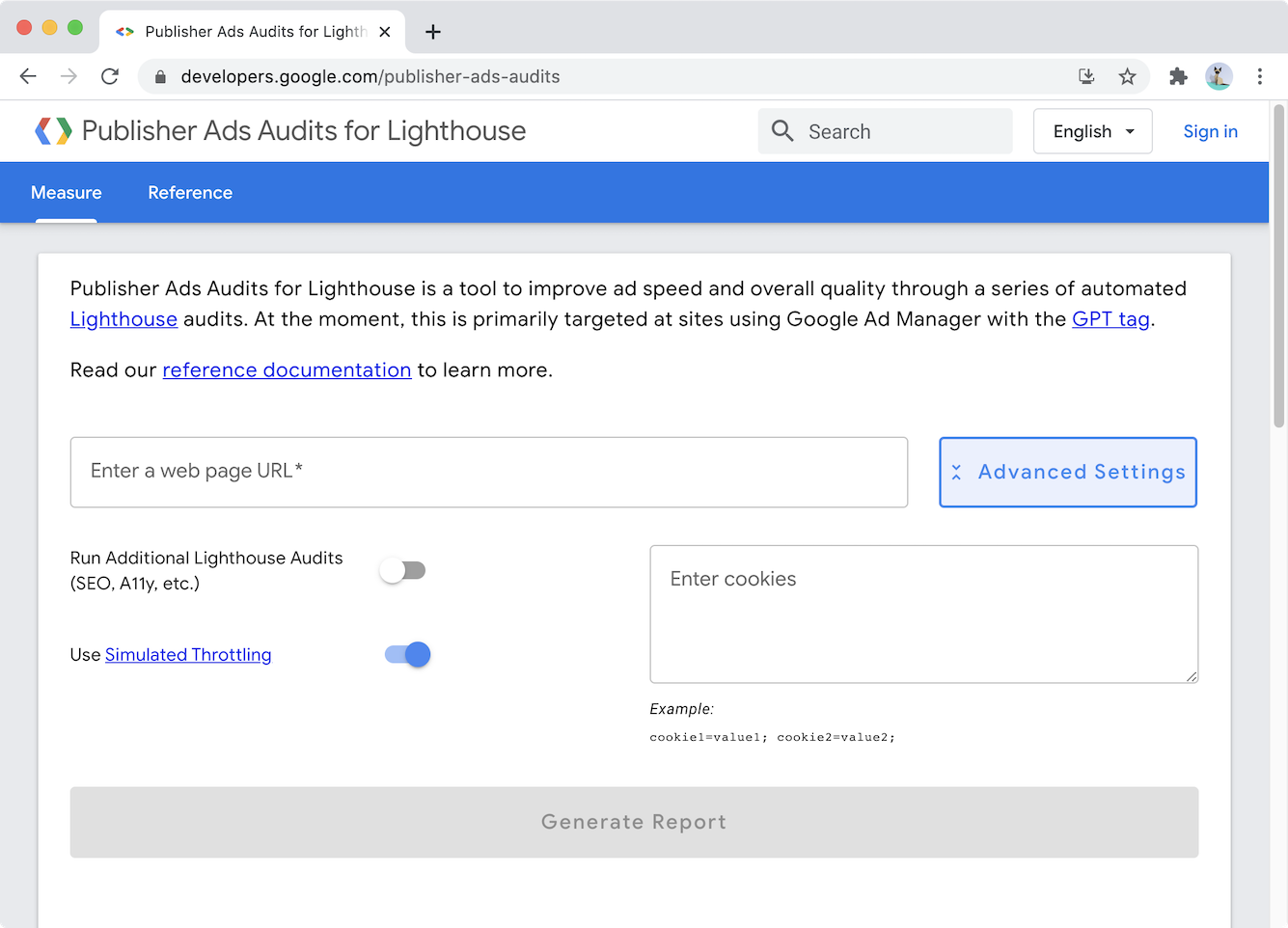

But Publisher Ad Audits for Lighthouse does allow cookies to be set – click the Advanced Settings button, and paste the cookie into the relevant field.

If you need a score, or advice on how to improve, using this version of Lighthouse is a quick way to get it.

Cookies can also be set in Lighthouse CLI and CI.
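For the Lighthouse CLI, one option is the --extra-headers flag, which takes a JSON object of HTTP headers. The sketch below builds that value from cookie name/value pairs copied out of DevTools; buildExtraHeaders is a hypothetical helper and the cookie names and values are placeholders. One caveat: a Cookie request header added this way is only seen by servers and doesn't populate the browser's cookie jar, so a banner script that reads document.cookie won't see it. It's most likely to help where the server decides whether to render the banner.

```javascript
// Minimal sketch: build the JSON value for Lighthouse CLI's --extra-headers flag
// from consent cookie name/value pairs (placeholder names and values below).
function buildExtraHeaders(cookies) {
  const cookieHeader = Object.keys(cookies)
    .map((name) => name + '=' + cookies[name])
    .join('; ');
  return JSON.stringify({ Cookie: cookieHeader });
}

const headers = buildExtraHeaders({ consent_cookie: 'abc123', other_cookie: 'yes' });

// Print a command line with the JSON quoted for the shell
console.log("lighthouse https://example.com --extra-headers='" + headers + "'");
```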

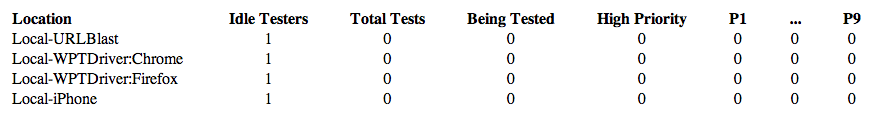

WebPageTest

Often I want more than Lighthouse provides…

I want waterfalls so I can dig into network performance, filmstrips to demonstrate the benefits of optimisations, and Request Maps to help understand the 3rd-parties on a page.

And most of the time I use WebPageTest to generate this data with one of these two approaches for bypassing consent banners.

I either set cookies via the setCookie script command:

```
setCookie https://%HOST% cookie_name=...
navigate %URL%
```

Note: %URL% and %HOST% are WebPageTest script variables that will be replaced with the relevant part of the URL being tested. One day I'll submit a PR to add %ORIGIN% so https://%HOST% can be replaced with something more friendly.

Or via an injected script:

```javascript
document.cookie='cookie_name=...';
```

There's a slight difference between the two WebPageTest approaches in when the cookie is set.

In the first approach, the cookie is set before navigation, so the cookie is available to any inline scripts that are early in the page, or any server-side processes that might generate an inline cookie banner.

Whereas the second approach sets the cookie after the browser starts to receive the HTML content, which might be too late for some cookie banners but allows extra commands such as setting localStorage items to be added.

Another advantage of the injected script approach is the script can also be tested in the DevTools console – clear storage, execute the script in the console, then reload the page and if the script's correct the consent banner shouldn't appear.

Sometimes Cookies aren't Enough

Some consent banners – IAB EU Consent Management Providers (CMPs) such as Quantcast Choice – also use local storage so just setting cookies isn't enough to bypass them.

To bypass these types of consent banners, I rely on injecting a custom script in WebPageTest.

For Quantcast Choice, the injected script looks like this (values omitted):

```javascript
localStorage.setItem('CMPList', '...');
localStorage.setItem('noniabvendorconsent', '...');
localStorage.setItem('_cmpRepromptHash', '...');
document.cookie = 'euconsent-v2=...;';
document.cookie = 'addtl_consent=...;';
```

Creating these scripts for each site that uses Quantcast got pretty boring pretty quickly, so I've started using a DevTools snippet to generate the script.

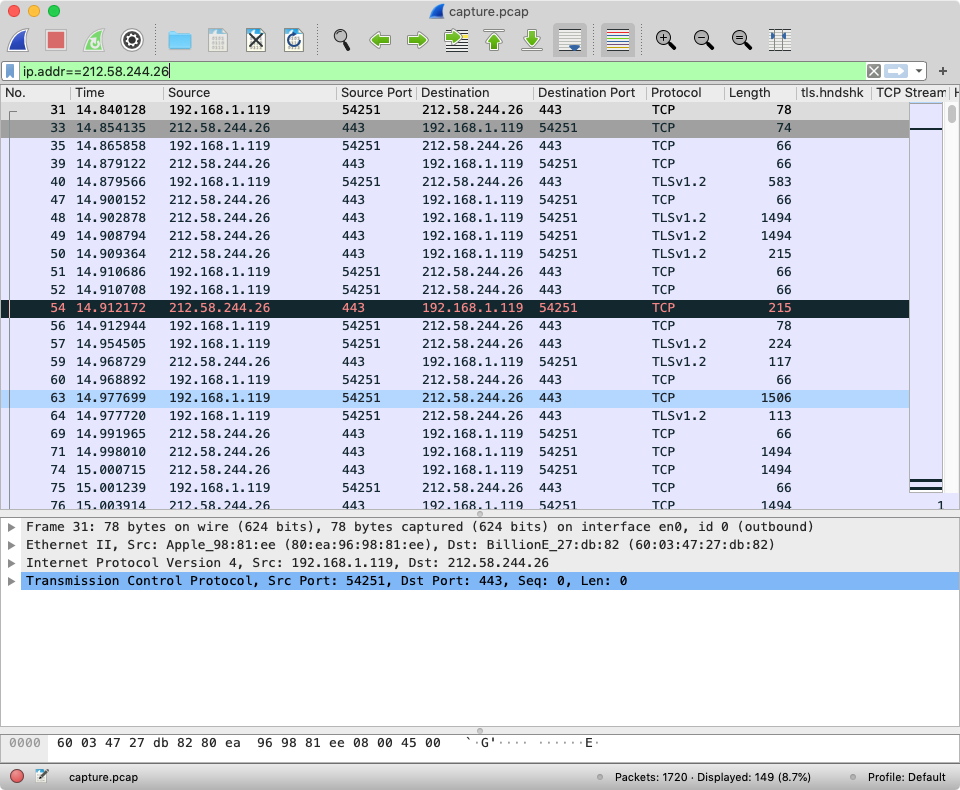

Running the snippet in DevTools produces a script ready to paste into WebPageTest's Inject Script field (which is at the bottom of the Advanced tab).

I'll probably add snippets for other common CMPs as I come across them at clients and prospects, but Pull Requests are also very welcome!

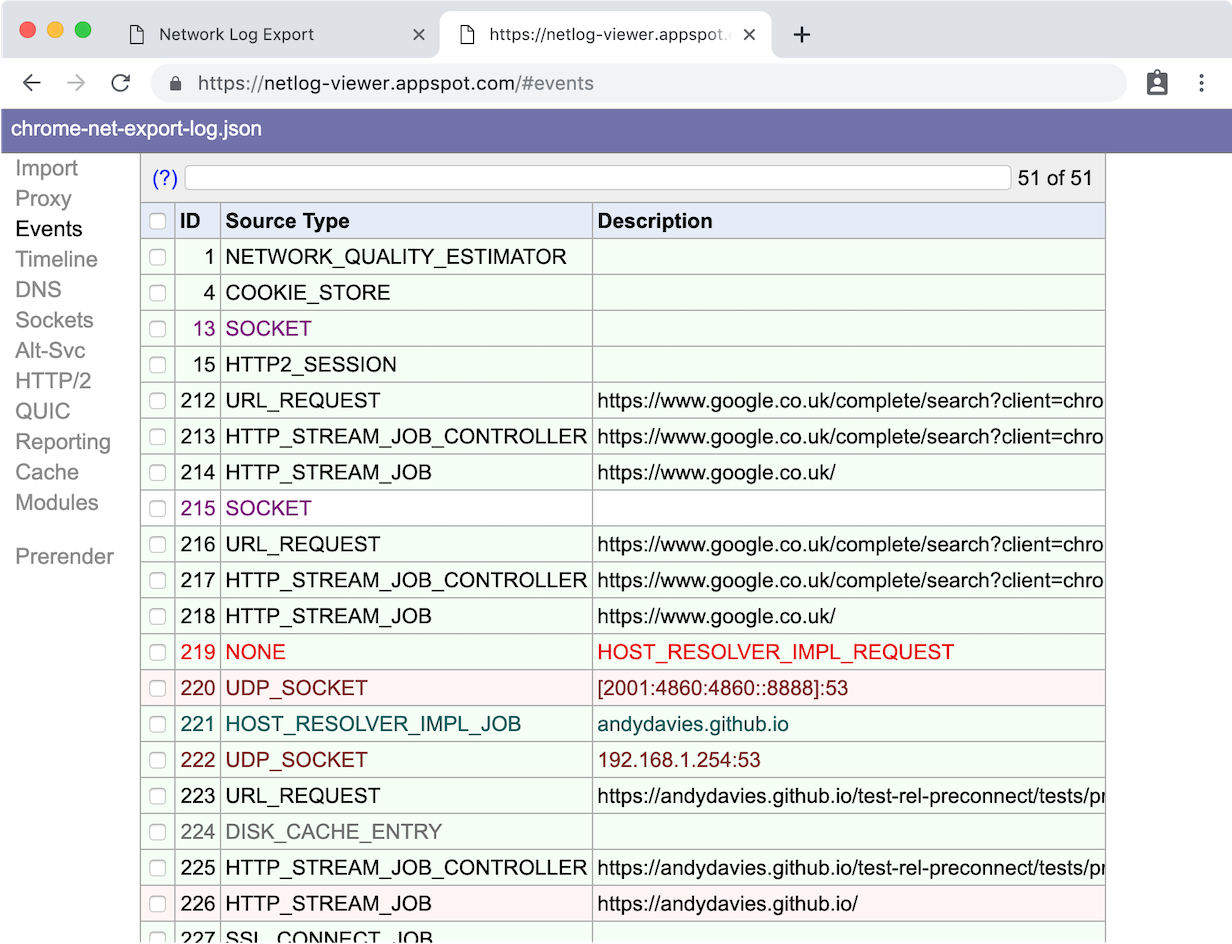

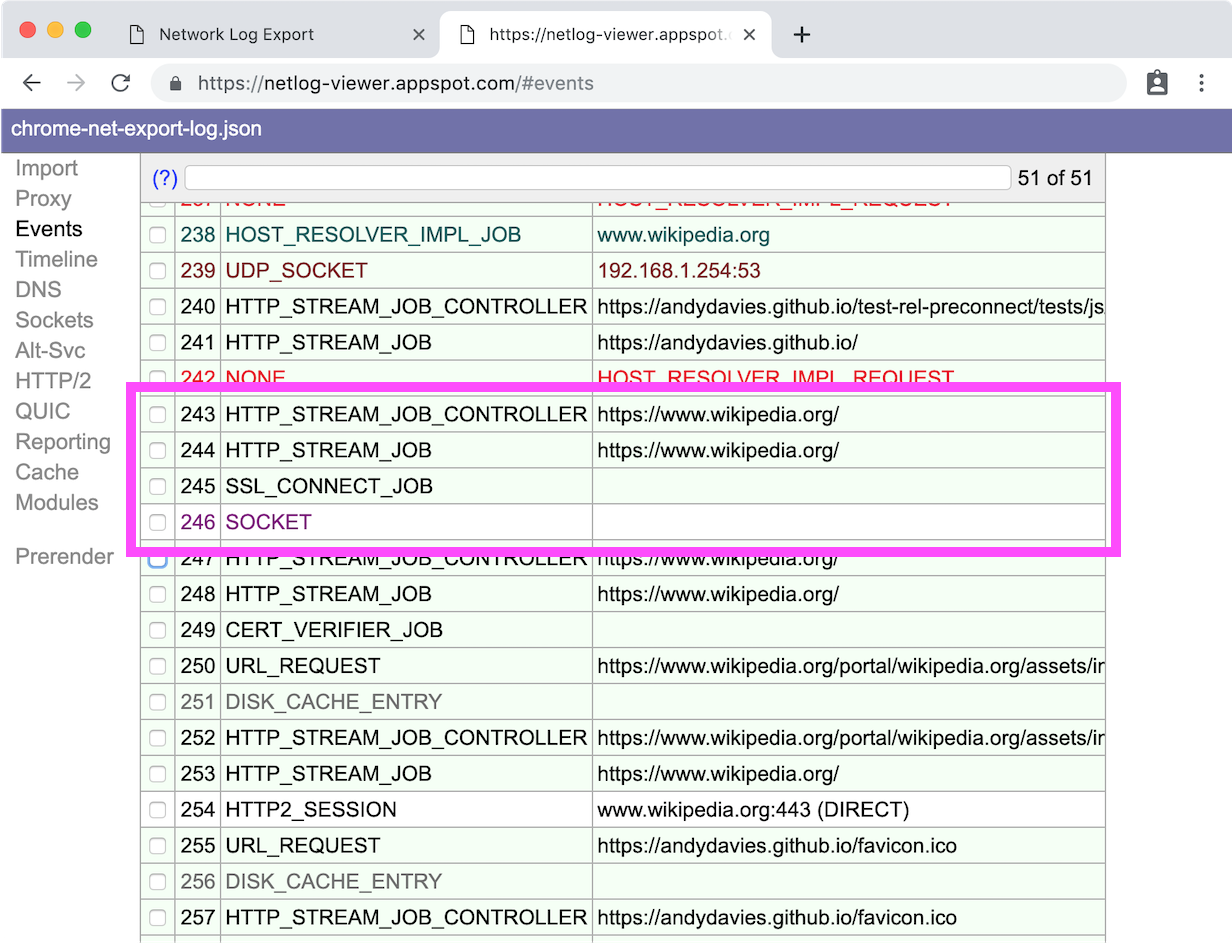

Determining the Combination of Cookies and localStorage Items

One remaining challenge is determining which cookies and localStorage items need to be set.

Originally I relied on a combination of trial and error – inspecting storage, debugging minified scripts in DevTools and then testing in DevTools and WebPageTest – to work this out.

But one day, while lying awake in the early hours, I realised there might be an easier way to determine what's needed, and so now my process starts like this:

- Open a guest mode window in Chrome

- Open DevTools

- Load the site so the cookie banner is visible

- In the DevTools Network panel, switch to Offline

- In the Application panel, click Clear site data (it's under the Storage section), and remember to check "including third-party cookies"

- Consent to the cookies via the cookie banner

- Inspect Local Storage, and Cookies to see what items have been set

- Take an informed guess at which of the cookies and localStorage entries set are for the consent banner

- Write a script and test it

It's not a foolproof method, but it's certainly helped me get a head start with many sites I analyse.

Some sites make a network request as part of the consent process, and this fails in offline mode, so not all the cookies and localStorage items get set. The Guardian is one site that throws up this issue.
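Once you've picked the candidate entries, writing the script can be partly automated. Here's a minimal sketch of a DevTools snippet for that step. It isn't the Quantcast snippet mentioned earlier: readCurrentValues and buildInjectScript are hypothetical names, and the cookie names and localStorage keys you pass in are whatever the offline process turned up. It can only read cookies visible to document.cookie (not HttpOnly ones), and it sets cookies with path=/ and no domain attribute, which may not match how the CMP originally set them.

```javascript
// Read the chosen cookies and localStorage items from the current page.
// Run in the DevTools console after consenting.
function readCurrentValues(cookieNames, storageKeys) {
  const cookies = {};
  for (const pair of document.cookie.split('; ')) {
    const idx = pair.indexOf('=');
    if (idx === -1) continue; // skip empty or malformed entries
    const name = pair.slice(0, idx);
    if (cookieNames.includes(name)) cookies[name] = pair.slice(idx + 1);
  }
  const storageItems = {};
  for (const key of storageKeys) {
    const value = localStorage.getItem(key);
    if (value !== null) storageItems[key] = value;
  }
  return { cookies, storageItems };
}

// Turn those values into a script for WebPageTest's Inject Script field.
// JSON.stringify quotes and escapes each value so it becomes a valid JS string literal.
function buildInjectScript(cookies, storageItems) {
  const lines = [];
  for (const [key, value] of Object.entries(storageItems)) {
    lines.push('localStorage.setItem(' + JSON.stringify(key) + ', ' + JSON.stringify(value) + ');');
  }
  for (const [name, value] of Object.entries(cookies)) {
    lines.push('document.cookie = ' + JSON.stringify(name + '=' + value + '; path=/') + ';');
  }
  return lines.join('\n');
}
```

In Chrome's console, something like `const v = readCurrentValues(['euconsent-v2'], ['CMPList']); copy(buildInjectScript(v.cookies, v.storageItems));` puts the generated script on the clipboard, ready to test in the console and then paste into WebPageTest.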

Wrapping Up

Although I've concentrated on bypassing consent banners, testing with them in place is still important as it helps to understand our visitors' initial experience.

After all, if our visitors have a bad initial experience they may abandon without even interacting with the consent banner.

One thing I've noticed across multiple sites is how late many consent banners load – sometimes banners are delayed because they depend on an external script loading, other times they wait for events such as DOMContentLoaded before being shown.

I'm not sure whether the aim should be to display the consent banner as soon as possible, or whether displaying content and then covering it with a banner is OK, and I couldn't find any research that helped clarify the issue.

Tests that display a consent banner only provide a partial view – particularly when testing is done from countries that require opt-in – so bypassing the banner helps us to build a more complete view of performance within testing and monitoring processes.

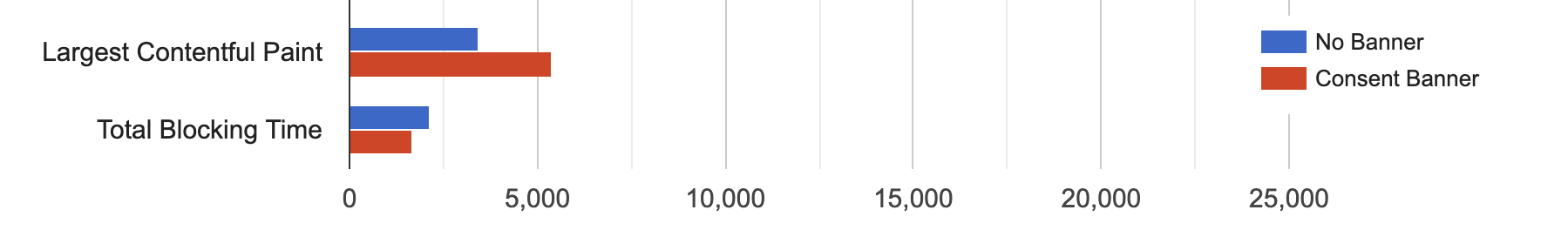

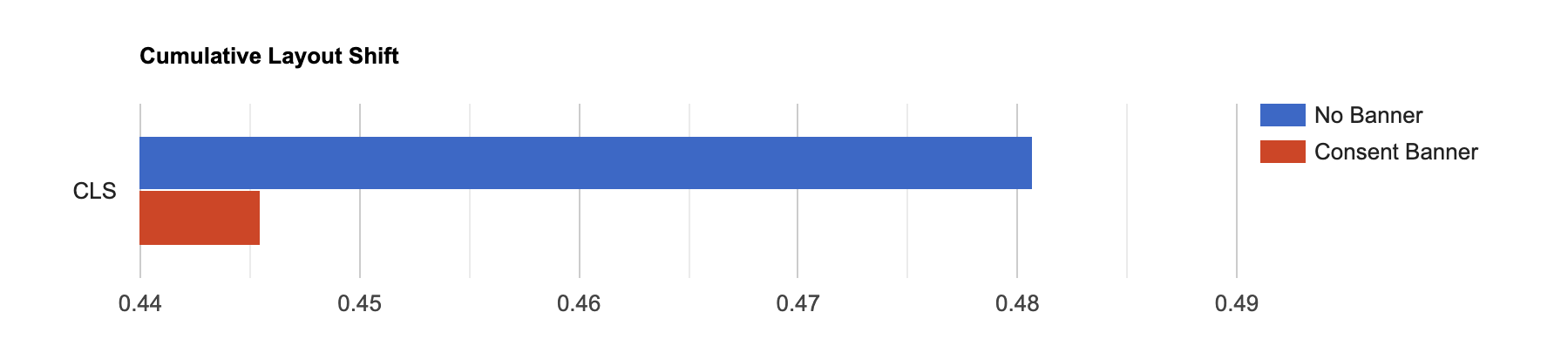

Revisiting the Currys page when consent has been given: the Largest Contentful Paint gets faster, there's not much difference in Total Blocking Time, but the tags that execute introduce several Layout Shifts.

The full comparison of the Currys page with and without the Consent Banner is available on WebPageTest.

Although I've focused on Lighthouse and WebPageTest, the techniques for determining what cookies (and localStorage items) are needed to bypass consent banners should also work with other tools – many support setting headers and some, DebugBear for example, support injecting scripts.

Further Reading

Measuring Performance behind consent popups, Simon Hearne, May 2020

Publisher Ad Audits for Lighthouse

Ruthlessly Eliminating Layout Shift on netlify.com, Zach Leatherman, Nov 2020

WebPageTest Cookie Consent Scripts

A client had asked me to look at the speed of their sites for countries in South East Asia, and South America.

The sites weren’t as fast as I thought they should be, but they weren’t horrendously slow either. What troubled me was how long they took to start displaying content.

I was puzzled…

When I examined the waterfall in WebPageTest I could see the hero image being downloaded at around 1 second, yet nothing appeared on the screen for 3.5 seconds!

I switched to DevTools and sure enough saw the same behaviour.

Profiling the page showed that even though multiple images were being downloaded quickly, the browser wasn’t even attempting to render them for several seconds.

Hunting through the source I found this snippet (I’ve unminified it to make it easier to read):

```html
<script>
// pre-hiding snippet for Adobe Target with asynchronous Launch deployment
(function(g,b,d,f) {
  (function(a,c,d) {
    if(a) {
      var e = b.createElement("style");
      e.id = c;
      e.innerHTML = d;
      a.appendChild(e)
    }
  })(b.getElementsByTagName("head")[0], "at-body-style", d);
  setTimeout(function() {
    var a=b.getElementsByTagName("head")[0];
    if(a) {
      var c = b.getElementById("at-body-style");
      c && a.removeChild(c)
    }
  },f)
})(window, document, "body {opacity: 0 !important}", 3E3);
</script>
```

The snippet does two things:

- Injects a style element that hides the body of the document by setting its opacity to 0

- Adds a function that gets called after 3 seconds to remove the style element. This is a fallback in case the Adobe Target script fails, or takes longer than 3 seconds to reach the point where it would remove the style element itself.

Google Optimize, Visual Web Optimizer (VWO) and probably others adopt a similar approach.

Urgh…

Impact of Anti-Flicker Snippets

Proponents argue that we need anti-flicker snippets because visitors seeing the page change as experiments execute is a poor experience, and because visitors knowing they are part of an experiment can influence the results, a.k.a. the Hawthorne Effect.

I’ve not found any studies that validate this argument, but it may have merit as one of the Core Web Vitals, Cumulative Layout Shift, aims to measure how much elements move around during a page’s lifetime. And the more they move the worse the visitor’s experience is presumed to be.

As a counterpoint, let’s examine the experience that anti-flicker snippets deliver.

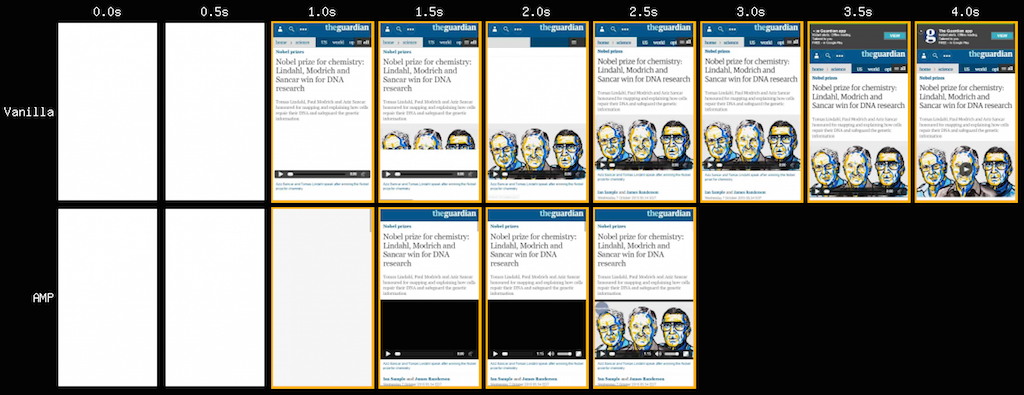

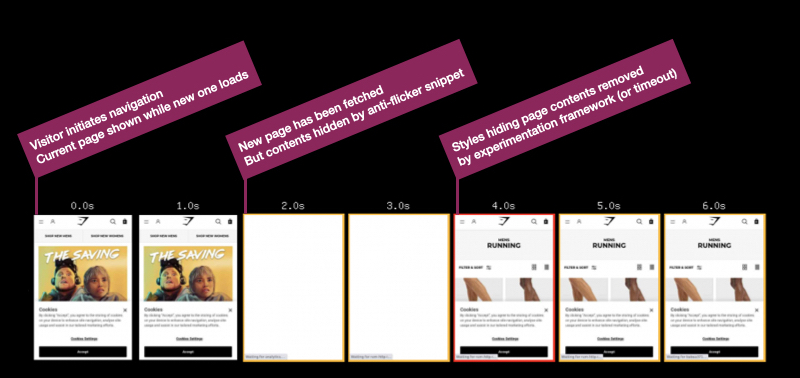

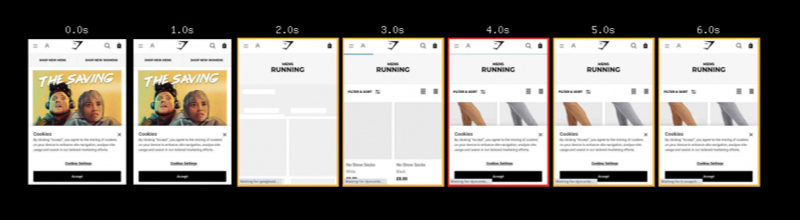

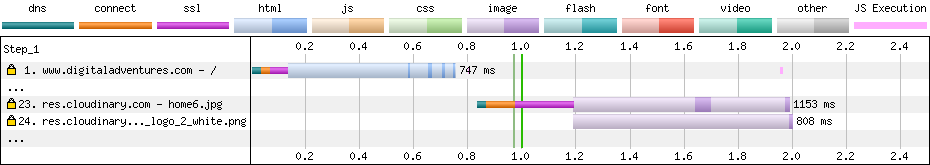

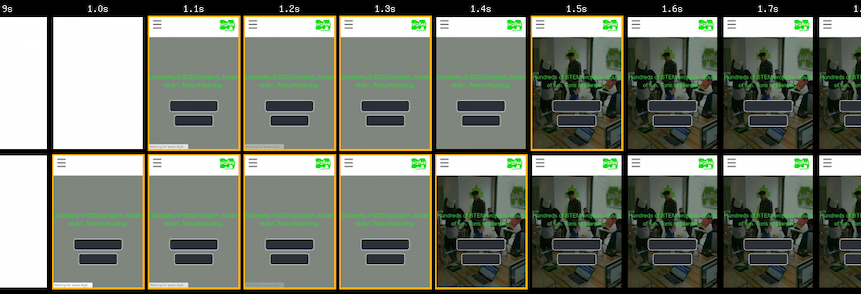

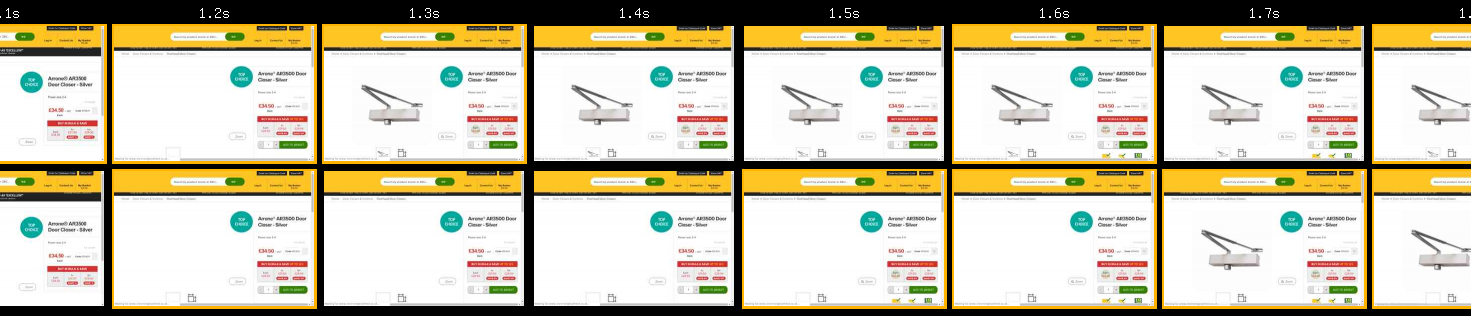

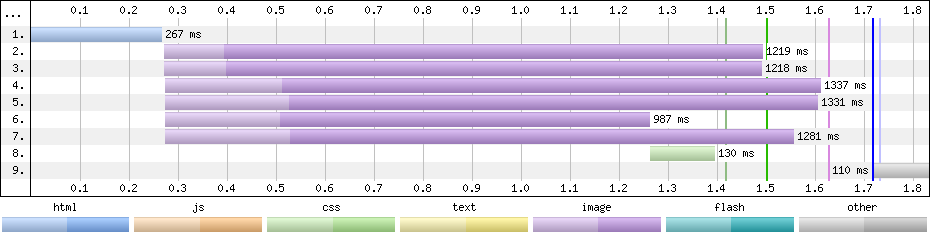

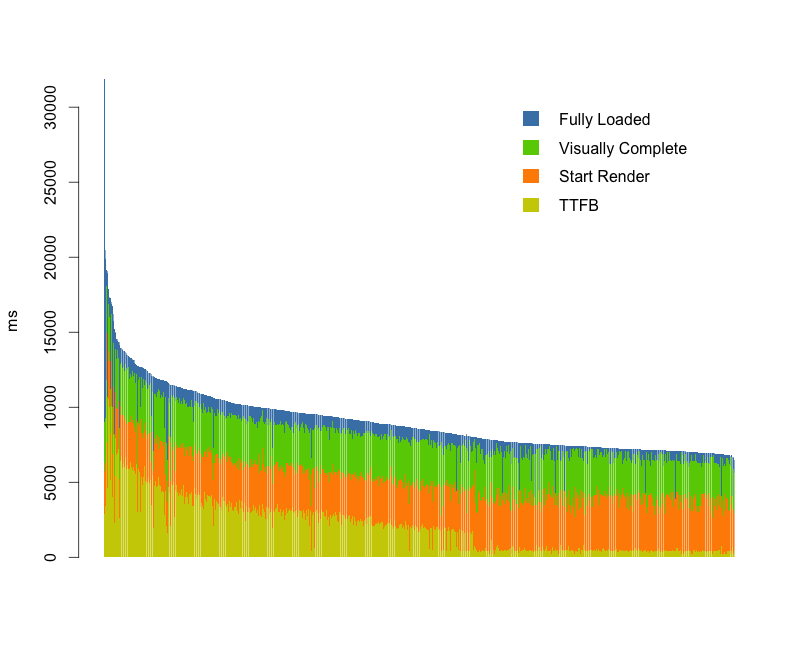

Here’s a filmstrip from WebPageTest that simulates a visitor navigating from Gymshark’s home page to a category page.

Gymshark – navigating from home to category page – London, Chrome, Cable

There are some blank frames in the middle of the filmstrip where the anti-flicker snippet hides the page for a few seconds (it’s actually 1.7s if you use the link above to view the test result).

By default, Google Optimize uses a timeout of 4 seconds, so in this case we can tell that the experimentation script completed before the timeout.

Compare this to a test where the anti-flicker snippet has been removed (using a Cloudflare Worker) and we can see the page renders progressively, so at least in this case hiding the page doesn’t improve the visitor’s experience.

The blank frames may also signal to visitors that they’re part of an experiment; whether or not they realise it, they may notice the experience is different compared to other sites.

When the experimentation script doesn’t finish execution before the timeout expires then the visitor will get the ‘worst of both worlds’ – they’ll see a blank screen for a long time and then potentially see the effect of the experiment as it executes.

This might be because a visitor has a slower device or network connection, because the experimentation script is large and so takes too long to fetch and execute, or because other scripts in the page are delaying the experimentation scripts.

What we don’t know is how long visitors are staring at a blank screen!

Measuring the Anti-Flicker Snippet

Ideally, the experimentation frameworks would communicate when key events occur, perhaps by firing events, posting messages, or creating User Timing marks and measures.

But in common with many other 3rd-party tags they seem reluctant to do this, so we have to create our own methods for measuring them.

All three examples below use the MutationObserver API to track when either the class that hides the document, or the style element containing the relevant styles, is removed (different products adopt slightly different approaches to hiding the page).

Each example sets a User Timing mark, named anti-flicker-end, when the anti-flicker styles are removed.

How long the page is hidden could be measured by adding a start mark to the snippet that hides the page and then using performance.measure to calculate the elapsed duration.
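Here's a minimal sketch of that calculation. It assumes the pre-hiding snippet has been edited to call performance.mark('anti-flicker-start') just before it hides the page; the 'anti-flicker-start' mark, the 'anti-flicker' measure and measureAntiFlicker itself are hypothetical names, while 'anti-flicker-end' is the mark the measurement scripts in this post create.

```javascript
// Returns how long the page was hidden (ms), or null if either mark is missing,
// e.g. the page was never hidden or hasn't been revealed yet.
// Assumes the (edited) pre-hiding snippet calls performance.mark('anti-flicker-start').
function measureAntiFlicker() {
  var hasMark = function(name) {
    return performance.getEntriesByName(name, 'mark').length > 0;
  };
  if (!hasMark('anti-flicker-start') || !hasMark('anti-flicker-end')) {
    return null;
  }
  var entry = performance.measure('anti-flicker', 'anti-flicker-start', 'anti-flicker-end');
  if (!entry) {
    // Older browsers return undefined from measure(), so look the entry up instead
    var measures = performance.getEntriesByName('anti-flicker', 'measure');
    entry = measures[measures.length - 1];
  }
  return entry.duration;
}
```

Calling it once the page has been revealed (in a load handler, say) and passing the result to a RUM or analytics product is one way to get the "how long were visitors staring at a blank screen" number.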

Some RUM products can be configured to collect the marks and measures created, others rely on explicitly calling their API (as do analytics products).

In my testing so far I’ve found the measurement scripts closely match the blank periods in WebPageTest filmstrips, but they often record the end a couple of hundred milliseconds before the blank period actually ends. This isn’t surprising, as once the styles have been updated the browser still has to lay out and render the page. In the future, perhaps Element Timing might provide a more accurate measurement.

Although I’m working towards deploying these with a client, we’ve not deployed them yet, so treat them as prototypes and test them in your own environment!

Also, I spend more time reading other people’s code than writing my own, so feel free to suggest ways to improve the measurement snippets; for example, checks for MutationObserver support could be added.

I’ve created a GitHub repository to track the scripts (I plan on adding more for other 3rd-parties) and pull requests are very welcome!

- Google Optimize

Google Optimize adds an async-hide class to the html element so the script detects when this class is removed.

```javascript
var callback = function(mutationsList, observer) {
  // Use traditional 'for loops' (not for...of) so this also runs in IE 11
  for (var i = 0; i < mutationsList.length; i++) {
    var mutation = mutationsList[i];
    // oldValue is null if the html element had no class attribute before the change
    if (mutation.attributeName === 'class' &&
        mutation.oldValue && mutation.oldValue.indexOf('async-hide') !== -1 &&
        !mutation.target.classList.contains('async-hide')) {
      performance.mark('anti-flicker-end');
      observer.disconnect(); // MutationObserver has no remove() method
      break;
    }
  }
};

var observer = new MutationObserver(callback);
var node = document.getElementsByTagName('html')[0];
observer.observe(node, { attributes: true, attributeOldValue: true });
```

- Adobe Target

Adobe Target adds a style element with an id of at-body-style, and the script below detects when this element is removed.

```javascript
var callback = function(mutationsList, observer) {
  // Use traditional 'for loops' (not for...of) so this also runs in IE 11
  for (var i = 0; i < mutationsList.length; i++) {
    var removedNodes = mutationsList[i].removedNodes;
    for (var j = 0; j < removedNodes.length; j++) {
      var node = removedNodes[j];
      if (node.nodeName === 'STYLE' && node.id === 'at-body-style') {
        performance.mark('anti-flicker-end');
        observer.disconnect();
        return; // stop checking the remaining mutation records
      }
    }
  }
};

var observer = new MutationObserver(callback);
var head = document.getElementsByTagName('head')[0];
observer.observe(head, { childList: true });
```

- Visual Web Optimizer

VWO adds a style element with an id of _vis_opt_path_hides and as with Adobe Target the script detects when this element is removed.

During testing I also observed VWO add other temporary styles to hide other page elements too.

```javascript
var callback = function(mutationsList, observer) {
  // Use traditional 'for loops' (not for...of) so this also runs in IE 11
  for (var i = 0; i < mutationsList.length; i++) {
    var removedNodes = mutationsList[i].removedNodes;
    for (var j = 0; j < removedNodes.length; j++) {
      var node = removedNodes[j];
      if (node.nodeName === 'STYLE' && node.id === '_vis_opt_path_hides') {
        performance.mark('anti-flicker-end');
        observer.disconnect();
        return; // stop checking the remaining mutation records
      }
    }
  }
};

var observer = new MutationObserver(callback);
var head = document.getElementsByTagName('head')[0];
observer.observe(head, { childList: true });
```
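The three measurement scripts share most of their code, and one way to add the MutationObserver support check is to pull that shared code into a helper. This is a sketch only: observeAntiFlickerEnd is a hypothetical name, not part of any vendor's API, and each product supplies its own test for "the page has been revealed".

```javascript
// Hypothetical helper: start observing only when MutationObserver and the target
// node exist, so unsupported environments skip measurement instead of throwing.
// Returns true if observation started.
function observeAntiFlickerEnd(target, options, isRevealed) {
  if (typeof MutationObserver === 'undefined' || !target) {
    return false;
  }
  var observer = new MutationObserver(function(mutationsList) {
    for (var i = 0; i < mutationsList.length; i++) {
      if (isRevealed(mutationsList[i])) {
        performance.mark('anti-flicker-end');
        observer.disconnect();
        return;
      }
    }
  });
  observer.observe(target, options);
  return true;
}

// Usage for Adobe Target: detect removal of the at-body-style element
if (typeof document !== 'undefined') {
  observeAntiFlickerEnd(document.head, { childList: true }, function(mutation) {
    for (var j = 0; j < mutation.removedNodes.length; j++) {
      var node = mutation.removedNodes[j];
      if (node.nodeName === 'STYLE' && node.id === 'at-body-style') {
        return true;
      }
    }
    return false;
  });
}
```

The Google Optimize and VWO versions would pass their own target, observer options and check in the same way.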

Anti-Flicker Snippets are a Symptom of a Larger Issue

Once we’ve started collecting data on how long the page is hidden for, we can experiment with reducing the timeout, or even removing the anti-flicker snippet completely.

After all, these are experimentation tools so we should experiment with how their implementation affects visitors’ experience and behaviour!

But, fundamentally, the anti-flicker snippet is a symptom of a larger issue, and that issue is that testing tools finish their execution too late.

Revisiting the first Gymshark test and zooming into the filmstrip at 100ms frame interval we can see the page was revealed at 3.2s.

Gymshark – navigating from home to category page – London, Chrome, Cable

Or to view it another way… Google Optimize finishes execution at around 3.2s… and as Largest Contentful Paint needs to happen within 2.5s to be considered ‘good’, this suggests the delays caused by experimentation tools may be testing our visitors’ patience.

(In Gymshark’s case there are some other factors that further contribute to the delay too)

Shrinking the size of the testing script will help to reduce how long it takes to fetch and execute, and I mentioned some factors that can help with this in Reducing the Site-Speed Impact of Third-Party Tags.

The size of tags for testing services often depends on the number of experiments included, number of visitor cohorts, page URLs, sites etc. and reducing these can reduce both the download size and the time it takes to execute the script in the browser.

Out-of-date experiments, A/A tests that are being used as workarounds for CMS issues or development backlogs, and experiments for different sites (staging and live etc.) in the same tag are some of the aspects I look for first.

Recently I came across an example where base64 encoded images were being included in the testing scripts, making them huge, so avoid that too!
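To keep an eye on how big these scripts are for real visitors, Resource Timing entries can be filtered by URL. This is a minimal sketch: summariseTagSizes is a hypothetical helper and the vendor host is a placeholder. For cross-origin scripts, transferSize and decodedBodySize report 0 unless the vendor sends a Timing-Allow-Origin header.

```javascript
// Summarise the sizes of testing-tool scripts from Resource Timing entries.
// urlFragments are hypothetical; use the hosts your vendor actually serves from.
function summariseTagSizes(entries, urlFragments) {
  return entries
    .filter((entry) => entry.initiatorType === 'script' &&
      urlFragments.some((fragment) => entry.name.includes(fragment)))
    .map((entry) => ({
      url: entry.name,
      transferKB: Math.round(entry.transferSize / 1024),   // bytes over the network
      decodedKB: Math.round(entry.decodedBodySize / 1024), // bytes after decompression
    }));
}

// In the browser:
// summariseTagSizes(performance.getEntriesByType('resource'), ['testing-vendor.example']);
```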

But there’s also another challenge…

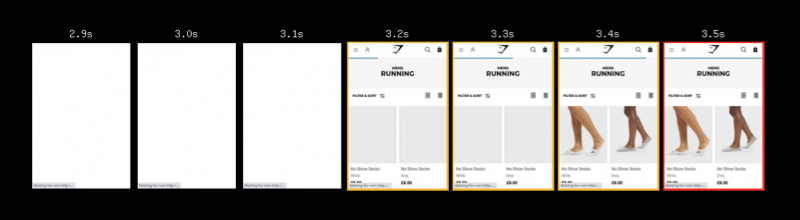

Vendors recommend anti-flicker snippets because their testing scripts are loaded asynchronously – so the browser can continue building the page while the script is being fetched – and browsers, particularly Chrome, deprioritise async scripts.

In Chrome, this deprioritisation means the fetch (even from cache) of asynchronous scripts in the head is delayed into the second-phase of the page load.

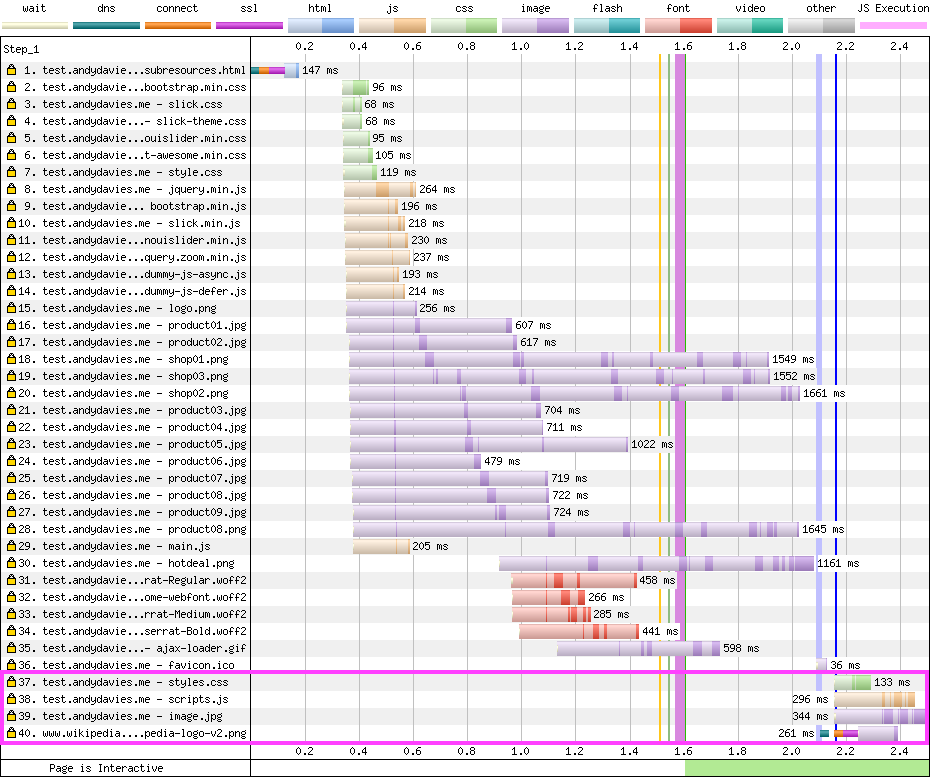

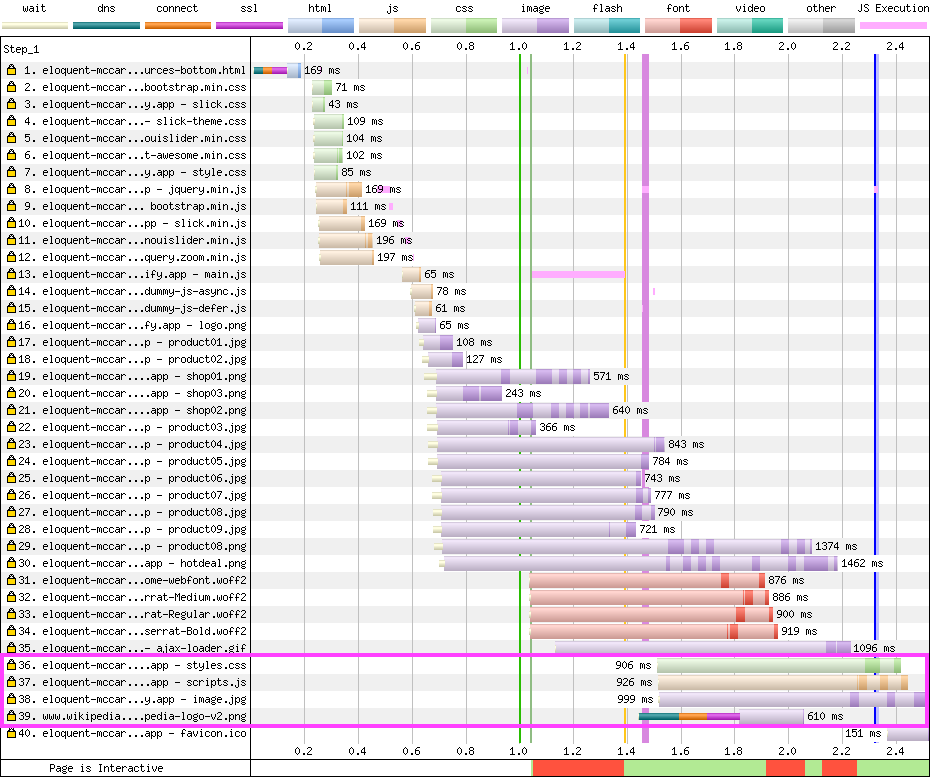

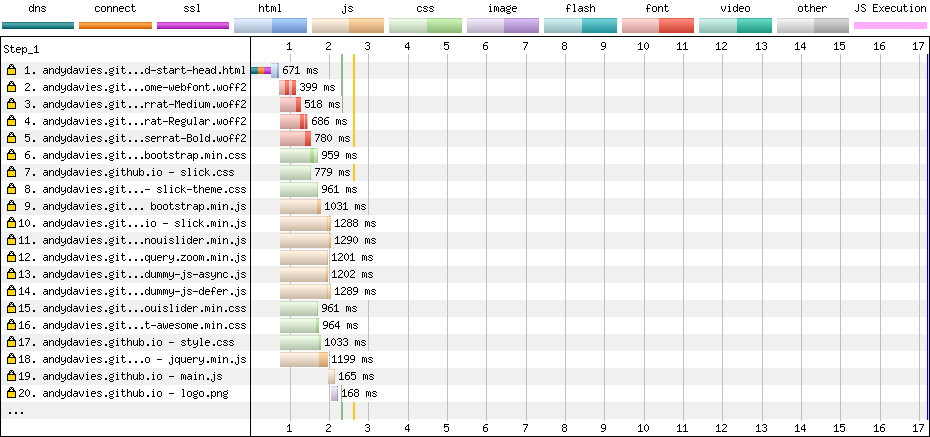

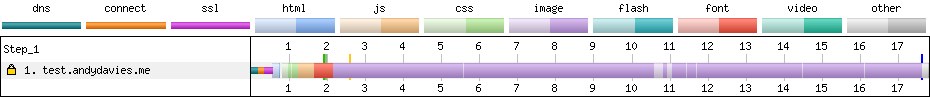

An example of this can be seen in the waterfall below, where request 14 has been deprioritised because it’s async.

Prioritisation Test Page – Dulles, Chrome, Cable

So not only are the scripts finishing too late, they’re also starting too late!

To compound the delay some testing scripts are loaded via a Tag Manager, and as Simo Ahava demonstrated with Google Optimize this increases the delay even further.

The alternative, at least for client-side testing, is to adopt a blocking script approach that products like Optimizely and Maxymiser use.

But then we face the issue that the browser must wait for the testing script to be fetched and executed before it can continue building the page, and if the script host is inaccessible that can be a long wait.

We’re stuck between ‘a rock and a hard place’!

There is a third option… and that’s to create the page variants server-side before the HTML is even received by the browser.
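The key difference is that the variant is chosen before any HTML is sent, so the browser never renders one version and then swaps it. Here's a minimal sketch of deterministic variant assignment that could run on a server or edge worker; the function names, visitor ID, experiment name and even split are all hypothetical, and real products add targeting, traffic allocation and reporting on top.

```javascript
// 32-bit FNV-1a style hash over UTF-16 code units: deterministic, so the same
// visitor always lands in the same bucket without storing any state.
function hashString(str) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < str.length; i++) {
    hash ^= str.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash;
}

// Pick a variant for this visitor and experiment (even split across variants)
function assignVariant(visitorId, experimentName, variants) {
  const bucket = hashString(experimentName + ':' + visitorId) % variants.length;
  return variants[bucket];
}

// e.g. choose which template to render before the response is sent
const variant = assignVariant('visitor-123', 'hero-test', ['control', 'new-hero']);
console.log(variant);
```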

Unfortunately too few publishing and ecommerce platforms have built-in support for experimentation so implementing this isn’t as easy as the current client-side options.

Some Content Delivery Networks (CDNs) already have the capability to provide test variants from their nodes, and as Edge Computing offerings from Akamai, Cloudflare and Fastly et al. mature I expect to see AB / MV Testing vendors offer ‘experimentation at the edge’ as a capability.

Several of the testing vendors currently support server-side testing but they still depend on sites to do the heavy lifting of implementing variants themselves.

Closing Thoughts

The default timeout values on anti-flicker snippets are set way too high (3 seconds plus) especially when we consider the limits that are placed on metrics like Largest Contentful Paint.

If you’re using anti-flicker snippets as part of your experimentation toolset, you should measure how long visitors are being shown a blank screen, with the aim of reducing the timeout values or even removing the anti-flicker snippet completely.

In his post on measuring the impact of Google Optimize’s anti-flicker snippet, Simo highlights some of the events that Google Optimize exposes during its lifecycle, and these can be used as hooks for getting a more complete picture of when tests are running and their impact on your visitors’ experience.

If your vendor doesn’t expose timings or events for key milestones, point them at Google Optimize as a competitor and ask them to. It really is unacceptable that 3rd-party tags don't already expose this data.

As ever with client-side scripts, reducing their size will reduce their impact on site speed, so monitor their size and clean them up regularly.

Fundamentally, we need to move this work out of the browser, so track what your vendors are doing to support server-side experiments, particularly when it comes to integration with CDNs’ edge compute platforms, as this is going to be the most practical way for many sites to implement it.

Further Reading

Exploring Site Speed Optimisations With WebPageTest and Cloudflare Workers, Andy Davies, Sep 2020

Google Optimize Anti-flicker Snippet Delay Test, Simo Ahava, May 2020

JavaScript Loading Priorities in Chrome, Addy Osmani, Feb 2019

Reducing the Site-Speed Impact of Third-Party Tags, Andy Davies, Oct 2020

Simple Way To Measure A/B Test Flicker Impact, Simo Ahava, May 2020

Timing Snippets for 3rd-Party Tags

As performance advocates we’d champion the idea that improving performance adds value. Sometimes the value is tangible – increased revenue for a retailer, or increased page views for a publisher – other times it may be less tangible – improvements in brand perception, or visitor satisfaction, for example.

But however important and valuable we might believe speed to be, we need to persuade other stakeholders to prioritise and invest in performance. For that we need to be able to demonstrate the benefit of speed improvements versus their cost, or at least show how slow speeds have a detrimental effect on the factors people care about – visitor behaviour, revenue etc.

“Hasn’t that case already been made?” you might ask.

What about all the case studies that Tammy Everts and Tim Kadlec curate on WPOStats?

Case studies are a great source of inspiration, but it’s not unusual to hear objections of "our proposition is different, our site is different, or our visitors are different" and often there’s truth to these objections.

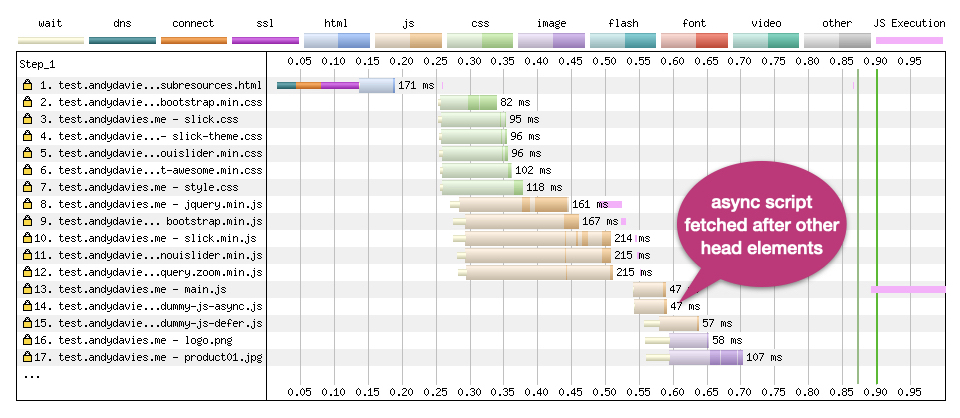

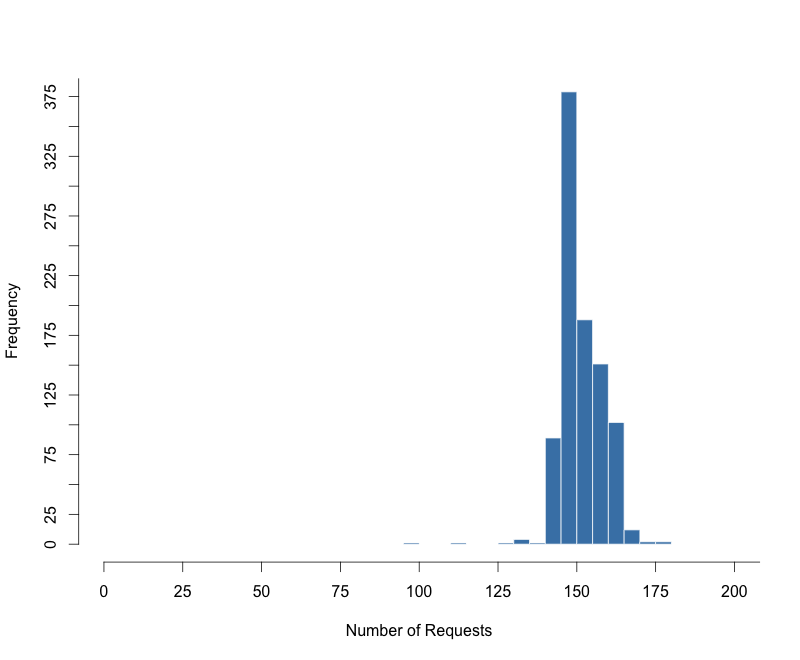

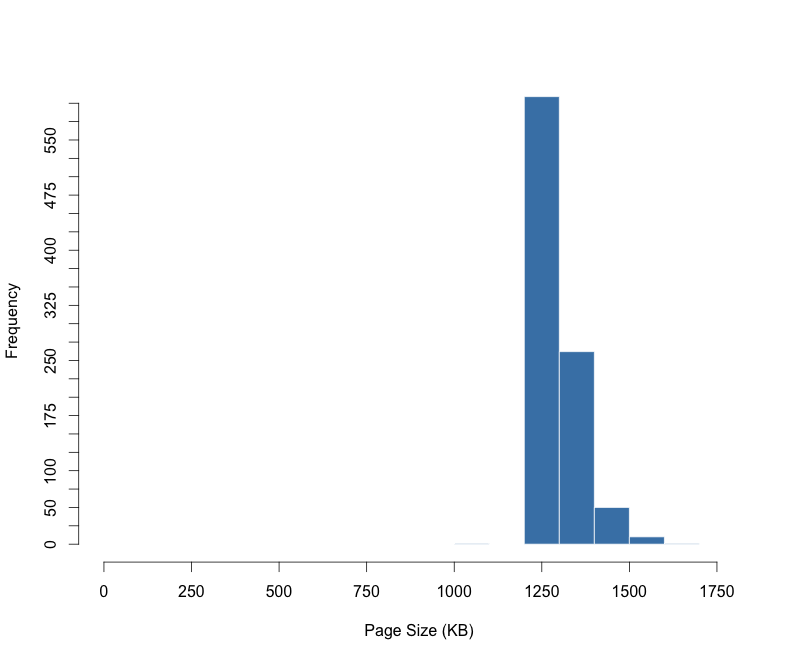

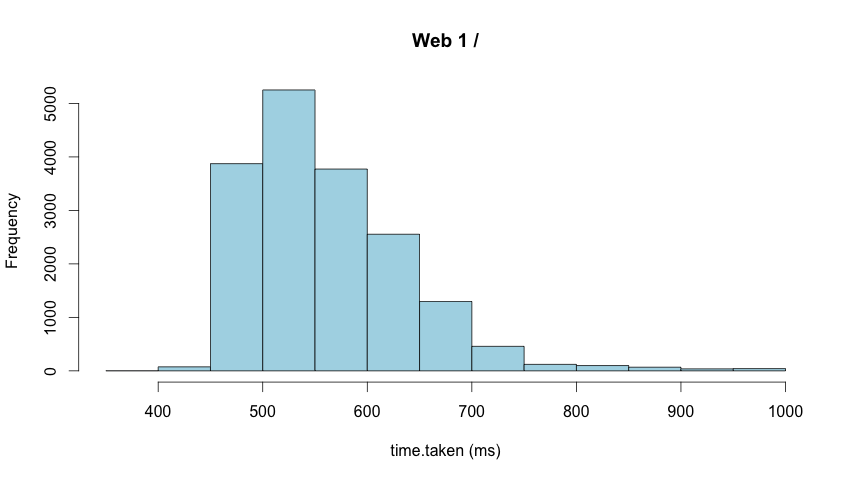

The chart below shows how conversion rates vary by average load time across a session for three UK retailers.

(Ideally I’d like a chart that doesn’t rely on averages of page load times but sometimes you’ve got to work with the data you have)

Although the trend for all three retailers is similar – visitors with faster experiences are more likely to convert – the rate of change is different for each retailer, and so the value of improved performance will also vary.

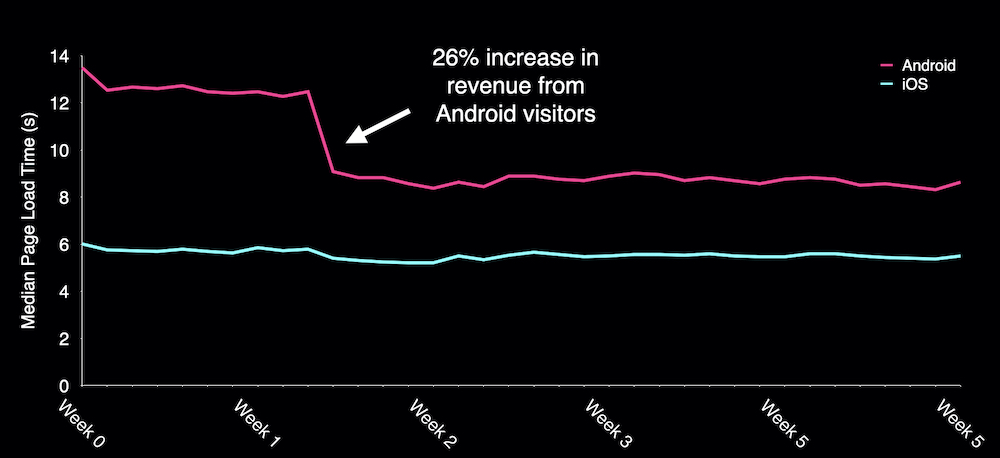
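The analysis behind a chart like the one described above can be sketched by grouping sessions into load-time buckets and computing a conversion rate for each. The field names (avgLoadTimeMs, converted) and the 1-second bucket size are hypothetical; they stand in for whatever a RUM or analytics export actually provides.

```javascript
// Group sessions by average load time and compute the conversion rate per bucket.
function conversionByLoadTime(sessions, bucketMs = 1000) {
  const buckets = new Map();
  for (const session of sessions) {
    const bucket = Math.floor(session.avgLoadTimeMs / bucketMs) * bucketMs;
    const stats = buckets.get(bucket) || { sessions: 0, conversions: 0 };
    stats.sessions += 1;
    if (session.converted) stats.conversions += 1;
    buckets.set(bucket, stats);
  }
  // Sort buckets from fastest to slowest so the result can be charted directly
  return [...buckets.entries()]
    .sort((a, b) => a[0] - b[0])
    .map(([bucketStartMs, stats]) => ({
      bucketStartMs,
      sessions: stats.sessions,
      conversionRate: stats.conversions / stats.sessions,
    }));
}
```

As noted above, averaging load times across a session hides a lot of detail, so a sketch like this is a starting point rather than a finished analysis.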

As examples of how this value varies, one retailer I worked with saw a 5% increase in revenue from a 150ms improvement in iOS start render times, another increased Android revenue by 26% when they cut 4 seconds from Android load times and a third saw conversion rates improve for visitors using slower devices when they stopped lazy-loading above the fold images.

Other clients have had visitors who seemed very tolerant of slower experiences, calling into question the principle that being faster makes a difference for all sites, and what value investing in performance would deliver.

If case studies aren’t persuasive enough, or perhaps not even applicable to some sites, how do we help to establish the value of speed?

Identifying the Impact of Performance Improvements

Determining what impact a performance improvement had on business metrics can be challenging as the data is often split across different products, and a change in behaviour may only be visible for a subset of visitors, perhaps those on slower devices, or with less reliable connections.

This difficulty can lead us to depend on what Sophie Alpert describes as ‘Proxy Metrics’ such as file size, number of requests, or scores from tools like Lighthouse – if our page size or number of requests decreases, or our Lighthouse score increases then we’ve probably made a positive difference.

Haven’t we?

Relying on proxy metrics brings a danger that we celebrate improvements without knowing whether we actually made a difference to our business outcomes, and the risk that changes in business metrics are credited to other sources, or worse still, we remove something that actually delivers more value than it costs.

In Designing for Performance, Lara Hogan advocates the need for organisational cultures that value site speed at the highest levels rather than relying on Performance Cops and Janitors.

Linking site speed to the metrics that matter to senior stakeholders is a key part of that but as a performance industry / community I think we probably rely on an ad-hoc approach to making that link.

Relationship Between Site Speed and Business Outcomes

In June 2017, I had a bit of a realisation…

At the time I was employed by a web performance monitoring company, and one of our ongoing debates was about what data our Real User Monitoring (RUM) product should collect.

Simon Hearne and I worked with clients to identify and implement performance improvements, and then post implementation we were trying to quantify the value of those improvements.

As we identified gaps between the data our RUM product collected and the data we wanted, we would ask for new data points to be added but kept running into resistance from our engineering team who were often skeptical about the value of the new data.

We were in a ‘chicken and egg’ situation – our engineering team didn’t want to collect the data unless we could prove it had value, but Simon and I couldn’t establish whether it had value until we started collecting it.

We were missing a framework that might help us make these decisions, one that could help everyone understand what data we could collect, and what questions that data might help answer.

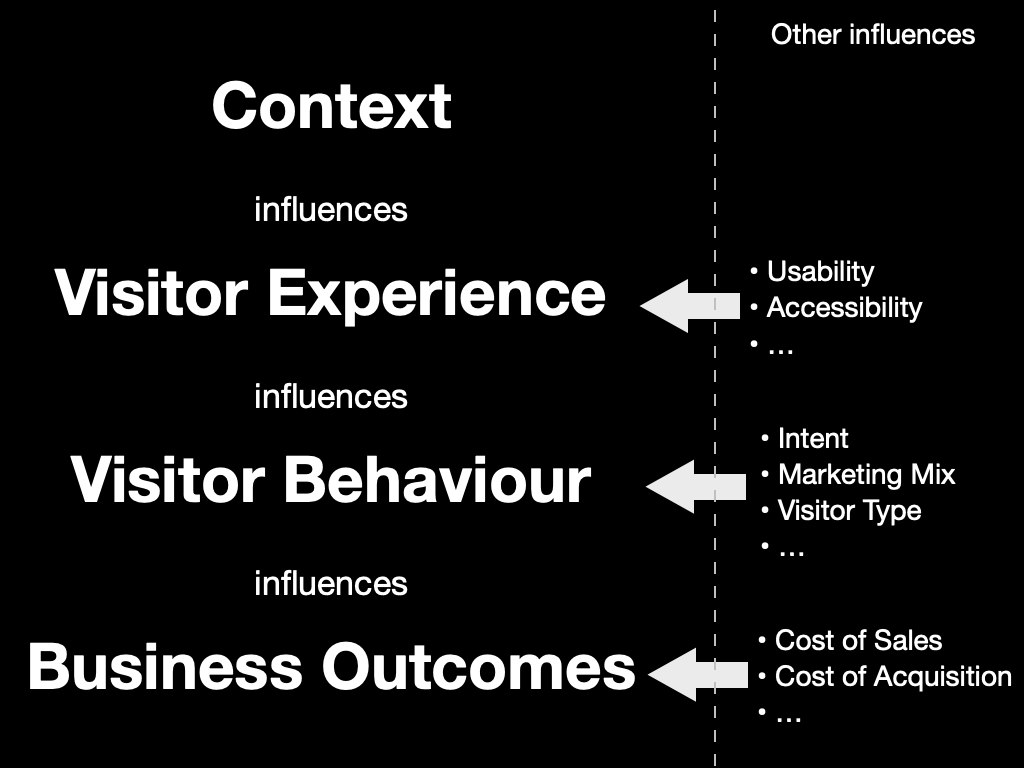

At some point while I was thinking about our challenge, I created a deck with a slide similar to this one:

Concepts such as “how we build pages influence our visitors’ experience”, “the experience visitors get influences how they behave”, and “how visitors behave influences our sites’ success” are commonplace in web performance.

After all, these are concepts that underpin many of the case studies on WPOStats.

But… I’m not sure I’d ever seen them written down as an end-to-end model before.

And writing them down on one slide helped me realise what my mental model of web performance actually looked like.

It’s also become a lens through which I view one of Tammy’s questions in Time is Money – “How Can We Better Understand the Intersection Between Performance, User Experience, and Business Metrics?”

Other slides in the deck explained those categories, and what metrics might be included within them:

- Context

What a page is made from, how those resources are delivered, the device it’s viewed on and the networks it was transmitted over are all fundamental to how fast and smooth a visitor’s experience is.

Some of those factors – browser, device, and network etc. – have a crucial impact on how long our scripts take to execute, how soon our resources load etc., but as they are all outside our control, they’re really constraints we need to design for.

Other factors such as the resources we use, whether they’re optimised, how they’re delivered and composed into a page also have a huge effect on a visitor’s experience and this second set of factors is largely within our control, after all these are the things we change when we’re improving site speed.

- Visitor Experience

From a performance perspective visitor experience is synonymous with speed – when did a page start to load, when did content start to become visible, how long did key images take to appear, when could someone interact with the page, were their interactions responsive and smooth etc.

We’ve plenty of metrics to choose from, some frustrating gaps, and the ability to synthesise our own via APIs such as User Timing too.

There are other factors we might want to consider under the experience banner too – are images appropriately sized, does the product image fit within the viewport or does the visitor need to scroll to see it, what script errors occur etc.

- Visitor Behaviour

How visitors behave provides signals as to whether a site is delivering a good or bad experience.

At a macro level, a visitor buying the contents of their shopping basket is seen as a positive signal, whereas someone navigating away, or closing the tab before the page has even loaded would be a negative one.

Then at the micro level there are behaviours such as whether a visitor reloads the page, rotates their device, or perhaps zooms in, how long they wait before interacting, how much they interact etc.

There are also other, non-performance factors that influence visitors’ behaviour – their intent, the marketing mix, socio-demographic factors – that we may or may not want to include when we’re considering behaviour.

- Business Outcomes

Individual user behaviour can be aggregated into metrics we use to run our businesses – conversion rates, bounce rates, average order values, customer lifetime value, cost of acquisition etc. – and ultimately revenue, costs and profit.

Limitations of the Model

“All models are wrong; some models are useful”, George E. P. Box

Every model has limitations, and while site speed might be our focus, it isn’t the only driver of a site's success.

- A visitor’s experience is much more than just how fast it is – content, visual design, usability, accessibility, privacy and more all contribute to the experience.

- The type of visitor, their intent, the marketing mix (product, price, promotion etc.) and more influence how people behave.

- Factors such as cost of acquisition, product margin, returns etc. affect the success of our business.

Our challenge is identifying what role site speed played in influencing the outcomes.

I’ve also got outstanding questions about how design techniques that improve the perception of performance, such as those Stephanie Walter covers in Mind over Matter: Optimize Performance Without Code, fit into the model too.

There are also questions about whether it’s possible, desirable, and even acceptable to gather some of the data points we might want.

But…

Even with its limitations, I still find the model very useful as a tool to help communication and build understanding.

It helps facilitate discussions around the metrics we’re capturing (or could start capturing) and what those metrics actually represent.

It’s handy when making changes as we can discuss what effect we expect to see from a change – which metrics should move, in what direction, and how that might affect visitor behaviour.

I’ve also had some success tracking changes in how pages were constructed all the way through to changes in business outcomes, particularly revenue. However, the data on speed, visitor behaviour and business performance is often stored in separate products, and the gap between them can make analysis hard.

Bridging the Gap

If our thesis is that the speed of a visitor’s experience influences their behaviour, then we need tools that allow us to capture and analyse data on both visitors’ experience and their behaviour in the same place.

This is where the gaps in analytics and performance tools start to show:

- Analytics products tend to focus on visitor acquisition and behaviour but generally don’t capture speed data. Those that do collect speed data only support limited metrics, have low sample rates and expose the data as averages.

- Real-User Monitoring (RUM) products tend to focus on speed, some capture a wider range of metrics and a few capture some data on visitor behaviour such as conversion, bounce and session length.

- Some Digital Experience Analytics products (Session Replay, Form Analytics etc.) collect speed data alongside visitor behaviour, but only one of these products exposes the cost of speed.

- Performance Analysis tools such as WebPageTest and Lighthouse give us a deeper view into page performance, including construction and delivery, but can’t capture data on either real visitors’ experience or their behaviour.

There are limits to what data it’s possible, practical or acceptable to collect and store, but ideally I’d also like data on how a page is constructed and delivered, along with some business data, stored in the same place.

Although it’s easy to think of all RUM products as comparable, some are more capable than others. A few have tried to close some of the gaps, but there is still much to be done.

I track the features and capabilities of over thirty RUM products, and too many of them focus on just monitoring how fast a site is, often using only a few aggregated metrics (DOMContentLoaded / Load).

Other products support perceived performance metrics such as paint and custom timings, and some include data on page composition too – number of DOM nodes, scripts etc.
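To make the difference concrete, here’s a sketch of a beacon payload that records the two aggregate timings mentioned above alongside a few page-composition counts. It reads the standard Navigation Timing entry and plain DOM collections. The `buildBeacon` name, the payload field names, the `/rum` endpoint and the choice of what to count are my own assumptions, not any particular RUM product’s format.

```javascript
// Sketch: combine basic timings with page-composition data in one payload.
// Field names are hypothetical; timings are ms relative to the start of navigation.
function buildBeacon(navEntry, doc) {
  return {
    domContentLoaded: Math.round(navEntry.domContentLoadedEventStart),
    load: Math.round(navEntry.loadEventStart),
    domNodes: doc.getElementsByTagName('*').length,
    scripts: doc.scripts.length,
    images: doc.images.length,
  };
}

// In a browser, from a load event handler (loadEventStart is 0 before load fires):
// const [nav] = performance.getEntriesByType('navigation');
// navigator.sendBeacon('/rum', JSON.stringify(buildBeacon(nav, document)));
```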

Very few products capture data on visitor behaviour. Some plot conversion or bounce rates alongside speed metrics, while others build predictive models showing the value speed improvements could bring – reduced bounce rates, higher conversion rates, increased session lengths etc.

For filtering and segmentation, products tend to focus on contextual dimensions such as browser make and version, operating system, ISP, device type, country etc., rather than behavioural dimensions such as whether a visitor converts or bounces.

This focus on technical dimensions and metrics rather than visitor behaviour means it can be hard to answer the questions I often want to ask.

Questions such as, how does performance differ between visitors who convert, and those that don’t?

Or which visitors have slow experiences and why?

Or which areas of a site should we focus on first when improving performance?

And much more…

Ultimately I want to be able to segment based on a variety of factors including how visitors behave, the experience they have and how the pages they view are constructed.

I want to be able to highlight not just how speed impacts visitors’ behaviour and what it costs, but why some visitors have slower experiences and perhaps even what changes can be made to improve them.

And once we’ve made improvements I want to be able to link changes in behaviour, and gains in business metrics back to those performance improvements.
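As a sketch of the kind of question I’d like to answer, the function below groups RUM beacons by a behavioural dimension (here, whether the visitor converted) and compares the median of a speed metric in each group. The beacon shape (`lcp`, `converted`) and the sample values are hypothetical. In practice you’d want far more data, and percentiles beyond the median.

```javascript
// Median of a list of numbers (assumes at least one value).
function median(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Group beacons by segmentOf(beacon) and return the median of metricOf(beacon) per segment.
function medianBySegment(beacons, segmentOf, metricOf) {
  const groups = new Map();
  for (const beacon of beacons) {
    const key = segmentOf(beacon);
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(metricOf(beacon));
  }
  return Object.fromEntries([...groups].map(([key, values]) => [key, median(values)]));
}

// Hypothetical beacons: LCP in milliseconds plus whether the session converted.
const beacons = [
  { lcp: 1800, converted: true },
  { lcp: 2200, converted: true },
  { lcp: 3900, converted: false },
  { lcp: 2600, converted: false },
  { lcp: 4700, converted: false },
];

console.log(medianBySegment(beacons, (b) => (b.converted ? 'converted' : 'not converted'), (b) => b.lcp));
// → { converted: 2000, 'not converted': 3900 }
```

Swapping `segmentOf` for device type, page type or whether the visitor bounced lets the same function answer the other questions above.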

Closing Thoughts

It’s easy to get excited about new techniques for measuring and improving site speed but this focus on the technical side of performance can lead us to think of speed as a technical issue, rather than a business issue with technical roots.

As Harry Roberts said in his 2019 Performance.Now talk – “Our job isn’t to make the fastest site possible, it’s to help make the most effective site possible”

But to help make more effective sites we need tools that make it easier to understand how speed is influencing visitors' behaviour, easier to identify key areas where performance needs to improve, and perhaps even recommend actions we can take to improve it.

We also need models that help link the way sites are constructed and delivered to business outcomes, so that we understand how changes affect visitors and can make room for features that might make pages a bit larger or a little slower but improve engagement and deliver higher revenues.

My mental model is still a work in progress and I’m not wedded to it, so feel free to suggest alternatives, poke at its gaps or better still, suggest ways we can fill the gaps.

Ultimately, there are too many RUM products that just measure how fast or slow a visitor's experience is, and are unable to link that experience to a site's success.

If we want to make the web faster we've got to close that gap.

Thanks

I started this post a couple of years ago while I was taking some time off after helping sell NCC Group's web performance business.

Since then I've talked to quite a few people about the ideas in it and I'm grateful to them for sharing their challenges and experience or giving me feedback.

Finally, Colin, Dave, Jeremy, Simon and Tim were kind enough to read my draft post, spot my typos and poke at its weak points.

Further Reading

Designing for Performance, Lara Hogan

From Milliseconds to Millions: A Look at the Numbers Powering Web Performance, Harry Roberts

Metrics by Proxy, Sophie Alpert

Mind over Matter: Optimize Performance Without Code, Stephanie Walter

As it’s hard to fit everything into a twenty-minute talk, this post expands on the talk and includes some of the points I didn’t have time to cover.

From Analytics to Advertising, Reviews to Recommendations, and more, it’s common for sites to rely on Third-Party tags to provide some of their key features.

But there’s also a tension between the value tags bring and the privacy, security and speed costs they impose.

I’m focusing on speed but if you want to learn more about the other aspects, Laura Kalbag and Wolfe Christl often cover the privacy concerns, and Scott Helme sometimes covers the security issues.

What does a Tag Cost?

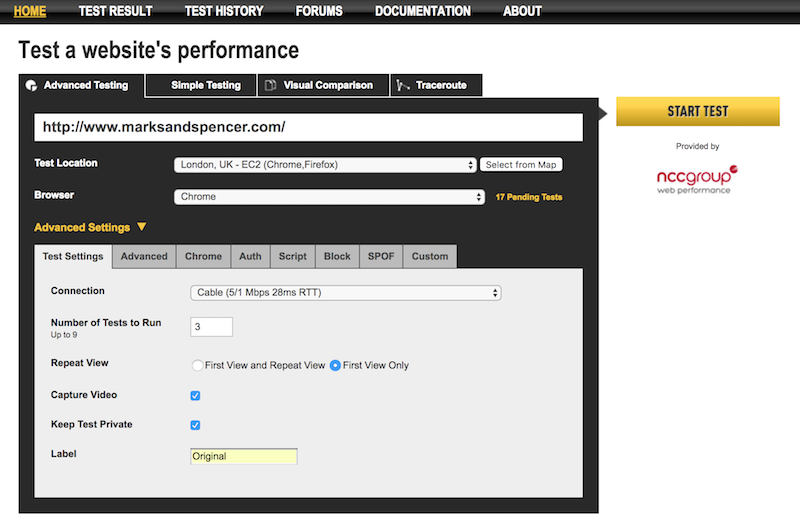

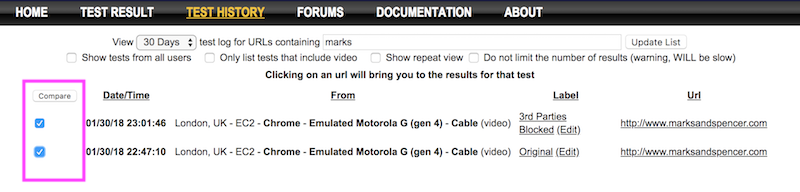

When I’m helping clients to improve the speed of their sites, one of my first steps is to test the site with and without tags using WebPageTest (you can also use this approach to test the impact of individual tags).

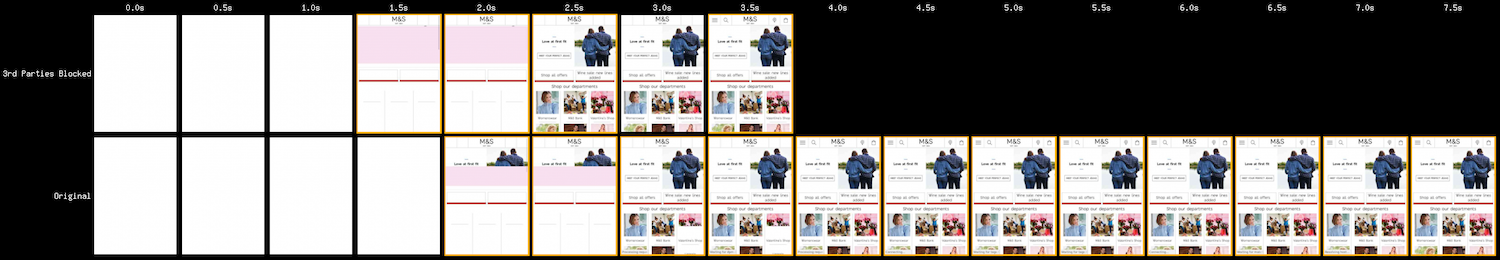

This gives me an indication of what gains might be made by optimising the implementation of tags.

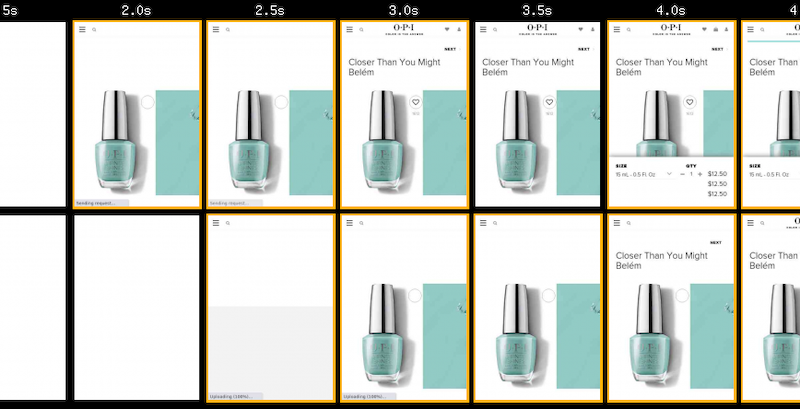

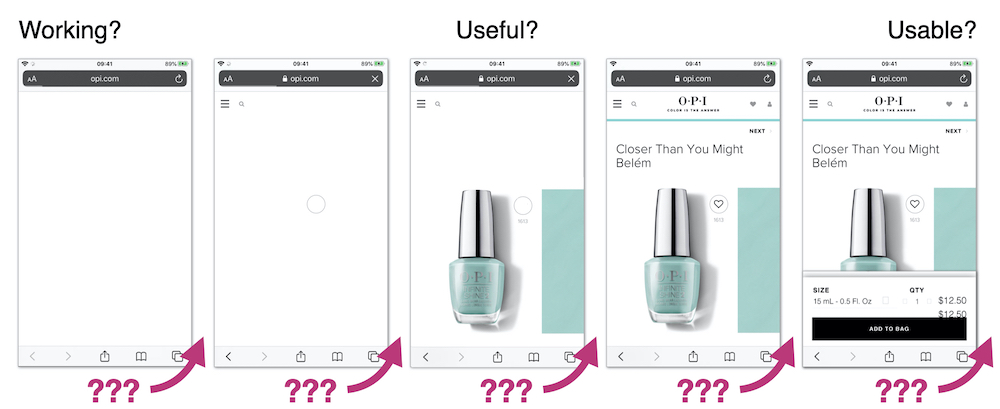

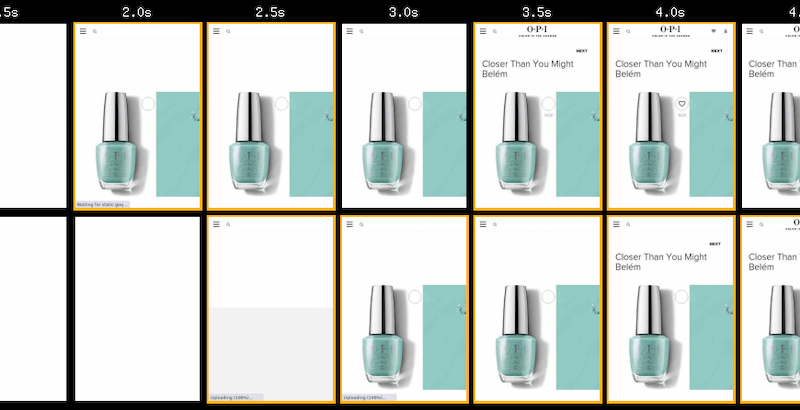

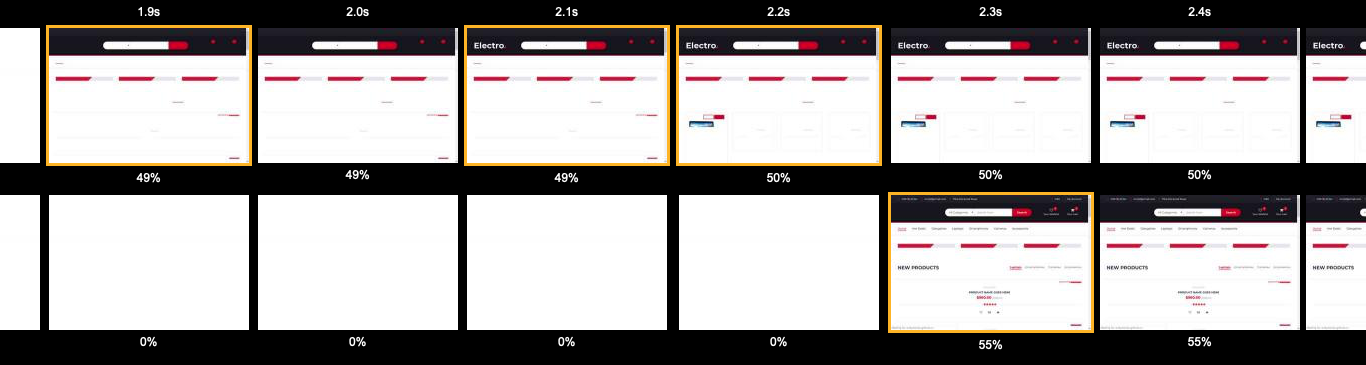

Using OPI, the nail varnish company, as an example: when third-party tags are removed their pages get faster. On product pages the key image appears about a second sooner, and other content, such as the heading text and brand logo, also appears sooner.

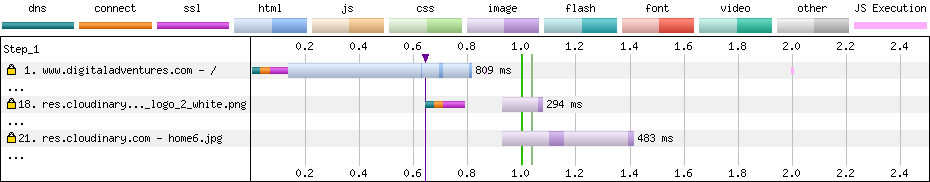

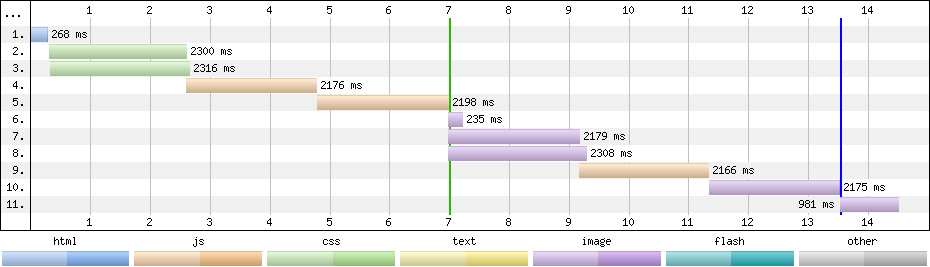

OPI with 3rd-Party Tags blocked (top), and as loaded normally (bottom)


How Tags Impact Site-Speed

There are two ways tags impact site-speed: they compete for network bandwidth and processing time on visitors’ devices, and, depending on how they’re implemented, they can delay HTML parsing.

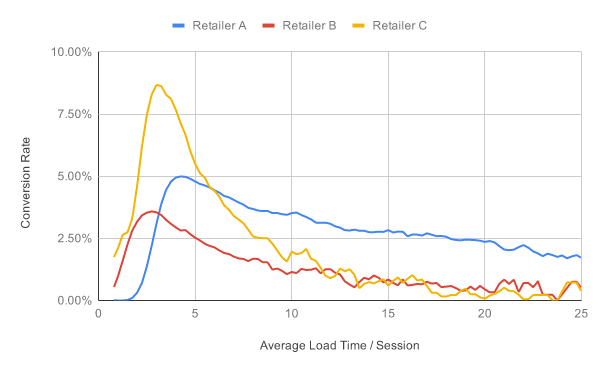

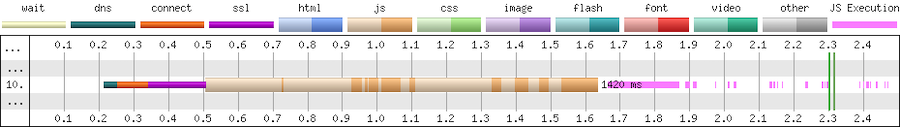

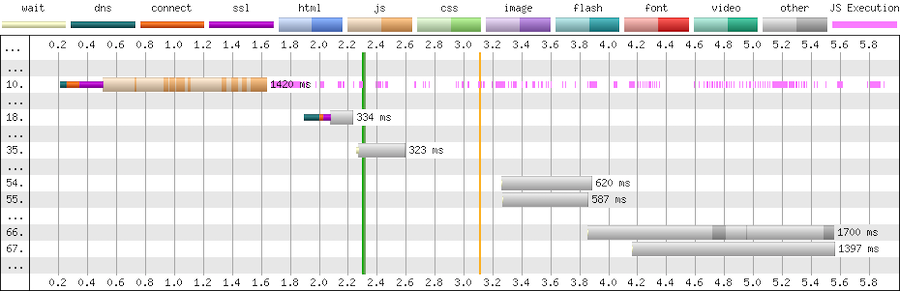

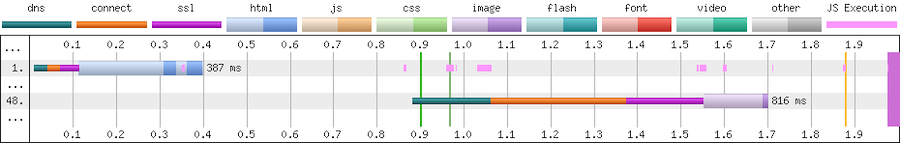

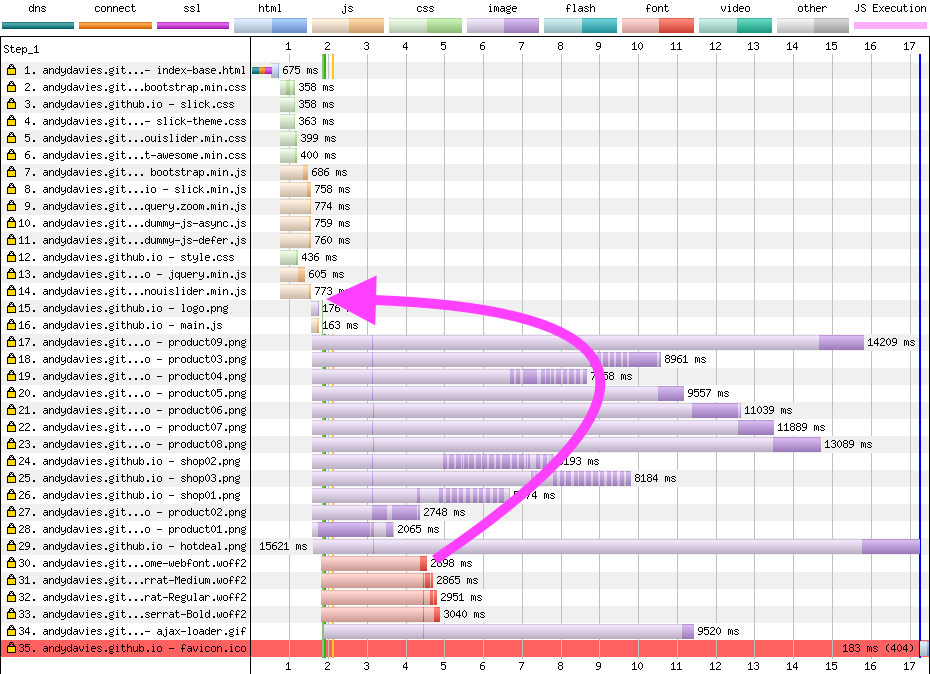

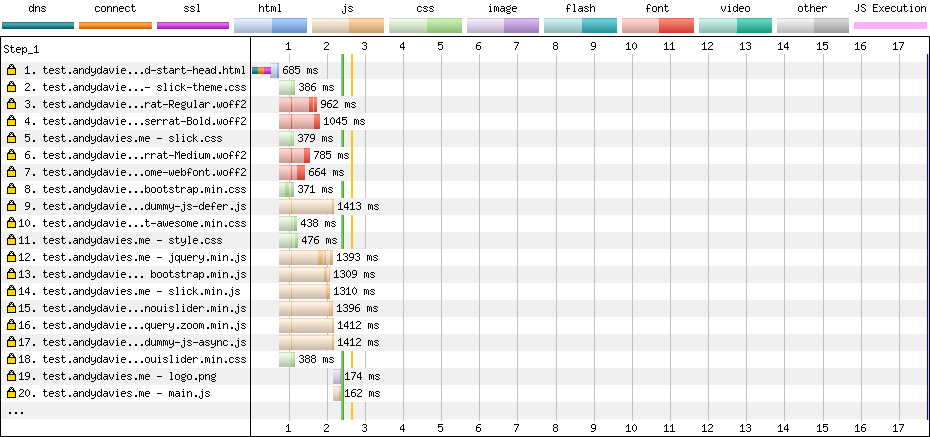

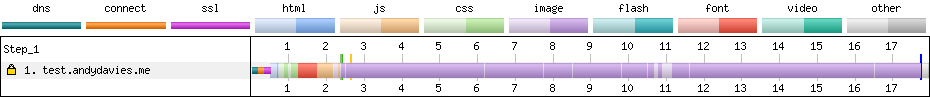

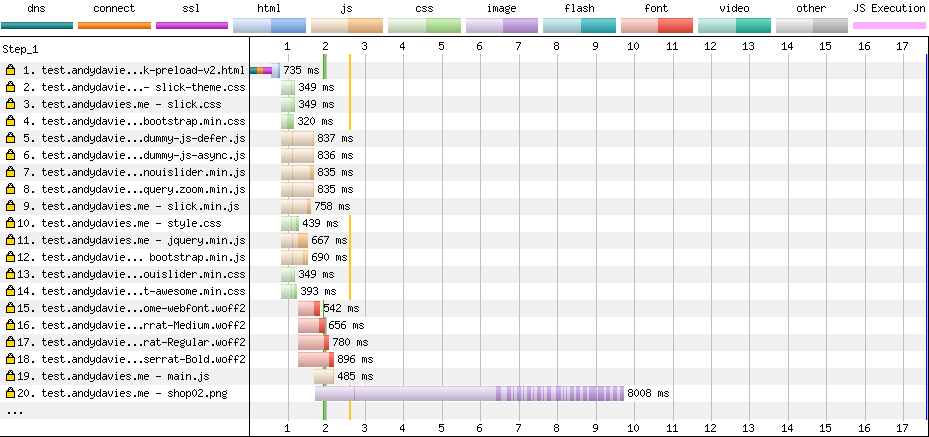

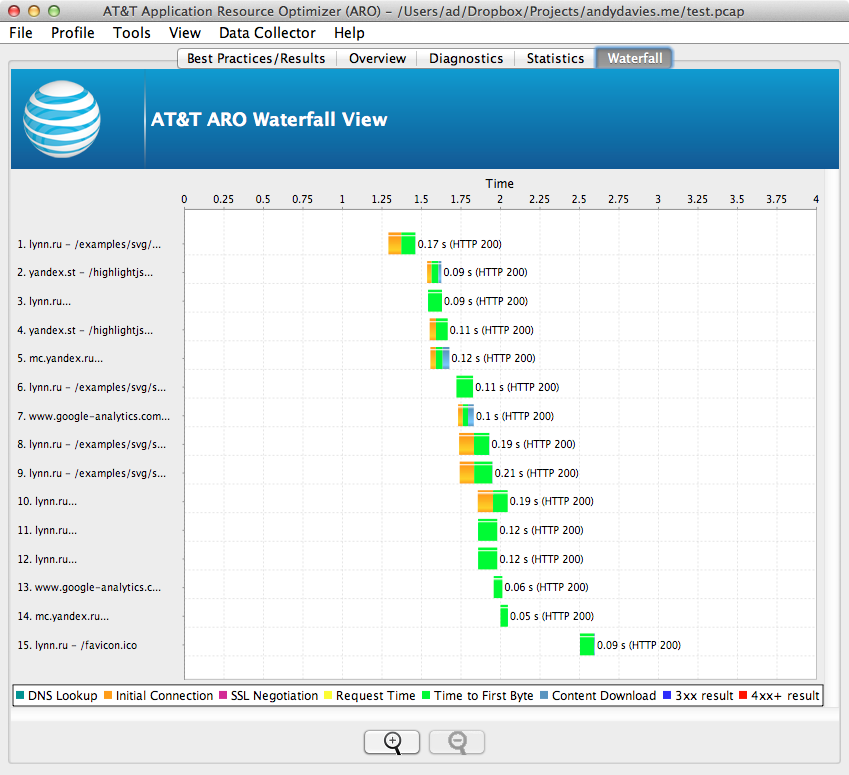

This partial waterfall from WebPageTest illustrates the costs for fetching and executing the script.

First there’s a 300ms delay while the browser connects to the third-party (cyan, orange and magenta segments), then the download of the script takes a further 1,100ms (beige segment), and then the script execution takes a further ~200ms (pink segments on the right).

The dark sections of the beige line are where data is being received, and the light sections are where there’s no data. These extended light sections indicate that this tag is competing for the network, or that the server it’s hosted on is slow.

If the script is cacheable then the cost of the network connection and download should only affect the first time it’s loaded in a session but the cost of the execution time will apply to all pages that include it.
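Using the figures from the waterfall above, a back-of-the-envelope model shows why execution time matters over a session. If the script is cached, the connection and download costs are paid once, but execution is paid on every page. The function and its parameters are hypothetical. It ignores real-world effects such as cache revalidation, connections being re-established, or contention with other resources.

```javascript
// Rough model of a tag's cost over a session, assuming the script is cached after
// the first page view so only its execution time recurs on later pages.
function tagCostOverSession({ connectMs, downloadMs, executeMs }, pageViews) {
  if (pageViews < 1) return 0;
  const firstPage = connectMs + downloadMs + executeMs;
  const laterPages = (pageViews - 1) * executeMs;
  return firstPage + laterPages;
}

// Figures from the waterfall: ~300ms connect, ~1,100ms download, ~200ms execution.
const tag = { connectMs: 300, downloadMs: 1100, executeMs: 200 };
console.log(tagCostOverSession(tag, 1)); // 1600
console.log(tagCostOverSession(tag, 5)); // 2400
```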

Tags can also trigger further downloads. Sometimes these are calls to an API; at other times they add extra scripts, stylesheets etc. to the page.

Expanding the above example, we can see it makes many further calls (the chart only shows a few) to an API (grey bars). These API calls are likely to be made on every page, and again the light areas in the grey bars indicate either network contention or a slow server.

Tags are generally implemented as scripts, and a second aspect to consider is what effect they have on blocking HTML parsing.

By default script elements (such as the one below) stop the browser from parsing HTML until the script has been fetched and has finished running.

<script src="https://cdn.example.com/third-party-tag.js"></script>

We want to avoid blocking tags as much as possible because of the delay they cause. If the third-party isn’t reachable for some reason, that delay can be over 30 seconds.

There are a few ways to make scripts non-blocking.

Adding the async attribute tells the browser not to wait while the script is fetched, but the script will still block the browser while it executes.

<script src="https://cdn.example.com/third-party-tag.js" async></script>

Non-blocking scripts can also be added via a small inline script snippet that inserts another script into the page. This example is for Google Tag Manager, but it’s a very common pattern.

<script>

(function(w, d, s, l, i) {

w[l] = w[l] || [];

w[l].push({

'gtm.start': new Date().getTime(),

event: 'gtm.js'

});

var f = d.getElementsByTagName(s)[0],

j = d.createElement(s),

dl = l != 'dataLayer' ? '&l=' + l : '';

j.async = true;

j.src = 'https://www.googletagmanager.com/gtm.js?id=' + i + dl;

f.parentNode.insertBefore(j, f);

})(window, document, 'script', 'dataLayer', 'GTM-XXXX');

</script>

Another form of non-blocking script uses the defer attribute. This tells the browser that it doesn’t need to wait for the script to download, but that it should only execute the script once all the HTML has been parsed.

<script src="https://cdn.example.com/third-party-tag.js" defer></script>

Avoid document.write as it stalls the browser – the browser can’t discover the external script until document.write executes, and then the browser must wait for the script to be downloaded and run before it can carry on parsing the HTML.

document.write('<script src="https://cdn.example.com/third-party-tag.js"><\/script>');

Tag Managers generally inject tags using a non-blocking approach but occasionally I come across one that still uses document.write.
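When I’m auditing a page, a quick way to find candidates for the blocking pattern described above is to list external scripts that have neither async nor defer. The sketch below does that, and `findBlockingScripts` is a hypothetical helper you could paste into DevTools. It is only an approximation. It treats `type="module"` scripts, which are deferred by default, as non-blocking. It relies on scripts injected dynamically reporting `async` as true, while scripts added via document.write do not.

```javascript
// Sketch: list external scripts that will block the HTML parser when encountered.
// async and defer scripts don't block parsing while they download, and
// type="module" scripts are deferred by default.
function findBlockingScripts(doc) {
  return Array.from(doc.querySelectorAll('script[src]'))
    .filter((script) => !script.async && !script.defer && script.type !== 'module')
    .map((script) => script.src);
}

// In DevTools: console.table(findBlockingScripts(document));
```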

Reducing the Impact of Tags

Our goal is to minimise the impact tags have on visitors’ experience, while still retaining the value those tags provide.

Catalogue the Tags that are Currently Deployed

There are a few ways to catalogue the tags on a page… from inspecting the contents of a tag manager container, through free tools like WebPageTest to commercial tools such as Ghostery and ObservePoint.

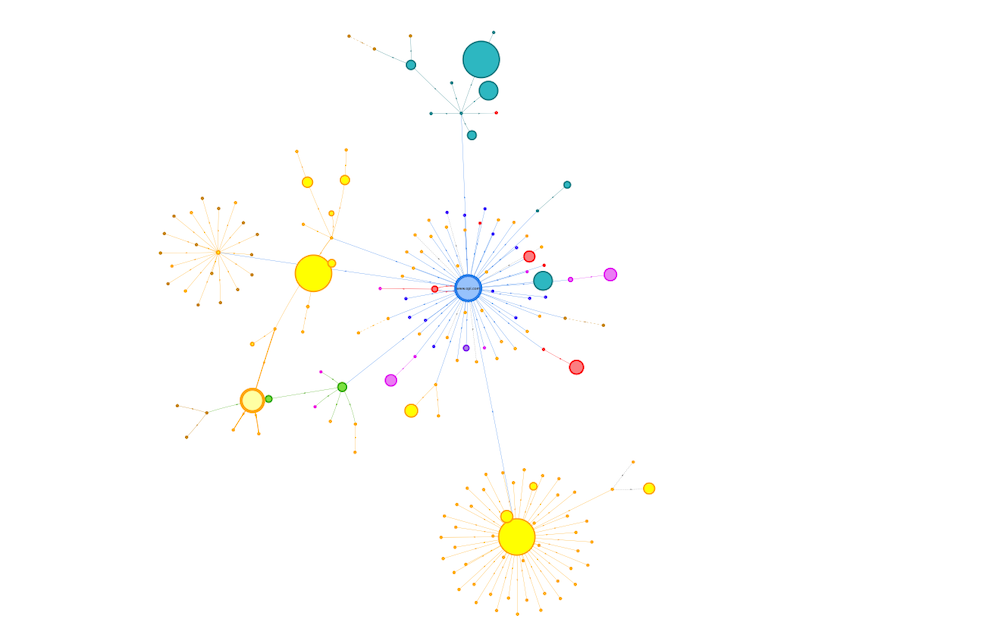

One of my favourite places to start is Simon Hearne’s Request Map – it’s built on top of WebPageTest and visualises the third-parties on a page along with details on their size, type and what triggered their load.

Request Map for OPI Product Page


I often create these for different types of pages across a site and also combine the WebPageTest data to build a cross site view.
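As a sketch of that cross-site view, the function below takes the request URLs captured for several pages (for example, extracted from WebPageTest results) and lists which pages each third-party host appears on. Treating any host outside the site’s own domains as third-party is a simplifying assumption. Subdomains and CDNs the business controls may need adding to the first-party list. The function name and sample data are hypothetical.

```javascript
// Sketch: list which pages each third-party host appears on.
// requestsByPage maps a page label to the request URLs recorded for it.
// firstPartyDomains lists domains treated as first-party (an assumption to tune per site).
function thirdPartyHostsAcrossPages(requestsByPage, firstPartyDomains) {
  const isFirstParty = (host) =>
    firstPartyDomains.some((domain) => host === domain || host.endsWith(`.${domain}`));

  const pagesPerHost = new Map();
  for (const [page, urls] of Object.entries(requestsByPage)) {
    const hosts = new Set(urls.map((url) => new URL(url).hostname));
    for (const host of hosts) {
      if (isFirstParty(host)) continue;
      if (!pagesPerHost.has(host)) pagesPerHost.set(host, []);
      pagesPerHost.get(host).push(page);
    }
  }
  // Most widely included hosts first
  return [...pagesPerHost].sort((a, b) => b[1].length - a[1].length);
}

// Hypothetical data for two page types
const report = thirdPartyHostsAcrossPages(
  {
    home: [
      'https://www.example.com/',
      'https://www.googletagmanager.com/gtm.js?id=GTM-XXXX',
      'https://maps.googleapis.com/maps/api/js',
    ],
    product: [
      'https://www.example.com/p/1',
      'https://static.example.com/app.js',
      'https://www.googletagmanager.com/gtm.js?id=GTM-XXXX',
    ],
  },
  ['example.com'],
);
console.log(report);
```

In this made-up data the tag manager appears on both page types, while the Maps script only appears on the home page.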

Consolidate by Identifying Tags that can be Removed

Once I’ve got an idea of what’s on the page I start analysing and asking questions.

Initially, I aim to identify services where the subscription has lapsed, no-one is using it, or where there’s more than one product providing similar features.

When a subscription expires, some providers helpfully return an error e.g. HTTP 403, others serve an empty script but many carry on serving their full script, so sometimes it can take a bit of digging to identify them.

A few years ago (pre-GDPR) one of the European airlines audited their tags and found that subscriptions had expired for around a third of the tags on their pages, and they couldn’t find anyone who used some of the others.

So immediately with a bit of tidying up they were able to reduce the impact.

Occasionally I come across tags from different vendors that provide similar features, for example session replay services like Mouseflow and HotJar, or analytics services such as Google and Adobe.

Reducing this duplication and consolidating on a single choice is better than having multiple solutions (for both cost and visitor experience). Sometimes there are good reasons to keep more than one analytics service, but try to deduplicate where possible.

Another question to ask at this point is whether the tag is actually needed on the current page, for example I’ve seen the Google Maps script included in every page across a site when there was only a map on one or two pages.

Reduce the Cost of Remaining Tags

When the unused tags have been removed and the duplicates consolidated I start exploring ways to reduce the impact of the remaining tags.

Initially I’ll identify which tags might be replaced with smaller, faster alternatives, and then how we can reduce the cost of the remaining ones.

Lighter Weight Alternatives

Typical wins are replacing the standard embedded YouTube player with a version that delays loading the player script until a visitor interacts with the video.

Or replacing social sharing buttons with lightweight, JavaScript-free versions, or even removing them entirely and relying on visitors using the sharing features built into their browser.
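For the YouTube case, lightweight embeds generally work as a facade: show a thumbnail or button, and only create the real player iframe when the visitor clicks. The sketch below assumes a placeholder element already exists in the page, and the function names are hypothetical. Production-ready components such as Paul Irish’s lite-youtube-embed also handle thumbnails, accessibility and other details that this omits.

```javascript
// Sketch of a click-to-load facade for an embedded YouTube video.
// Nothing from YouTube is fetched until the visitor clicks the placeholder.
function youtubeEmbedUrl(videoId) {
  // autoplay=1 so the video starts from the same click that created the player
  return `https://www.youtube-nocookie.com/embed/${encodeURIComponent(videoId)}?autoplay=1`;
}

function activateFacade(placeholder, videoId, doc) {
  const iframe = doc.createElement('iframe');
  iframe.src = youtubeEmbedUrl(videoId);
  iframe.title = 'YouTube video player';
  iframe.allow = 'autoplay; encrypted-media; picture-in-picture';
  iframe.allowFullscreen = true;
  placeholder.replaceWith(iframe);
  return iframe;
}

function installFacade(placeholder, videoId, doc = document) {
  placeholder.addEventListener('click', () => activateFacade(placeholder, videoId, doc), { once: true });
}

// Usage (browser): installFacade(document.querySelector('#video-placeholder'), 'VIDEO_ID');
```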

Switching providers is another option worth considering – one of my clients switched their chat provider from Zendesk to Olark as it was half the size!

Experimentation Frameworks and Tag Managers

The impact of AB / MV Testing services and Tag Managers can often be reduced by simplifying their work.

The size of tags for testing services often depends on the number of experiments included, number of visitor cohorts, page URLs, sites etc. and reducing these can reduce both the download size and the time it takes to execute the script in the browser.

Out-of-date experiments, A/A tests that are being used as workarounds for CMS issues or development backlogs, and experiments for different sites (staging and live etc.) in the same tag are some of the aspects I look for first.

Similarly, the more tags and rules there are in a tag container, the larger it’s going to be, and so the greater its impact on the visitor’s experience.

A client I worked with last year was using a single container for each geographical region and it contained the tags for every brand site in that region. All these tags were being shipped to every visitor even when most of them wouldn’t be used and the size of the container had a noticeable impact on visitors' experience.

I encouraged the client to switch to one container per brand to reduce its size and improve visitor experience. The challenge for them was that this increased the number of tag containers they needed to manage – there’s always a complexity tradeoff somewhere!

Tracking Pixel and Server-Side Tag Management

Barry Pollard’s approach of replacing some tags with their fallback tracking pixel, instead of using the full tag, is an interesting idea that I’ve not tried with any clients yet.

Server-side tag management helps in a similar way, as the tag manager collates the events and distributes them to other services without including the scripts from those services directly in the page.

Libraries from Public CDNs

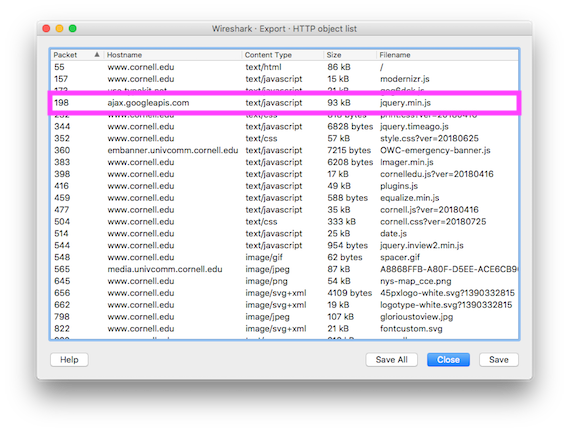

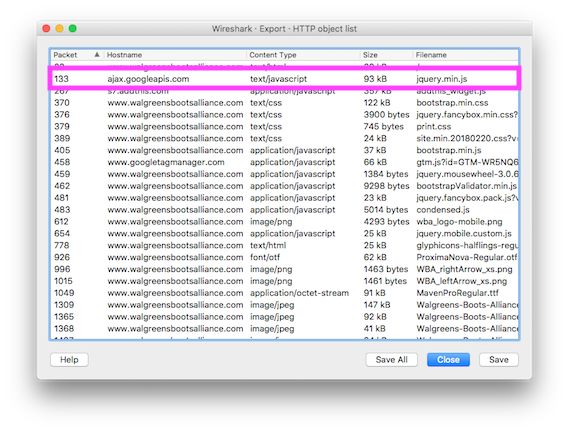

And although they’re not tags I also examine what 3rd-party resources – scripts, stylesheets and fonts such as jQuery, FontAwesome etc. – are being loaded from public CDNs such as jsdelivr or ajax.googleapis.com etc., with the aim of self-hosting them.

Self-hosting allows for more efficient use of network connections, especially if a site is already using a CDN and HTTP/2.

Choreograph when Tags Load

In the performance world we often refer to page load as a journey with milestones along the way… is anything happening, when does a page become useful or usable?

Third-party tags should fit into that journey…

Which ones must be loaded before the page can start displaying content to a visitor, which ones can be delayed until later, and what about the ‘bit in the middle’?

The point at which a tag needs to be loaded depends on what features it provides and when that feature is required.

But too often I see tag managers injecting tags as soon as possible.

Generally I try to delay the load of tags for as long as practical but it depends on the tag’s purpose – is it just collecting data for business use, does it affect or provide content and features that the visitor sees and when does the visitor need to see them?

Before Useful

Tags that are loaded early in the page have an outsized impact on visitor experience, often they’re included in the <head>, and browsers tend to prioritise resources included there.

The key question to answer is “does this tag need to be loaded before the visitor can see content, and what’s the impact if it’s loaded later?”

AB / MV Testing, Tag Managers, Personalisation tools and Analytics are some of the tags that are often loaded in this phase – I tend to leave them embedded in the page, but aim to have as few as possible and slim them down to minimise their impact.

Testing / experimentation tools often have a large impact in this phase.

They tend to take one of two approaches – block the page from rendering until the tag has loaded, or load non-blocking and hide the page using an anti-flicker snippet – and both of these have challenges.

Choosing a blocking approach stops the parsing of HTML until the script has downloaded and been executed.

With the non-blocking approach, anti-flicker scripts hide the page until either the testing framework has executed or a timeout value is exceeded (3 seconds in the case of this example for Adobe Target):

<script>

//prehiding snippet for Adobe Target with asynchronous Launch deployment

(function(g, b, d, f) {

(function(a, c, d) {

if(a) {

var e = b.createElement("style");

e.id = c;

e.innerHTML = d;a.appendChild(e)

}

})(b.getElementsByTagName("head")[0], "at-body-style", d);

setTimeout(function() {

var a=b.getElementsByTagName("head")[0];

if(a) {

var c = b.getElementById("at-body-style");

c && a.removeChild(c)

}

}, f)

})(window, document, "body {opacity: 0 !important}", 3E3);

</script>

I’m not a fan of snippets that hide the page – visitors are familiar with pages loading incrementally, and hiding the page interferes with their perception of speed.

Some will argue anti-flicker snippets avoid a poor visitor experience, and that if visitors see experiments making significant changes to the page it may alter their behaviour.

In the example above, even if the experimentation framework hasn’t finished its work the page is going to be revealed after 3 seconds, so visitors having slow experiences will potentially still see changes as they’re applied anyway.

I’d advise experimenting with whether you really need an anti-flicker snippet, reducing the timeout values, and also measuring the delay the anti-flicker snippet introduces (Simo Ahava has a post on how to measure it for Google Optimize).
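If you do keep a snippet, one way to measure the delay is to use User Timing to record when the page was hidden and when it was revealed. The sketch below is a hypothetical, simplified pre-hiding helper, not Adobe’s or Google’s snippet. It hides the body and reveals it either when `reveal()` is called or after a timeout. Wiring `reveal()` to your testing tool’s “ready” signal is an assumption about how that tool reports completion. It records a measure named after whichever happened first, so the timeout case is visible in your data.

```javascript
// Hypothetical pre-hiding helper that also measures how long the page stayed hidden.
function prehide(doc, timeoutMs) {
  const style = doc.createElement('style');
  style.textContent = 'body {opacity: 0 !important}';
  doc.getElementsByTagName('head')[0].appendChild(style);
  performance.mark('prehide-start');

  let timer;
  function reveal(reason = 'ready') {
    if (!style.parentNode) return null; // already revealed
    clearTimeout(timer);
    style.parentNode.removeChild(style);
    performance.mark('prehide-end');
    // A "prehide:timeout" measure means the experiment wasn't ready in time
    performance.measure(`prehide:${reason}`, 'prehide-start', 'prehide-end');
    const entries = performance.getEntriesByName(`prehide:${reason}`, 'measure');
    return entries[entries.length - 1].duration;
  }

  timer = setTimeout(() => reveal('timeout'), timeoutMs);
  return reveal;
}

// Usage (browser): const reveal = prehide(document, 1000);
// ...then call reveal() once the testing framework has applied its changes.
```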

There are methods to reduce the impact of blocking testing frameworks too.

Casper removed the network connection time by self-hosting their Optimizely script, reducing the delay before content appeared by 1.7s.

As an alternative to self-hosting, Optimizely provides instructions on how to proxy their tag through your own CDN, but proxying can bring security concerns, and you will need additional CDN configuration such as stripping the cookies you don’t want to forward to a third-party.

Testing frameworks are big bundles of JavaScript that need to be downloaded and executed so simplifying them will reduce their impact.

But ideally the work of large or blocking tags should be completed before the page reaches the visitor. Explore how you can implement experiments server-side or CDN-side so they execute before the page is delivered.

Analytics / Attribution Fallbacks

One last thing to watch out for is fallbacks for visitors who have JavaScript disabled – many attribution tags use an image or iframe fallback wrapped in a noscript element.

The fallback for Bing Ads for attribution is one example:

<noscript>

<img src="https://bat.bing.com/action/0?ti=xxxxxxx&Ver=2" height="0" width="0" style="display:none; visibility: hidden;"/>

</noscript>

These fallbacks should be placed in the body of the page as img and iframe aren’t valid elements in the head.

After Usable

Some tags provide features that aren’t much use until a visitor can interact with the page – chat and feedback widgets, session replay services etc. – so I tend to delay their load.

Often these tags are loaded much earlier than needed. Their download competes for the network, often delaying far more important resources such as product images.

I’ll delay the addition of these types of tags using the Window Loaded Adobe Launch event / GTM trigger. Delaying them reduces competition for the network and allows the more important resources to complete sooner.
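For sites that don’t use a tag manager, a plain JavaScript equivalent of the Window Loaded trigger might look like the sketch below. `loadAfterWindowLoad` and the chat widget URL are hypothetical. The helper injects the tag straight away if the load event has already fired, and otherwise waits for it.

```javascript
// Sketch: inject a non-essential tag (chat, feedback, session replay) only after window load.
function loadAfterWindowLoad(src, win = window, doc = document) {
  const inject = () => {
    const script = doc.createElement('script');
    script.src = src;
    script.async = true;
    doc.head.appendChild(script);
  };
  if (doc.readyState === 'complete') {
    inject(); // load has already fired
  } else {
    win.addEventListener('load', inject, { once: true });
  }
}

// Usage (browser): loadAfterWindowLoad('https://chat.example.com/widget.js');
```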

Between Useful and Usable

It’s often clear which tags need to be loaded early and which can be delayed but there’s a grey area between the page starting to render and the page becoming usable.

And as yet, I’ve not developed a clear approach on how to handle the tags that fit into this section.

Often I’m guided by whether the tag provides content the visitor sees, for example I’ll include the tag for a reviews service just before the point in the page where the reviews appear. Inserting it earlier than that may delay more important content, but adding it later can result in the page reflowing once that tag has loaded.

Tags that don’t provide content – analytics, attribution, remarketing etc. – are a bit more tricky.

A TagMan study from several years ago demonstrated that the later a tag was fired, the greater the risk of data loss as visitors abandoned the page before the tag had fired.

These types of tag are ideal candidates for server-side tag management. Only one tag needs to be fired, and the server-side code can distribute the data onwards (cleaning up PII etc. on the way).

But overall, the faster a page is, the less data loss there’s going to be.

Cut Connection Delays

The last area I explore is whether some of the delays caused by creating new network connections can be reduced.

Preconnect Resource Hints are commonly added via an HTTP Link header, or directly in the page using a link element:

<link rel="preconnect" href="https://www.example.com">

By default browsers wait until they’re about to request a resource before they make a connection to a server (assuming one doesn’t already exist) and making this connection ahead of time can bring forward the download of a resource.

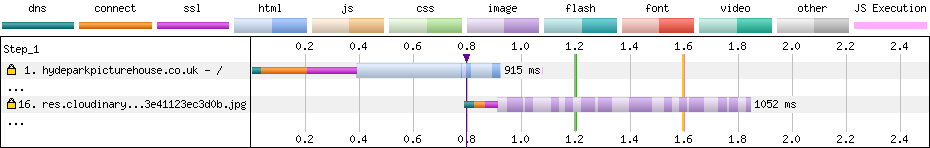

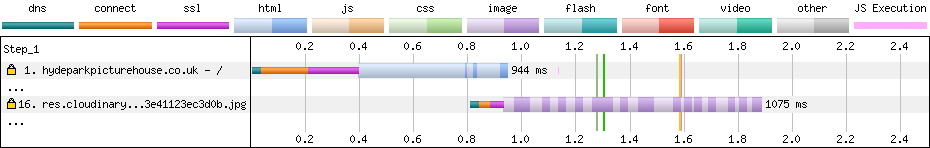

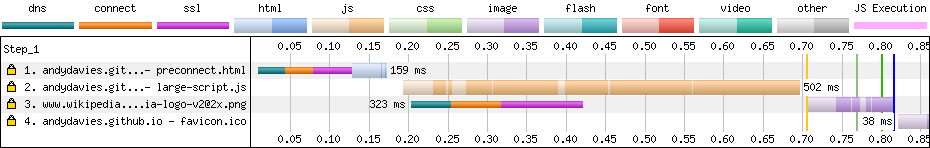

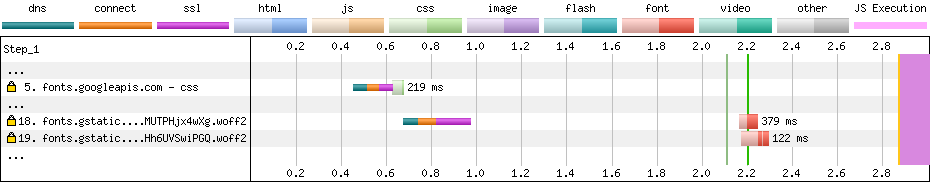

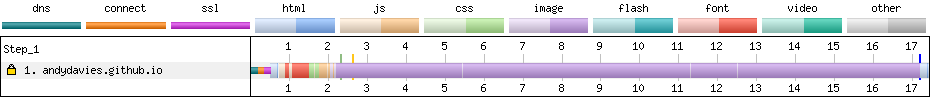

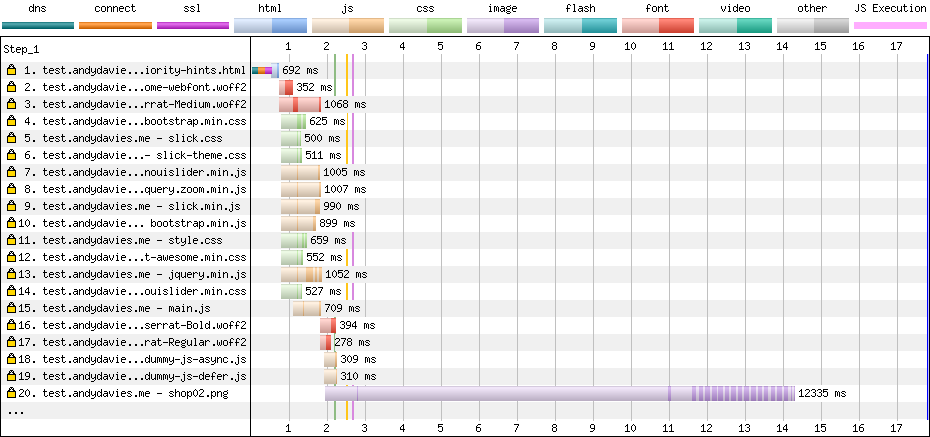

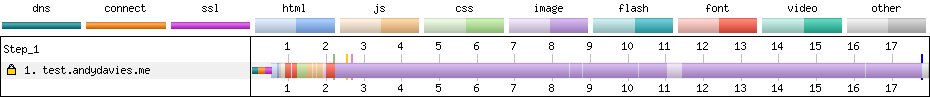

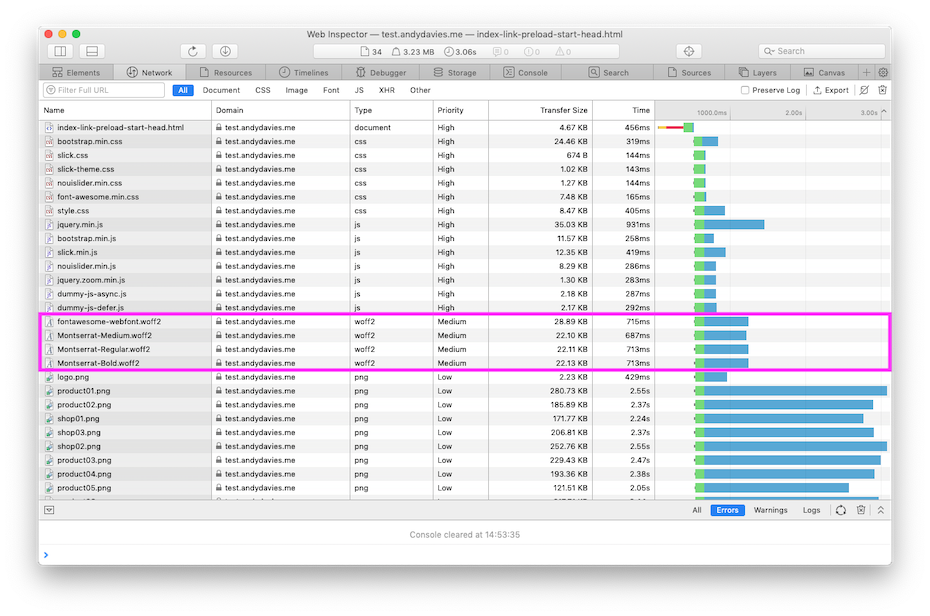

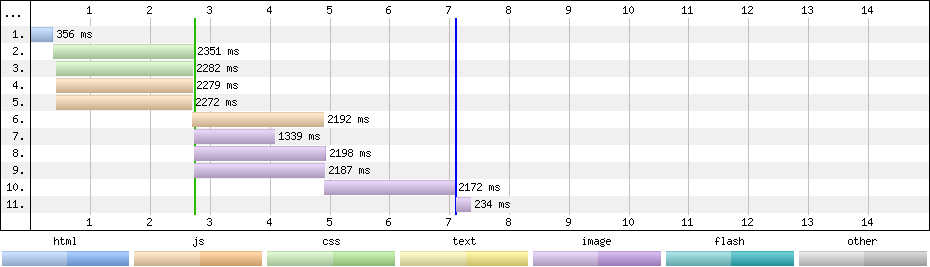

Without preconnect – image download starts at ~1.55s


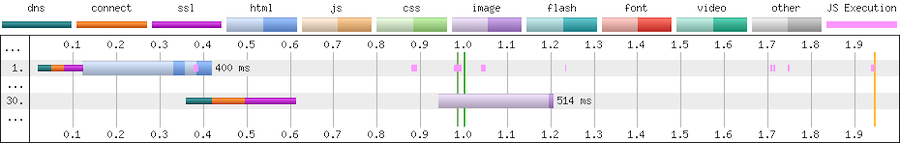

With preconnect – image download starts at ~0.95s


Preconnects are cheap but they’re not free (creating a HTTPS connection consumes bandwidth in the certificate exchange) so don’t overuse them.

For tags later in the page, you can use a tag manager to inject preconnect directives at an appropriate point – for example if a tag is being injected using the Window Loaded trigger, I’ll experiment with injecting the preconnect using DOM Ready trigger.
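Outside a tag manager, the same idea can be sketched in a few lines of JavaScript: add the preconnect once the DOM is ready, ahead of the tag that will later use that origin. The `addPreconnect` helper and the origin are hypothetical. One detail worth knowing is that a preconnect generally needs the crossorigin attribute to help requests made in CORS mode, such as fonts.

```javascript
// Sketch: add a preconnect hint for an origin that a later tag will use.
function addPreconnect(origin, doc = document, { crossOrigin = false } = {}) {
  const link = doc.createElement('link');
  link.rel = 'preconnect';
  link.href = origin;
  if (crossOrigin) link.crossOrigin = 'anonymous'; // for CORS-mode fetches such as fonts
  doc.head.appendChild(link);
  return link;
}

// Usage (browser): preconnect at DOM ready, ahead of a tag injected on window load.
// document.addEventListener('DOMContentLoaded', () => addPreconnect('https://chat.example.com'));
```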

Not every domain needs a preconnect and if you find the need to preconnect to many domains then you’re probably using too many tags.

Taming Tags Delivers Wins

When he was at The Daily Telegraph, Gareth Clubb wrote about the approach they adopted and the experience they had reducing the impact of third-party tags.

Several years ago I was working with a UK fashion retailer, and we found that one of their 3rd-party tags was slowing down visitors who used Android phones by around four seconds. The retailer decided to disable this tag for those visitors and saw a 26% increase in revenue from them.

Encouraged by this early gain the retailer went on to make improvements right across their site and reduced the median load time for Android visitors from over 14 seconds to under 6.

What about OPI?

To see what gains OPI could make if they improved the implementation of their third-party tags, I used a Cloudflare Worker as a proxy to rewrite the page and tested the changes with WebPageTest.

Consolidating and choreographing just a few 3rd-party tags reduced the delay before the product image appeared by one second, and there’s still plenty of opportunity for further improvements to both the base page, and their tag implementation.

Summary

Although I’ve described a sequential process, in reality I adopt a ‘pick and mix’ approach.

Persuading clients to implement ‘quick wins’ such as replacing the YouTube player, or delaying the load of chat and feedback widgets early on in an engagement is a great way of kickstarting an overall performance improvement process.

And like many things performance related, even small incremental improvements soon add up to make a larger difference.

Next time you’re thinking about the impact third-party tags are having on site-speed keep these five principles in mind:

- Catalogue the tags that are being served to your visitors

- Consolidate to remove expired and unused tags, reduce duplication and ensure tags are only included on the pages they are used on

- Reduce the cost of tags by adopting lightweight alternatives, slimming down testing frameworks and Tag Managers. Self-host libraries instead of fetching them from public CDNs.

- Choreograph when tags are loaded so that the most important content gets shown to your visitors sooner

- Cut delays caused by connecting to tag domains

They’re not an exhaustive list of all the things you should consider when managing tags but they’ll help you move in the right direction.

And if you’d like help taming your third-party tags, or generally improving the speed of your site feel free to Get In Touch.

Further Reading

Reducing the Speed Impact of Third-Party Tags (slides)

Measuring the Impact of 3rd-Party Tags With WebPageTest

Adding controls to Google Tag Manager, Barry Pollard

Exploring Site Speed Optimisations With WebPageTest and Cloudflare Workers

Fast Fashion… How Missguided revolutionised their approach to site performance

Google Optimize Anti-flicker Snippet Delay Test, Simo Ahava

How we shaved 1.7 seconds off casper.com by self-hosting Optimizely

Content Delivery Networks (CDNs) and Optimizely

Self-hosting third-party resources: the good, the bad and the ugly

Improving third-party web performance at The Telegraph

And too often that can be a hard question to answer…

I can be pretty sure of the 'direction of travel' – shrinking resources should make them download faster, delaying 3rd-parties should make content appear sooner – but page load can be non-deterministic and un-sharding domains, re-ordering resources or other changes sometimes leads to unexpected results.

Knowledge, experience and lots of testing can help us to prioritise what we think are the appropriate optimisations but often we have to wait until those changes make it to staging (or even live) before we can check the results.

WebPageTest and DevTools can give us clues that we're heading in the right direction but there's a gap that neither of them quite fill – a reliable testing environment that allows us to experiment and make changes to the page being tested.

When we worked together, Simon Hearne prototyped a proxy using mod_pagespeed that optimised pages and illustrated potential performance gains to customers (and accidentally siphoned away a UK airline's search traffic), but its optimisations were limited and it wasn't easy to use.

So, last year, when Pat Meenan and Andrew Galloni started demonstrating what was possible using Cloudflare Workers as a proxy, I guessed they might fill the gap.

But it's taken me a little while to get around to experimenting with them...

Cloudflare Workers

Service Workers are often described as a programmable proxy in the browser – they can intercept and rewrite requests and responses, cache and synthesise responses, and much more.

Cloudflare Workers are a similar concept but instead of running in the browser they run on CDN edge nodes.

In addition to intercepting network requests, there's an HTMLRewriter class that targets DOM nodes using CSS selectors and triggers a handler when there's a match. The handlers can alter the matched elements, for example by changing attributes or even replacing an element's contents.
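As an illustration of that handler pattern, the sketch below removes script elements that load from a list of hosts. It uses HTMLRewriter’s `on()` and `transform()`, plus the element handler’s `getAttribute()` and `remove()`. The `RemoveScriptsFrom` class, the `stripScripts` function and the host name are hypothetical.

```javascript
// Element handler: removes <script src> elements served from any of the given hosts.
class RemoveScriptsFrom {
  constructor(hosts) {
    this.hosts = new Set(hosts);
  }

  element(element) {
    const src = element.getAttribute('src');
    if (!src) return;
    let host;
    try {
      // Resolve relative URLs against a dummy base; they won't match a third-party host
      host = new URL(src, 'https://first-party.invalid/').hostname;
    } catch {
      return; // unparseable src, leave the element alone
    }
    if (this.hosts.has(host)) element.remove();
  }
}

// Inside a Worker's fetch handler: rewrite the proxied HTML response as it streams through.
function stripScripts(response, hosts) {
  return new HTMLRewriter()
    .on('script[src]', new RemoveScriptsFrom(hosts))
    .transform(response);
}

// e.g. return stripScripts(await fetch(request), ['cdn.example-tag.com']);
```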

Andrew Galloni's post – Prototyping optimizations with Cloudflare Workers and WebPageTest – for the 2019 Performance Advent Calendar gives a good overview and guide to get started with them.

How I'm Using Them

Key to the approach I'm using is WebPageTest's overrideHost script command. It allows requests to one domain to be rewritten to another, and sets an x-host HTTP header on the revised request.
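As a rough mental model (an illustration, not WebPageTest's implementation), overrideHost can be thought of as the function below: when a request's hostname matches an overridden host, the hostname is swapped for the proxy's and the original host travels with the request in an x-host header. `applyOverrideHost` and the shape of its return value are hypothetical.

```javascript
// Hypothetical model of what overrideHost does to a single request; it is not
// WebPageTest's code, just an illustration of the rewrite described above.
// `overrides` maps an original hostname to the host requests should go to instead.
function applyOverrideHost(requestUrl, overrides) {
  const url = new URL(requestUrl);
  const headers = {};
  const target = overrides.get(url.hostname);
  if (target) {
    // Keep the original host so the proxy knows which site to fetch from
    headers['x-host'] = url.hostname;
    url.hostname = target;
  }
  return { url: url.toString(), headers };
}

const overrides = new Map([
  ['www.example.com', 'demo-proxy.asteno.workers.dev'],
]);

const rewritten = applyOverrideHost('https://www.example.com/test-page.html', overrides);
console.log(rewritten.url); // https://demo-proxy.asteno.workers.dev/test-page.html
console.log(rewritten.headers['x-host']); // www.example.com
```

Requests to hosts that aren't in the map pass through unchanged and carry no x-host header, which is why the worker treats a missing x-host as an error.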

In the example script below, any requests to www.example.com are rewritten to demo-proxy.asteno.workers.dev, and the x-host header is set to www.example.com for those requests.

overrideHost www.example.com demo-proxy.asteno.workers.dev
navigate https://www.example.com/test-page.html

I start with a simple boilerplate worker and, as the transforms tend to be bespoke for each site, I create a separate worker for each site I'm testing.

The boilerplate script for the worker follows this pattern:

- serves a robots.txt that disallows crawlers
- returns an error if the x-host header is missing
- if the request is for a predefined site, the browser is expecting an HTML response, and the x-bypass-transform header isn't set to true, the proxy uses an HTMLRewriter to modify the response
- otherwise, just proxies the request

/* Started from Pat's example in https://www.slideshare.net/patrickmeenan/getting-the-most-out-of-webpagetest */

/*
 * TODO
 * Add mimetype to robots.txt
 * Add a better doc check, perhaps use a header instead?
 */

const site = 'www.example.com';

addEventListener('fetch', event => {
  event.respondWith(handleRequest(event.request));
});

async function handleRequest(request) {
  const url = new URL(request.url);

  // Disallow crawlers
  if (url.pathname === "/robots.txt") {
    return new Response('User-agent: *\nDisallow: /', {status: 200});
  }

  // When overrideHost is used in a script, WPT sets x-host to original host i.e. site we want to proxy
  const host = request.headers.get('x-host');

  // Error if x-host header missing
  if (!host) {
    return new Response('x-host header missing', {status: 403});
  }

  url.hostname = host;

  const bypassTransform = request.headers.get('x-bypass-transform');
  const acceptHeader = request.headers.get('accept');

  // If it's the original document, and we don't want to bypass the rewrite of HTML
  // TODO will also select sub-documents e.g. iframes, from the same site :-(
  if (host === site &&
      (acceptHeader && acceptHeader.indexOf('text/html') >= 0) &&
      (!bypassTransform || bypassTransform.indexOf('true') === -1)) {
    const response = await fetch(url.toString(), request);
    return new HTMLRewriter()
      .on('selector', new exampleElementHandler())
      .transform(response);
  }

  // Otherwise just proxy the request
  return fetch(url.toString(), request);
}

/*
 * Placeholder handler: replace 'selector' above with a real CSS selector
 * and put the transform for each matched element in element()
 */
class exampleElementHandler {
  element(element) {
    // Do something
  }
}
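Because HTMLRewriter only exists in the Workers runtime, one option (my assumption, not part of the boilerplate above) is to pull the routing decision into a pure function that can be exercised under Node. `chooseAction` and the action names it returns are hypothetical; the checks mirror those in handleRequest.

```javascript
// Hypothetical helper mirroring the checks in handleRequest, so the routing logic can
// be tested under Node without the Workers runtime. `headers` only needs a get(name)
// method: a Headers object inside the worker, or a Map with lowercase keys in tests.
const SITE = 'www.example.com';

function chooseAction(pathname, headers, site = SITE) {
  if (pathname === '/robots.txt') return 'robots';

  const host = headers.get('x-host');
  if (!host) return 'error';

  const accept = headers.get('accept') || '';
  const bypass = headers.get('x-bypass-transform') || '';

  // Only rewrite HTML for the site under test, and only if the bypass header isn't 'true'
  if (host === site && accept.includes('text/html') && !bypass.includes('true')) {
    return 'transform';
  }
  return 'proxy';
}

const page = new Map([['x-host', 'www.example.com'], ['accept', 'text/html']]);
console.log(chooseAction('/test-page.html', page)); // transform
```

Inside the worker, handleRequest would then switch on the returned value, keeping the HTMLRewriter-specific code separate from logic that can be unit-tested.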

Example Transforms

The transforms I'm using are fairly straightforward and mainly consist of unsharding domains, changing the order of the page, or delaying when a resource loads.

Sometimes it's possible to manipulate an existing element in the page, sometimes an element has to be deleted and a replacement inserted elsewhere in the page.

- Unsharding Domains

Requesting frameworks, libraries, etc. from 3rd-party CDNs such as cdnjs and jsDelivr is still very common across many of the customers I work with.

Requesting these from another origin involves creating a new connection and, as HTTP/2 prioritisation only works within a single connection, they may compete for the network with other resources.

One of the first tests I try is directing these requests through the proxy, so they're on the same origin as the page too:

overrideHost www.example.com demo-proxy.asteno.workers.dev
overrideHost ajax.googleapis.com demo-proxy.asteno.workers.dev
navigate https://www.example.com/test-page.html
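When there are several third-party hosts to unshard, the script can be generated rather than written by hand. The helper below is a hypothetical sketch: it emits one overrideHost line per host, pointing at the same proxy, followed by navigate. WebPageTest's scripting documentation describes commands and parameters as tab-delimited, so the sketch joins fields with tabs even though the scripts in this post are shown with spaces.

```javascript
// Hypothetical helper: builds a WebPageTest script that routes the page's own host and
// each listed third-party host through one proxy, so they share the page's origin.
function buildUnshardScript(pageUrl, thirdPartyHosts, proxyHost) {
  const pageHost = new URL(pageUrl).hostname;
  // Page host first, then third parties, with duplicates dropped
  const hosts = [...new Set([pageHost, ...thirdPartyHosts])];
  const lines = hosts.map(host => ['overrideHost', host, proxyHost].join('\t'));
  lines.push(['navigate', pageUrl].join('\t'));
  return lines.join('\n');
}

console.log(buildUnshardScript(
  'https://www.example.com/test-page.html',
  ['ajax.googleapis.com', 'cdnjs.cloudflare.com'],
  'demo-proxy.asteno.workers.dev'
));
```

Each unsharded host still reaches the worker with its own x-host value, so the boilerplate's "otherwise just proxy the request" branch fetches it from the original CDN.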

The proxy could be improved to cache these libraries on Cloudflare, removing the need to fetch them from their origin – one of Pat Meenan's workers has an example of how to do this.

- Deferring inline scripts

Clients often use 3rd-party services that don't need to be loaded until the visitor has a usable page – sometimes these provide outward-facing features such as chat or feedback widgets, other times they may be internal-facing, session replay for example.

I'll often defer the load for these types of services by moving them into a Tag Manager and initiating their insertion using the Window Loaded trigger in Google Tag Manager (GTM).

In one recent example, HotJar was loaded via an async snippet at the start of the head:

(function(h,o,t,j,a,r){
    h.hj=h.hj||function(){(h.hj.q=h.hj.q||[]).push(arguments)};
    h._hjSettings={hjid:xxxxxx,hjsv:x};
    a=o.getElementsByTagName('head')[0];
    r=o.createElement('script');r.async=1;
    r.src=t+h._hjSettings.hjid+j+h._hjSettings.hjsv;
    a.appendChild(r);
})(window,document,'https://static.hotjar.com/c/hotjar-','.js?sv=');

To delay HotJar loading and simulate it being implemented via GTM, I wrapped the HotJar snippet in a native event handler for the window load event.

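class deferInlineScript {
  element(element) {
    // Wrap the inline script's contents in a load listener: prepend() inserts
    // after the opening <script> tag and append() before the closing </script>
    const wrapperStart = "window.addEventListener('load', function() {";
    const wrapperEnd = "});";
    // html: true stops HTMLRewriter escaping the inserted characters
    element.prepend(wrapperStart, {html: true});
    element.append(wrapperEnd, {html: true});
  }
}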
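The wrapper can be sanity-checked outside the worker. `wrapInOnload` and `parses` below are hypothetical helpers: the first builds the same wrapper as deferInlineScript but with newlines around the original code, which guards against a snippet that ends in a `//` comment swallowing the closing `});`; the second uses the Function constructor purely as a syntax check and never calls the function it creates.

```javascript
// Hypothetical helper: the same wrapper deferInlineScript adds, but with newlines
// around the original code so a trailing `// comment` can't swallow the closing `});`.
function wrapInOnload(scriptText) {
  return "window.addEventListener('load', function() {\n" + scriptText + "\n});";
}

// Syntax check only: the Function constructor throws a SyntaxError for invalid code,
// and the function it creates is never called, so `window` doesn't need to exist.
function parses(code) {
  try {
    new Function(code);
    return true;
  } catch (err) {
    return false;
  }
}

const snippet = "(function(h){ h.hj = h.hj || function(){}; })(window); // tracking";
console.log(parses(wrapInOnload(snippet))); // true
```

Without the newlines, this snippet would fail the check, because everything after `//` on that line, including `});`, becomes part of the comment.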

- Moving Third-Party Tags

Qubit's SmartServe is quite a large tag and, even when loaded async, competes for network bandwidth and CPU time in ways that impact performance.

One site I tested implemented the SmartServe tag near the top of the <head>, before any stylesheets.

<script src='//static.goqubit.com/smartserve-xxxx.js' async defer></script>

Its fetch was initiated soon after the page started loading and competed with higher-priority, render-blocking resources, so I wanted to move the element much later in the <head>.

This type of change becomes a two-stage process: one handler removes the script element and a second reinserts it just before the end of the head.

.on('script[src="//static.goqubit.com/smartserve-xxxx.js"]', new removeSmartServe())
.on('head', new reinsertSmartServe())

class removeSmartServe {
  element(element) {
    // Drop the tag from its original, early position in the <head>
    element.remove();
  }
}

class reinsertSmartServe {
  element(element) {
    // append() with html: true inserts the unescaped markup just before </head>
    const text = '<script src="//static.goqubit.com/smartserve-xxxx.js" async defer></script>';
    element.append(text, {html: true});
  }
}

Testing

In initial testing I tend to start with host overrides in WebPageTest, then switch to curl or a browser when developing the HTML rewriting script, and finally switch back to WebPageTest for before and after comparisons.

It's also an iterative process: I'll make some initial changes, test and refine them until I'm happy with their impact, and then go around the loop again.

- curl

To test the HTML rewriting using curl, both the x-host and accept headers need to be set.

curl -H "x-host: www.example.com" -H "accept: text/html" https://demo-proxy.asteno.workers.dev/test-page.html

Piping curl's output to a file or to a pager such as less makes it easier to read.

- Browser

For in-browser testing of HTML rewriting I've been using Chrome, setting the x-host header with the ModHeader Extension and then loading the page via the proxy i.e. https://demo-proxy.asteno.workers.dev/test-page.html

This approach only allows the initial host to be overridden, so it can't be used to test unsharding domains.

- WebPageTest

Finally when I'm happy with the host overrides and HTML rewrites I switch back to WebPageTest and generate before (baseline) and after tests.

I've found that some sites get faster when proxied through Cloudflare's network, so I still use the proxy when generating a baseline for comparison, but set the x-bypass-transform header to true so the HTML transforms aren't applied.

setHeader x-bypass-transform: true
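The curl check and the baseline comparison can also be scripted. This is a hypothetical sketch: `buildProxyRequest` and `compareHtml` are illustrative names, the hostnames are the example ones used throughout, and compareHtml assumes Node 18+ for the global fetch. It requests the same page through the proxy with and without x-bypass-transform, giving the transformed and baseline HTML side by side.

```javascript
// Hypothetical helper: the headers the curl command sets, plus the optional bypass
// header used for baselines. Returns plain values so they can be passed to fetch().
function buildProxyRequest(path, { proxy = 'demo-proxy.asteno.workers.dev', host = 'www.example.com', bypass = false } = {}) {
  const headers = { 'x-host': host, accept: 'text/html' };
  if (bypass) {
    // Ask the worker to proxy the page without applying the HTML transforms
    headers['x-bypass-transform'] = 'true';
  }
  return { url: `https://${proxy}${path}`, headers };
}

// Usage sketch (needs network access): fetch both versions of the page in parallel
async function compareHtml(path) {
  const get = async opts => {
    const { url, headers } = buildProxyRequest(path, opts);
    const response = await fetch(url, { headers });
    return response.text();
  };
  const [transformed, baseline] = await Promise.all([get({}), get({ bypass: true })]);
  return { transformed, baseline };
}
```

Writing the two strings to files and diffing them shows exactly what the HTMLRewriter handlers changed.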

Gotchas

A few issues have tripped me up while I was writing and testing proxies:

- overrideHost and Service Workers

WebPageTest's overrideHost command doesn't seem to work with requests dispatched from a Service Worker; the request always seems to default back to the original host.

From reading the code and talking to Pat, it appears it should work, but I've not had time to debug this issue further yet.

- overrideHost and non-Chromium browsers

I could only get overrideHost to work in Chromium based browsers – Chrome, Mobile Chrome and Edge.

- Fragile Selectors

When rewriting the HTML, I sometimes have to rely on fragile DOM queries, for example this selector to target the first script element in the head: head > script:nth-of-type(1).

And as there's currently no way to extract the contents of an element, I can't check that the element passed to the handler is the one I wanted to target.

More specific selectors, for example those that use an id or src attribute, are more robust.

- Differing DOMs

The DOM that HTMLRewriter operates on is not the same as the DOM shown in the Elements tab in DevTools, because the rewriter doesn't execute scripts, so by default the DOM queries can't be tested in the browser.

Using DevTools to block all requests except the one for the source HTML document and then checking the queries from the console is one way around this.

Closing Thoughts

Even though I've only used the combination of WebPageTest and Cloudflare Workers with a few sites, it's clearly a powerful one, and it's likely to become a regular part of my client workflow.

At BrightonSEO I'm talking about Reducing the Speed Impact of Third-Party Tags and as much as I can talk about the theory, nothing beats a good demo.

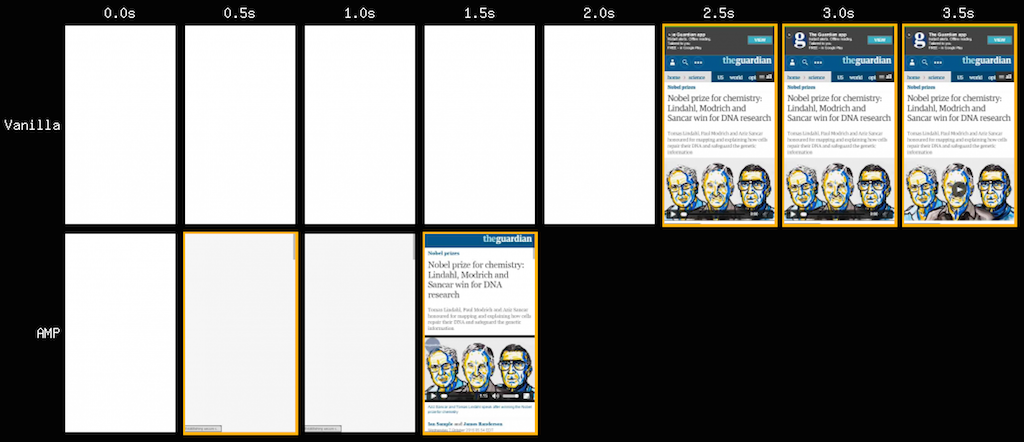

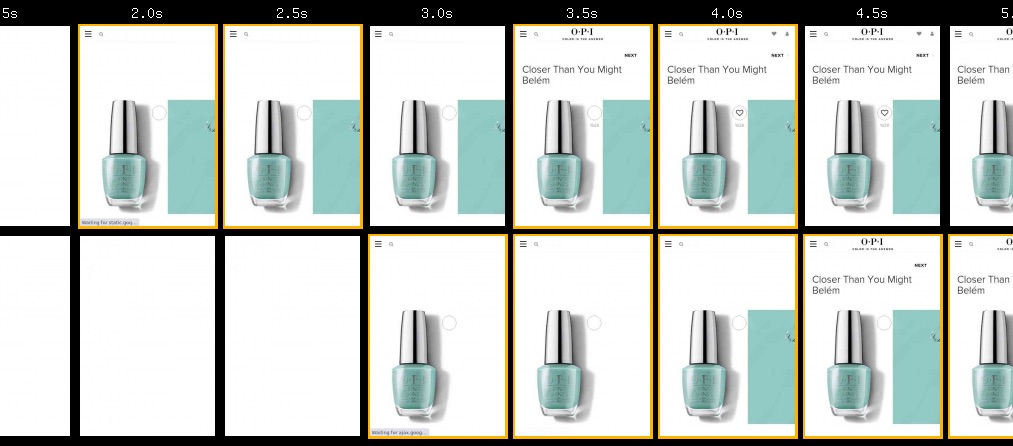

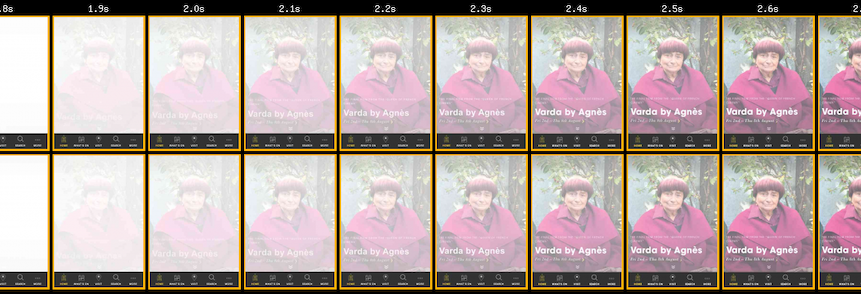

For my demo I used a worker to re-write parts of the page and choreograph how 3rd-party tags were loaded. The changes improved Largest Contentful Paint by a second for OPI's product page (top row).

The filmstrip is for an uncached view of the page, and although there's still plenty of room for improvement in the initial render time, it illustrates how a proxy can be used to quickly evaluate changes before committing them to the development lifecycle.

There are plenty of other optimisations to try, from replacing an embedded YouTube player with a lazy-loaded version or adding the loading="lazy" attribute to out-of-viewport images, through to using Cloudflare's image optimisation and text compression features to reduce payload sizes.

A few clients ask me to evaluate the performance impact of 3rd-party tags before they implement them. As part of this process I typically query the HTTP Archive to find another site that uses the same tag and then test that site with and without the tag. Using a proxy I could inject the tag into the client's site and see what impact it has.

As yet, I've not got as far as rewriting or replacing external scripts and stylesheets, or exploring how Cloudflare's cache and key-value store can be used in the testing process.

But if you'd like some more sophisticated examples of the types of optimisations that can be implemented using Cloudflare's Workers, Pat Meenan has a collection of examples on GitHub.

Further Reading

Prototyping optimizations with Cloudflare Workers and WebPageTest, Andrew Galloni, Dec 2019

Pat Meenan's collection of Cloudflare Workers

Cloudflare Workers documentation

The Prefetch Resource Hint allows us to tell the browser about resources we expect to be used in the near future, so they can be fetched ready for the next navigation.

Several of my clients have implemented Prefetch – some are inserting the markup server-side when the page is generated, and others are injecting it dynamically in the browser using Instant Page or similar.

A while back I noticed Chrome was making requests for prefetched resources much earlier than I expected and in some cases the prefetched resources were competing with other more important resources for the network.

As the specification makes clear, this is something we want to avoid:

Resource fetches required for the next navigation SHOULD have lower relative priority and SHOULD NOT block or interfere with resource fetches required by the current navigation context.

So how do browsers behave, and how does the choice of server affect the outcome?

Test Case

The tests in this post are based on a modified version of the Electro ecommerce template from ColorLib with the following prefetch declarations added in the <head> of the document:

<link rel="prefetch" href="https://www.wikipedia.org/img/Wikipedia-logo-v2.png" as="image" />
<link rel="prefetch" href="dummy-subresources/styles.css" as="style" />
<link rel="prefetch" href="dummy-subresources/scripts.js" as="script" />
<link rel="prefetch" href="dummy-subresources/image.jpg" as="image" />

The collection of test pages I used is available on GitHub.

All the browsers tested – Firefox, Chrome, new Edge and Safari – issue prefetch requests with a low priority, but when the requests are dispatched varies between browsers.

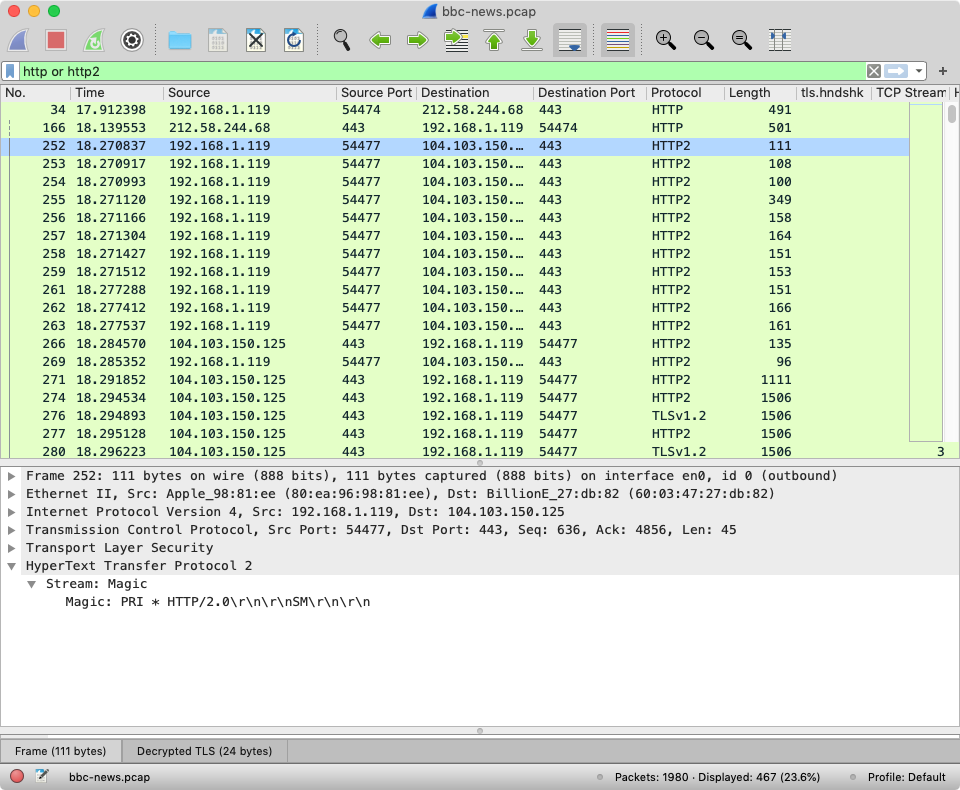

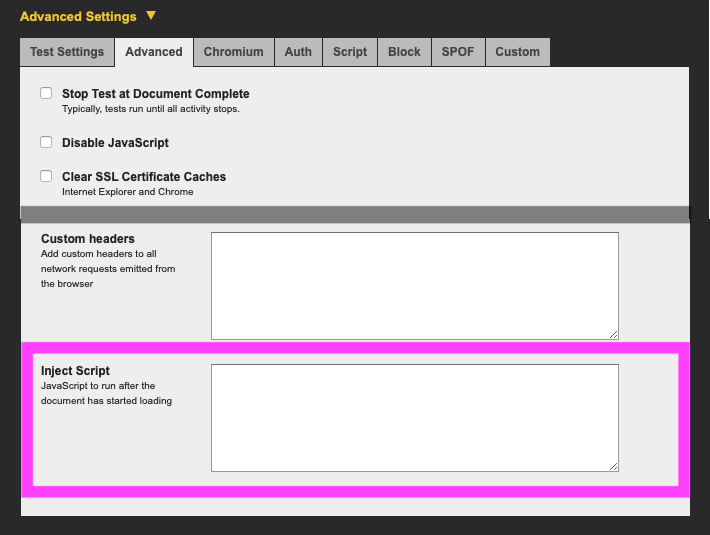

Chrome, new Edge and Safari dispatch the prefetch requests sooner than Firefox and rely on HTTP/2 prioritisation to schedule the requests appropriately against other resources.

As support for HTTP/2 prioritisation varies from very good to non-existent depending on the server, this approach can lead to prefetched resources competing for network capacity.
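A deliberately simplified model shows why the server's behaviour matters here. It assumes all requests are pending at once and that the server sends whole responses one at a time, either choosing the most important pending response or serving in arrival order. Real HTTP/2 servers interleave frames and use richer priority schemes, and the resource names and priority numbers below are made up.

```javascript
// Toy model only, not how any real server schedules HTTP/2 streams.
// Lower priority number = more important.
function serveOrder(requests, honoursPriority) {
  if (!honoursPriority) {
    // Server ignores priorities: responses go out in the order requests arrived
    return requests.map(r => r.name);
  }
  // Array.prototype.sort is stable, so equal priorities keep their arrival order
  return [...requests]
    .sort((a, b) => a.priority - b.priority)
    .map(r => r.name);
}

// Hypothetical page where the prefetch request is dispatched early
const requests = [
  { name: 'styles.css', priority: 0 },
  { name: 'prefetch: image.jpg', priority: 4 },
  { name: 'app.js', priority: 1 },
  { name: 'font.woff2', priority: 0 },
];

console.log(serveOrder(requests, true).join(' > '));
// styles.css > font.woff2 > app.js > prefetch: image.jpg
console.log(serveOrder(requests, false).join(' > '));
// styles.css > prefetch: image.jpg > app.js > font.woff2
```

In the second ordering the prefetched image is sent before the script and font the current page needs, which is the kind of competition the specification asks browsers to avoid.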

Servers with Good HTTP/2 Prioritisation

The first set of examples are served using h2o, a server that’s known to support effective HTTP/2 prioritisation, running on a $5/month Digital Ocean droplet.

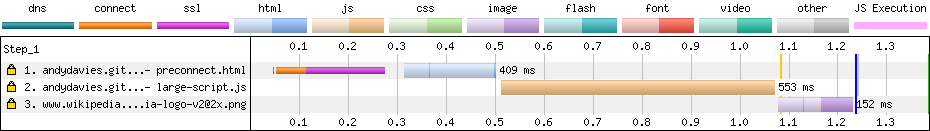

- Firefox

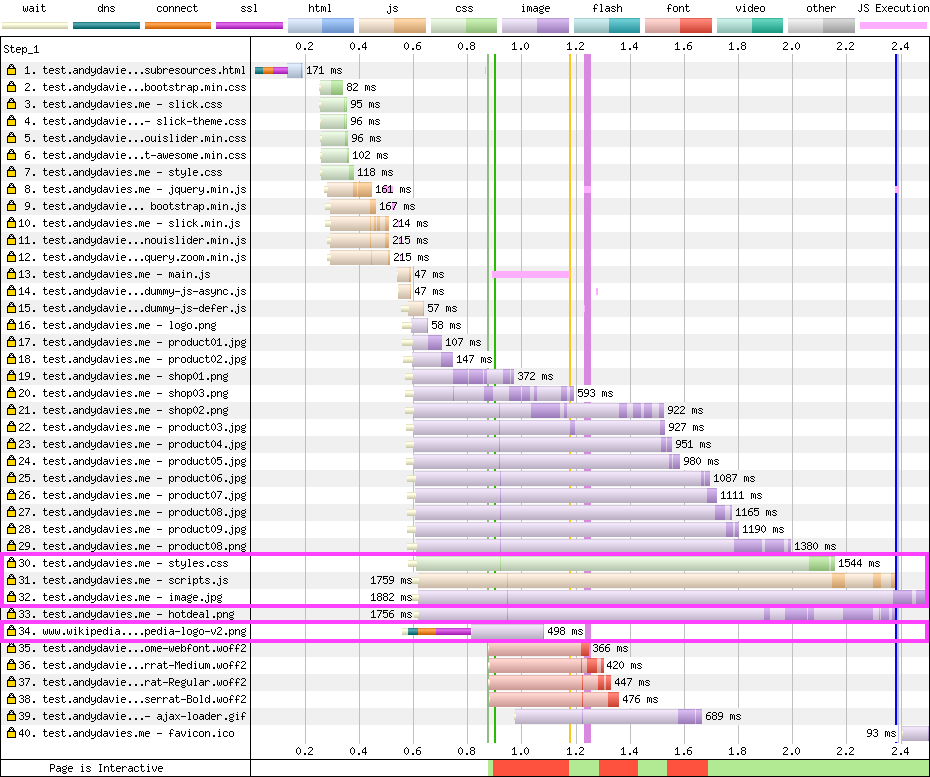

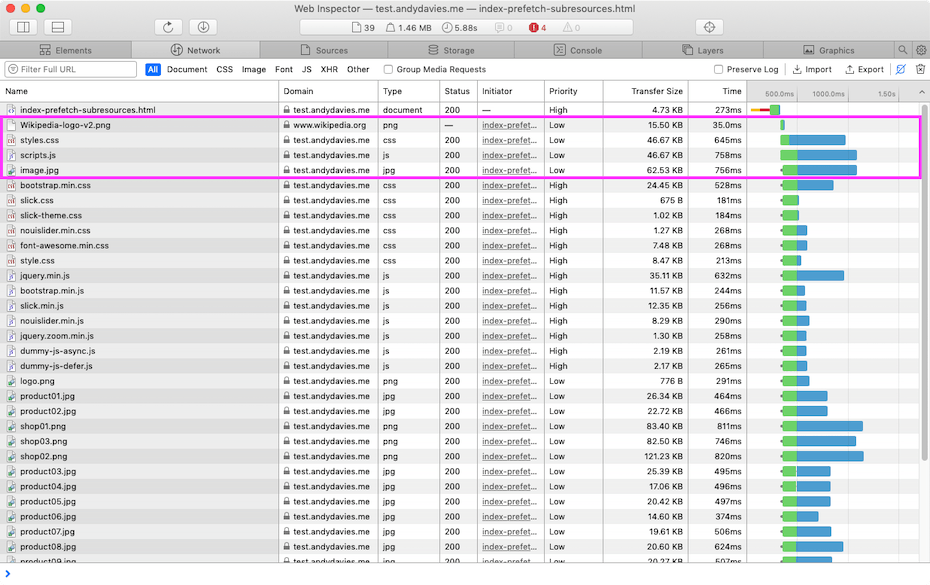

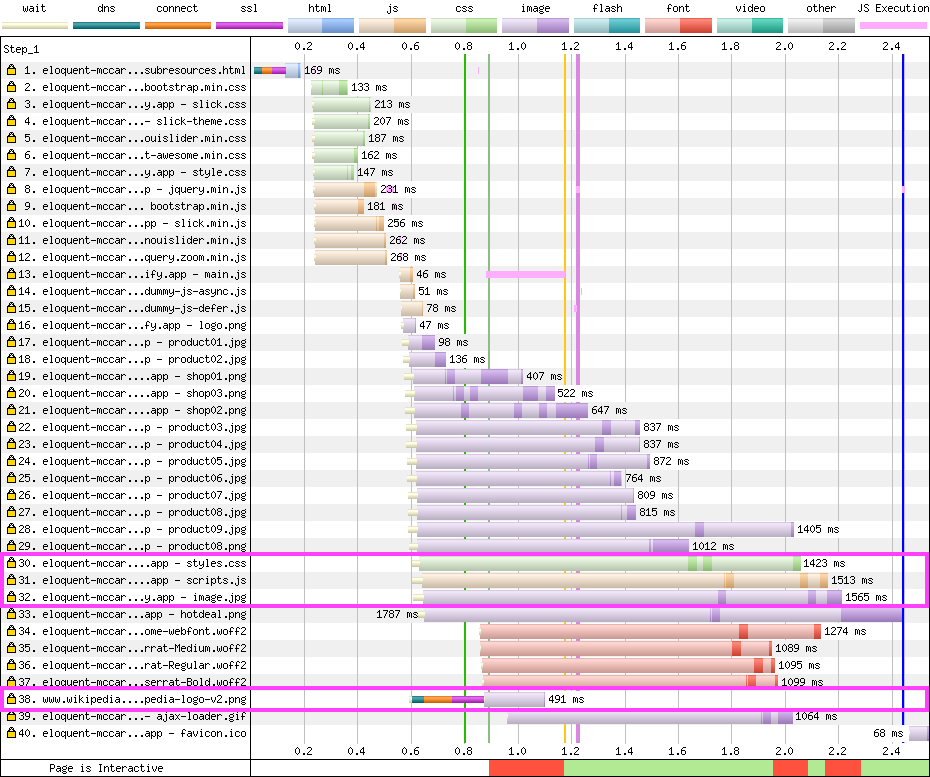

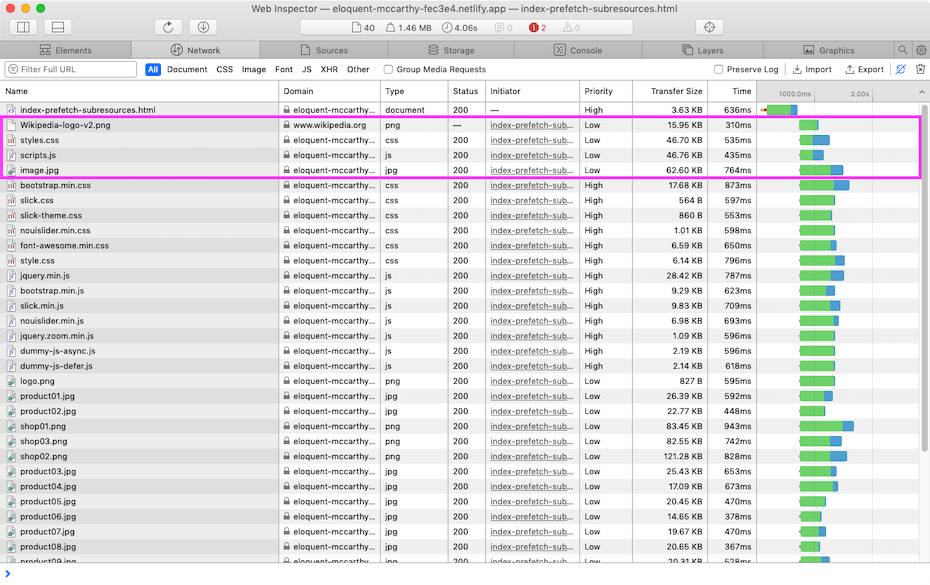

Firefox delays issuing the prefetch requests (37, 38, 39, 40) until the network is quiet. In this example they’re actually dispatched after the load event, but I’ve also seen them dispatched in the middle of page load when the network was quiet.

Resource Hints in head of Page - h2o tested with Firefox / Dulles / Cable


- Chrome and Edge

Chrome schedules the requests for prefetched resources alongside those referenced in the body of the document, as part of its ‘second stage load’.

It delays dispatching the prefetch requests (30, 31, 32, 34) until after the other resources referenced in markup, but the prefetch requests are still made before those for ‘late-discovered’ resources such as background images and fonts.

The server correctly delays the responses for resources prefetched from the test page origin until the other higher priority resources have been served.